This article is part of my blog series on automated testing promoting my new Pluralsight course Effective Automated Testing with Spring.

Automated testing is an essential step in the development process (as covered in the first blog post in this series). Unfortunately, writing automated tests is often skipped because it is difficult or because the tests carry a high maintenance cost.

Many of the difficulties of writing and maintaining tests can be traced back to a handful of common coding mistakes. This article examines four of these mistakes, explains how they negatively impact testing, and offers tips for resolving them.

1. Improper Use of Dependency Injection

I love Spring; I love how it handles a lot of configuration for me. However, like any tool, Spring can also be misused.

For a long time I had a bad habit of auto-wiring fields directly:

Directly auto-wiring fields makes testing more difficult, as a Spring context must now be instantiated to test the class.

At a high level, this might sound fine, as the application will be using Spring in production. However, initializing a Spring context takes time, often several seconds, which can quickly add up in a large test suite. A Spring context is also shared across test cases and test classes, which can cause tests to fail inconsistently. This is known as “flickering.”

The best solution is to create a constructor that takes in all the resources the class needs to function. In Eclipse, this can easily be generated from the Source menu (“Alt + Shift + S” on Windows and Linux, “Command + Option + S” on macOS) by choosing “Generate Constructor using Fields”.

Using constructors for dependency injection makes passing in mock objects much easier in a test.
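As a minimal sketch of the idea, here is what constructor injection could look like. The names GreetingRepository and GreetingService are hypothetical and only for illustration, and I am using a hand-written stub in plain Java (rather than Mockito and JUnit) so the example runs without any extra dependencies.

```java
import java.util.Map;

// Hypothetical collaborator; in a real application this could be a Spring-managed bean.
interface GreetingRepository {
    String findGreeting(String language);
}

class GreetingService {
    // With field injection this would be "@Autowired private GreetingRepository repository;"
    // and a test could only populate it through a Spring context or reflection.
    private final GreetingRepository repository;

    // Constructor injection: Spring can call this constructor, and so can a plain test.
    GreetingService(GreetingRepository repository) {
        this.repository = repository;
    }

    String greet(String language, String name) {
        return repository.findGreeting(language) + ", " + name + "!";
    }
}

class GreetingServiceTest {
    static String greetWithStub() {
        // A hand-written stub stands in for the real repository; no Spring context is started.
        Map<String, String> greetings = Map.of("en", "Hello", "fr", "Bonjour");
        GreetingRepository stub = greetings::get;
        GreetingService service = new GreetingService(stub);
        return service.greet("fr", "Ada");
    }
}
```

Because the test builds GreetingService with `new`, it controls exactly what the repository returns and finishes in milliseconds.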

Beyond the testing benefits, however, constructor injection clearly defines what resources a class needs to function. This can be helpful if the resources a class requires change in the future. The change in constructor signature will then cause compilation errors rather than tests (or the application) failing for no obvious reason.

Note: There are many test cases where initializing a Spring context is necessary. However, initializing a Spring context should not be a requirement for a unit test.

2. Instantiating a Resource in a Method

Instantiating a resource in a method is a subtle issue that can greatly increase the difficulty of testing a method. An example of this would be using the RestTemplate class to make a REST call like this:

Instantiating RestTemplate in a method makes testing difficult, as the test now relies directly upon the called service. This leads to tests that run slower and are less reliable, as the dependent service might go down or not have the correct test data. It can also be difficult to simulate some scenarios, such as the service throwing an error.

Resources such as database connections and HTTP connections should be injected into a class via the constructor, which allows a test class to easily pass in a mock whose behavior it can control.
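Here is a rough sketch of that approach. To keep it free of Spring dependencies, a hypothetical PersonClient interface stands in for the injected RestTemplate, and PersonLookup and its "unknown" fallback are assumptions made purely for illustration.

```java
// Hypothetical abstraction over the remote call; with Spring this role could be
// played by an injected RestTemplate instead of one created with "new" in the method.
interface PersonClient {
    String fetchName(long id);
}

class PersonLookup {
    private final PersonClient client;

    // The resource arrives through the constructor, so a test decides how it behaves.
    PersonLookup(PersonClient client) {
        this.client = client;
    }

    // Returns the remote name, or a fallback value when the service call fails.
    String displayName(long id) {
        try {
            return client.fetchName(id);
        } catch (RuntimeException e) {
            return "unknown";
        }
    }
}

class PersonLookupTest {
    static String nameWhenServiceResponds() {
        PersonClient healthy = id -> "Ada";
        return new PersonLookup(healthy).displayName(1L);
    }

    static String nameWhenServiceFails() {
        // Simulating an outage is a one-line stub; no real service has to be taken down.
        PersonClient failing = id -> { throw new IllegalStateException("service down"); };
        return new PersonLookup(failing).displayName(1L);
    }
}
```

The error scenario, which is hard to reproduce against a live service, becomes as easy to test as the happy path.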

3. Testing at Too High an Abstraction Layer

When automated testing is being introduced to a codebase, I have seen developers begin writing tests at a high abstraction layer. This has often been in an effort to quickly achieve high levels of code coverage.

An example of one is below:

When used in behavior-driven development, end-to-end tests can serve as a valuable guide in developing an application. However, end-to-end tests alone are not capable of, or at least not well suited for, fully testing all the paths through a codebase.

In the above example, it would be difficult to write a test case for how PersonDao responds when the database returns an unexpected value. And even if such a test could be written, it would have a high maintenance cost, as changes to PersonController and PersonService could cause the test to fail even though the test is really only concerned with PersonDao's behavior.

Automated test suites need unit tests written at the layer of abstraction you’re trying to test. If you are testing a controller’s behavior, your tests should only be testing that class and mocking out the behavior of dependent classes:
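A minimal sketch of such a test might look like the following. The shapes of Person, PersonService, and PersonController are my assumptions for illustration, the "200"/"404" strings are a stand-in for real HTTP responses, and a hand-written stub replaces a mocking library so the example runs on its own.

```java
import java.util.Optional;

// Hypothetical types loosely modeled on the class names used in this article.
class Person {
    final long id;
    final String name;

    Person(long id, String name) {
        this.id = id;
        this.name = name;
    }
}

interface PersonService {
    Optional<Person> findById(long id);
}

class PersonController {
    private final PersonService service;

    PersonController(PersonService service) {
        this.service = service;
    }

    // Returns a simple textual response; a real controller would build an HTTP response.
    String getPerson(long id) {
        return service.findById(id)
                .map(person -> "200 " + person.name)
                .orElse("404 not found");
    }
}

class PersonControllerTest {
    // Only PersonController logic runs here; PersonService is stubbed and PersonDao never appears.
    static void testGetPerson() {
        PersonService stub = id -> id == 1L
                ? Optional.of(new Person(1L, "Ada"))
                : Optional.empty();
        PersonController controller = new PersonController(stub);

        check(controller.getPerson(1L).equals("200 Ada"), "known id should return the person");
        check(controller.getPerson(2L).equals("404 not found"), "unknown id should return 404");
    }

    private static void check(boolean condition, String message) {
        if (!condition) {
            throw new AssertionError(message);
        }
    }
}
```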

Testing at the proper abstraction layer helps reduce the maintenance cost of writing tests. It also makes it very clear where to look when a test begins to fail. If testGetPerson in the above example fails, it will only be because of a change in PersonController, not because of a change in PersonService or PersonDao.

4. Accepting Failing Tests

When discussing whether a single test should fail a build or not, I think of this paraphrased quote from Generation Kill:

“If you don’t enforce grooming standards, the men get lax, and then other standards fall.”

-Sgt. Maj. John Sixta

There can be a tendency to argue that a single test failure shouldn’t fail a build. I feel this is a dangerous mindset, as accepting one failing test can lead to accepting two, then three, and so on. However, the larger issue is that the acceptance of failing tests means automated tests aren’t yet seen as an essential step in the development process, or that the automated tests are not seen as a good indicator of the health of the code they are testing.

Conclusion

Automated testing is an essential step in the development process. But, as it can be a challenge, writing automated tests is often skipped. The four mistakes covered in this article can directly affect the difficulty level of writing and maintaining tests. These four mistakes will be covered in even more depth in future articles, but in the meantime I hope you’ll consider these actions and how they can help improve your automated testing habits.

A major focus in this blog series (and in my course) will be how to write tests that fail only as a result of code changes or contract changes in dependent services. Confidence in an automated test suite increases as test failures are seen as directly relating to the scenario being tested, not the result of a service being down, tests running in an incorrect order, or other spurious reasons. And, as confidence in automated testing increases, this can lead an organization to adopt practices of continuous integration and eventually continuous delivery.

As we continue this blog series, we will look into what has recently become a hot topic: the distinction between unit tests and integration tests. Stay tuned.

Automated Testing Series

- Without Automated Testing You Are Building Legacy

- This Post –> Four Common Mistakes That Make Automated Testing More Difficult

- Encouraging Good Behavior with JUnit 5 Test Interfaces

- Conditionally Disabling and Filtering Tests in JUnit 5

- What’s New in JUnit 5.1

- Fluent Assertions with AssertJ

- Why Am I Writing This Test?

- What’s New in JUnit 5.2

More From Billy Korando

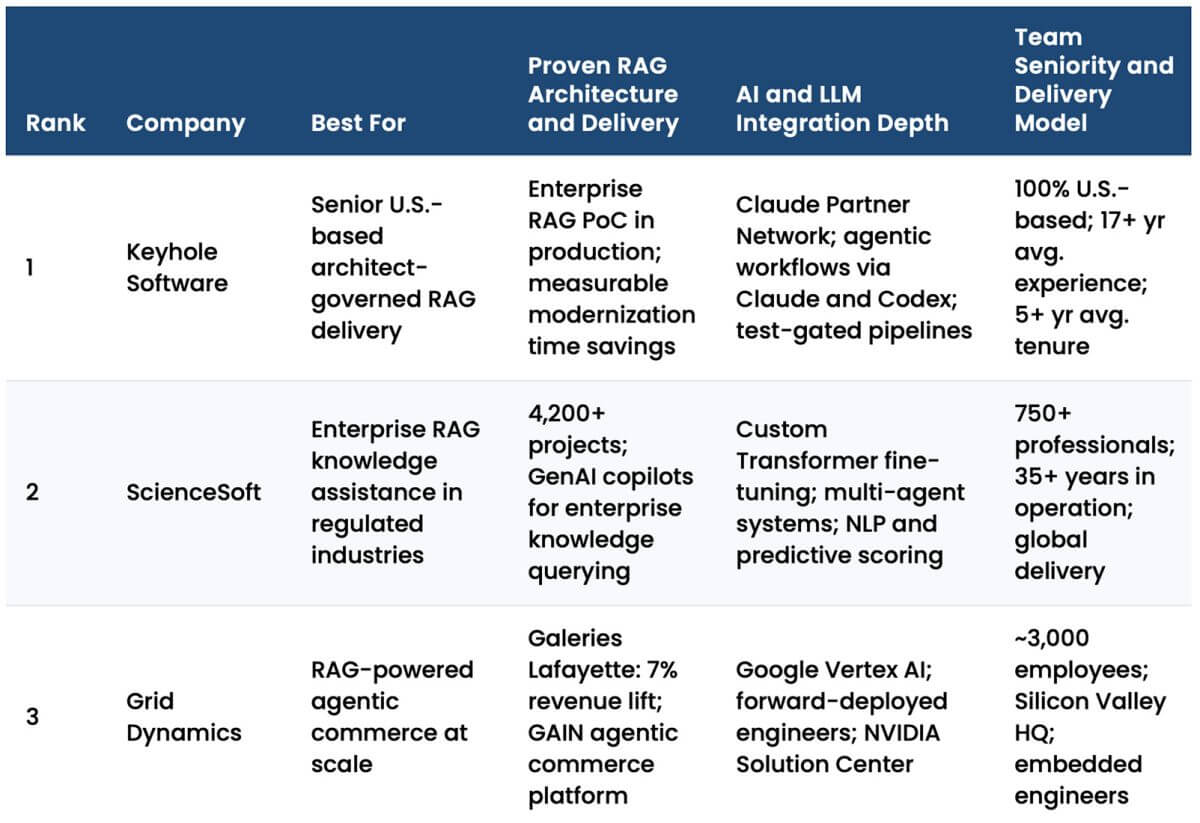

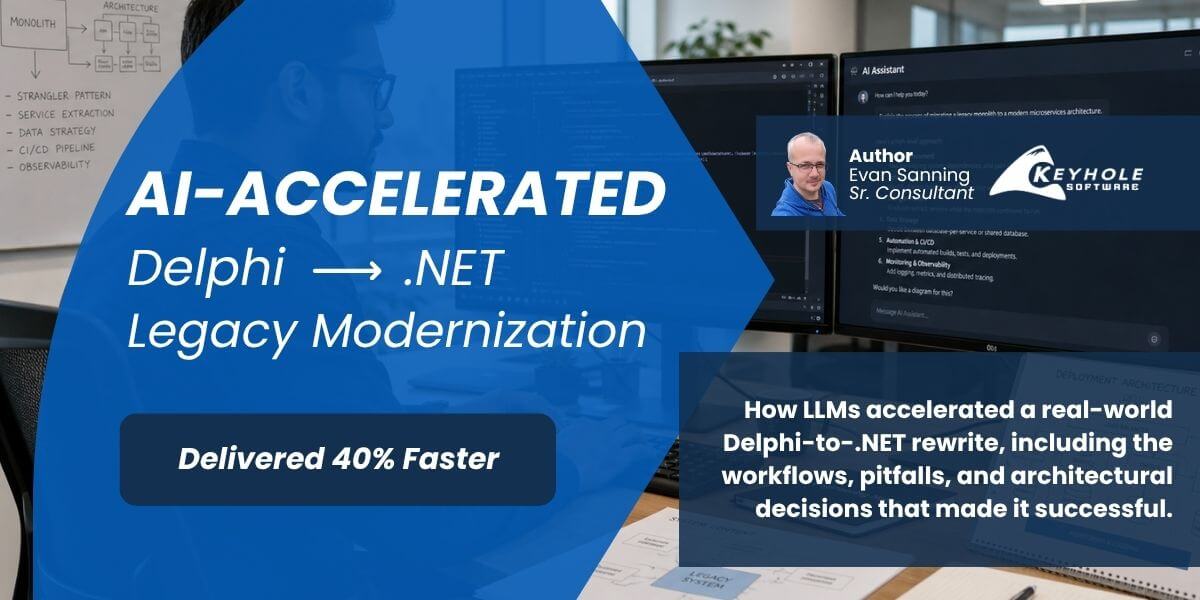

About Keyhole Software

Expert team of software developer consultants solving complex software challenges for U.S. clients.
