How to Turn an Idea into an App: Technical Lessons from Pennies-AI (Part 1)

February 23, 2026

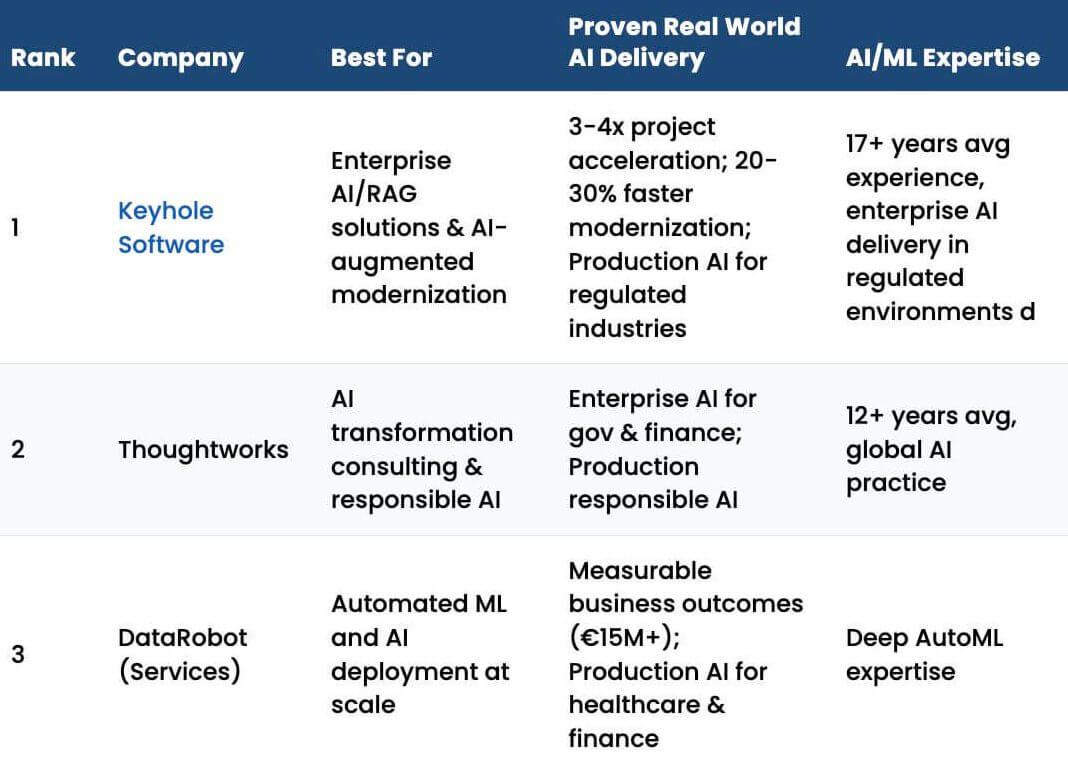

We’re all seeing the fabulous claims today about building complete websites and mobile apps in hours using AI. While these tools are impressive, as a professional developer with decades of experience, I would suggest taking those claims with multiple giant grains of salt. Building production-quality software, especially software that handles sensitive data, is still hard. Attempting to do it with no background in coding has already landed people in situations where they eventually had to reach out to professional developers, like us at Keyhole, for help.

That context matters for this story, because the project I’m about to describe didn’t start as an attempt to “build an app quickly.” It started as a way to learn a new language in a way that made sense to me. The app came organically from that experience.

This first part (out of four) focuses on the technical foundation of Pennies-AI: how the idea formed, how the stack evolved, and why many features that look simple to users are anything but simple to implement correctly. Without further ado, let’s dive in.

The Why Behind Pennies-AI, Explained

Back in 2024 (before agentic AI had really taken off), I was tasked with learning a new language, Python, to help with a project that was underway. Due to the nature of the work, I needed to learn it and become productive quickly.

Learning by Doing

One might think that taking a course would be the best way to learn a new language. For someone without a lot of coding experience, a course can be very useful. However, for a senior developer with many years of experience and a plethora of languages in my toolbelt, most courses tend to be repetitive. To become productive with a new language, I don’t need to relearn general programming concepts. I need to understand the syntax, language-specific nuances, order of operations, and things like numeric side effects and rounding behavior.

So, I spent a few days working through Python tutorials, learning these things. And at that point, I felt ready to put it to use.

Attempting to write production code with a brand-new language, however, is not the right way to succeed. On the other hand, the simple exercises found in typical coding courses don’t go deep enough to really show how the language will work in practice.

My favorite way around this dilemma is to choose something I want to explore and create a project to implement that idea. That project forces me to deal with real-world concerns while learning the language naturally.

Building Something Real

This is how the idea for Pennies-AI began. I wanted to create something like a stripped-down version of Quicken. I had used Quicken from the time it came out in the 1990s until 2025, and while it worked, it was frustrating to use. As an old codebase, it gets quite slow when there are thousands of rows of transactions in accounts. The connections to my financial institutions were fragile; some were not supported at all, others broke often, and only a few worked well. Searching across the data meant using the clunky reporting system, and the final straw was that the mobile app was (and is) extremely limited.

So why not just use Mint? Well, Mint was acquired by Quicken and summarily killed. I tried some other alternatives, too, but I just didn’t like them.

What started as a learning exercise quickly became my passion and a serious project. Building it forced me to stand up a server, create a client, handle precise calculations, and work my way through issues like long-lived sensitive data, high performance, and securing data with “at-rest” encryption in the database. Not only did it solve a personal frustration of mine, Pennies-AI also gave me firsthand experience handling the sort of problems I would see when I started writing “real” production code with Python for my client.

With the direction set, the next step was choosing a stack and getting the project up and running.

Starting with Python and Django

I set about the tasks related to new project creation. For Python, that meant using django-admin to create a project, which gave me the basic framework of a server and the Django client to call it. I also created a Postgres database to hold the project’s data.

I spent the next month doing the basics: registration, login, 2FA support, logout, edit/delete profile, add/edit/delete account, add/edit/delete transaction, etc. – all the while, getting better at Python and Django. Things were going pretty well during this period, though most of the UI was pretty basic.

From Demo to “This Could Be Something”

One month later, I had a working demo. It wasn’t full-featured enough to call it a product yet, but it calculated net worth and had the basics of financial management completed. I gave a demo of it at work, to which one of my co-workers exclaimed, “I need this in my life!” That was a light bulb moment for me. I realized that this could become a real product that people would enjoy using.

Some time later, I had the idea to add receipt capture with parsing. Users would take a picture, I’d send it to Azure Document Intelligence to parse, and then use that data to create a transaction, with each line separated into a split. The problem was browser support for taking pictures; it was terrible.

This gave me an “aha” moment: I needed mobile apps for this venture. Python and Django are really good at websites, but they gave me no practical path to native mobile apps.

The Pivot: Choosing Tools That Fit the Product

I had been developing mobile apps for clients using React Native for years. I had seen React Native Web, but at that time, most codebases used one repo for React Native to implement the mobile apps and a separate one for React to implement the website. When I started looking more closely, I realized that React Native Web had matured to the point I could support both with a single codebase.

I was also tired of Django and its Djanky template engine. Sorry to any fans, but it is limited when it comes to the type of complex UI I was implementing. Python and Django tightly couple the client and server. The server receives the request, processes it, then hands it off to Django templates to generate a webpage. I’ve never been a fan of that model. I would much rather have a server that focuses on server-side responsibilities and a rich client that focuses on delivering the best possible user experience.

I could have kept using Python just for the server, but I wanted to use one language for both sides. That was TypeScript.

Porting to Node and React Native

With that decision made, I ported the entire codebase to Node Express and React Native. I chose the Expo React Native framework specifically because it already provided many UI elements I needed, including support for taking pictures.

It took another month, but my familiarity with both languages helped speed things along. The biggest new wrinkle was getting the UI to look good as both a website and a mobile app. Android and iOS devices come in an array of sizes and resolutions (not to mention landscape mode), so getting everything just right was (and still is) quite a challenge.

Designing with Intentional Constraints

I decided early on to just nix the whole “connect your bank to my app” type of setup and instead support importing data through bank export files and receipt capture. At first glance, this might seem counterintuitive. After all, in today’s world, convenience is king, and we regularly trade privacy and security for it.

I didn’t want my app to work that way.

Security and privacy needed to be paramount. We’ve all seen data breaches in the news; it happens! If systems like Plaid were to be compromised, I didn’t want my users’ data exposed as collateral damage. Avoiding direct bank connections became one part of a multi-pronged approach to security.

There was also a practical upside. Removing third-party systems like Plaid eliminated an entire class of ongoing costs. Lower overhead meant I could keep subscription pricing lower, without compromising on the core functionality I cared about.

Building a Data Architecture That Assumes the Worst

As mentioned in the previous section, data breaches are an all-too-common occurrence. While I put in place many layers of security, nothing today is ever 100% breach-proof. I designed my system so that if attackers were to breach my defenses and obtain any database records, the final line of defense would be strong encryption of data at rest.

The only unencrypted pieces of data available are an email address and a subscriber ID, which are required to identify a user during login or when handling subscription events. Everything else (from profiles to accounts to audit logs to transaction history records) is encrypted with strong algorithms, derived keys, and MACs (Message Authentication Codes – like a checksum that verifies the data hasn’t been tampered with).
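To make the combination of derived keys and integrity checks concrete, here is a minimal sketch using Node's built-in crypto module. It is not the Pennies-AI implementation: the choice of scrypt and AES-256-GCM, the `EncryptedField` shape, and the function names are all assumptions made for illustration. In this sketch the GCM authentication tag plays the role of the MAC.

```typescript
import { createCipheriv, createDecipheriv, randomBytes, scryptSync } from "node:crypto";

// Hypothetical stored shape: everything needed to decrypt later except the secret.
interface EncryptedField {
  salt: string; // base64, random salt used for key derivation
  iv: string;   // base64, 96-bit nonce for AES-GCM
  tag: string;  // base64, GCM authentication tag (acts as the MAC)
  data: string; // base64 ciphertext
}

// Derive a 256-bit key from a secret and a salt (scrypt is an assumption here).
function deriveKey(secret: string, salt: Buffer): Buffer {
  return scryptSync(secret, salt, 32);
}

function encryptField(plaintext: string, secret: string): EncryptedField {
  const salt = randomBytes(16);
  const iv = randomBytes(12);
  const cipher = createCipheriv("aes-256-gcm", deriveKey(secret, salt), iv);
  const data = Buffer.concat([cipher.update(plaintext, "utf8"), cipher.final()]);
  return {
    salt: salt.toString("base64"),
    iv: iv.toString("base64"),
    tag: cipher.getAuthTag().toString("base64"),
    data: data.toString("base64"),
  };
}

function decryptField(field: EncryptedField, secret: string): string {
  const key = deriveKey(secret, Buffer.from(field.salt, "base64"));
  const decipher = createDecipheriv("aes-256-gcm", key, Buffer.from(field.iv, "base64"));
  decipher.setAuthTag(Buffer.from(field.tag, "base64"));
  // final() throws if the ciphertext or the tag has been modified.
  const plain = Buffer.concat([
    decipher.update(Buffer.from(field.data, "base64")),
    decipher.final(),
  ]);
  return plain.toString("utf8");
}
```

Deriving a key for every field, as this sketch does, is deliberately slow; a system that needs to decrypt thousands of rows quickly would more plausibly derive a key once and reuse it for the session.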

Encryption That Doesn’t Sacrifice Performance

From the start, I’ve constructed the database schema to achieve encryption that is still performant. Even with encryption in place, over 7 million bytes of user data are typically loaded and rendered in less than three seconds. That’s not just the time to fetch data; it’s the time to decrypt, process, and display it in the account lists.

My own records serve as my baseline. I imported over 20 years’ worth of Quicken data specifically to make sure my system could handle real-world load.

Client-Side Search for Speed and Flexibility

Searching across this trove of data is lightning fast because it is all done on the client, with no round trips to the server to find anything. Search results are exportable to PDF, making it easy to find that tax data or how much you spent to remodel the kitchen five years ago.
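As a rough illustration of why client-side search can be so quick, the sketch below filters an in-memory array of already-decrypted transactions. The `Transaction` shape, the `SearchQuery` fields, and the function names are hypothetical and are not taken from the Pennies-AI codebase.

```typescript
// Hypothetical in-memory shape for a decrypted transaction.
interface Transaction {
  id: number;
  date: string;     // ISO date such as "2021-03-14", so string comparison sorts correctly
  payee: string;
  category: string;
  amount: number;   // integer cents, negative for spending
}

interface SearchQuery {
  text?: string; // matched against payee and category, case-insensitive
  from?: string; // inclusive ISO start date
  to?: string;   // inclusive ISO end date
}

// Filter entirely in memory: no network request is involved.
function searchTransactions(all: Transaction[], query: SearchQuery): Transaction[] {
  const needle = query.text?.trim().toLowerCase();
  return all.filter((t) => {
    if (query.from && t.date < query.from) return false;
    if (query.to && t.date > query.to) return false;
    if (!needle) return true;
    return (
      t.payee.toLowerCase().includes(needle) ||
      t.category.toLowerCase().includes(needle)
    );
  });
}

// Sum the matching rows, e.g. to answer "how much did the remodel cost?"
function totalCents(rows: Transaction[]): number {
  return rows.reduce((sum, t) => sum + t.amount, 0);
}
```

A linear scan like this is usually adequate for a single household's history; the trade-off is that the full data set has to be loaded and decrypted on the device first.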

A base set of categories is included, but they’re fully customizable; they can be added to, renamed, removed, or completely replaced, even after you have a lot of data. I recently renamed my categories from the old Quicken multi-level way, “Fixed Exp.: Medical: Premium,” to a flatter, “Medical Premium.” That change updated hundreds of transactions at once by automatically identifying and modifying all affected records. You can also search and edit individual results as needed.
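A bulk rename like the one described above can be sketched as a single pass over the records. This is only an illustration under assumed names (`renameCategory` and the row shape are hypothetical); the real system also has to persist and re-encrypt the changed rows, which is omitted here.

```typescript
// Rename a category across all rows, returning new rows plus a count of changes.
// Rows that do not match are returned untouched, so unchanged data is not rewritten.
function renameCategory<T extends { category: string }>(
  rows: T[],
  oldName: string,
  newName: string,
): { rows: T[]; changed: number } {
  let changed = 0;
  const updated = rows.map((row) => {
    if (row.category !== oldName) return row;
    changed += 1;
    return { ...row, category: newName };
  });
  return { rows: updated, changed };
}

// Example usage with the rename mentioned in the text.
const before = [
  { id: 1, category: "Fixed Exp.: Medical: Premium" },
  { id: 2, category: "Groceries" },
  { id: 3, category: "Fixed Exp.: Medical: Premium" },
];
const result = renameCategory(before, "Fixed Exp.: Medical: Premium", "Medical Premium");
console.log(`${result.changed} transactions updated`);
```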

The Reality Behind “Simple” Features

Let’s take a look at a feature that sounds simple on the surface, but ends up being anything but. I decided that bank import files would be better than bank connections. All of the banks I have tried (or have had my friends try) provide exports in CSV format. It didn’t take too long to get that first one to work. Parse the first line of headers to find out what the columns are, then loop through the data rows. Easy!

Then I tried another bank’s file: completely different headers for the same types of data. Some have one column and use positive or negative numbers for debits and credits, while others break those out to separate columns. Date/time formats can vary wildly between institutions. Some files wrap every value in double quotes, some use none at all, and others only quote fields that contain commas. And that’s just scratching the surface! The variations go on and on.
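The quoting variations alone rule out a plain `split(",")`. Below is a minimal sketch of a quote-aware splitter for a single CSV line; `splitCsvLine` is a hypothetical name, and the sketch deliberately ignores cases such as line breaks inside quoted fields.

```typescript
// Split one CSV line into fields, handling unquoted values, fully quoted values,
// and values quoted only because they contain commas. A doubled quote ("")
// inside a quoted field is treated as a literal quote character.
function splitCsvLine(line: string): string[] {
  const fields: string[] = [];
  let current = "";
  let inQuotes = false;

  for (let i = 0; i < line.length; i++) {
    const ch = line.charAt(i);
    if (inQuotes) {
      if (ch === '"' && line.charAt(i + 1) === '"') {
        current += '"'; // escaped quote
        i++;            // skip the second quote of the pair
      } else if (ch === '"') {
        inQuotes = false; // closing quote
      } else {
        current += ch;
      }
    } else if (ch === '"') {
      inQuotes = true; // opening quote
    } else if (ch === ",") {
      fields.push(current); // field boundary
      current = "";
    } else {
      current += ch;
    }
  }
  fields.push(current); // last field has no trailing comma
  return fields;
}
```

For production use, a maintained CSV library is generally a safer choice than a hand-rolled parser; the sketch is only meant to show where the naive approach breaks.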

Designing for Real-World Inconsistency

Supporting this level of inconsistency meant building import logic that could adapt to almost any reasonable variation without forcing the user to manually fix files beforehand. The system now handles most bank import formats I’ve encountered, but getting there took a significant amount of work, far more than the original “just parse a CSV” assumption would suggest.
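One way to absorb differing headers and debit/credit layouts is to map each bank's column names onto a small set of canonical fields and then normalize amounts to a single signed value. The sketch below assumes a hypothetical alias table and helper names; the actual aliases and rules Pennies-AI uses are not described in this article.

```typescript
type Field = "date" | "description" | "amount" | "debit" | "credit";

// Hypothetical alias table: known header spellings for each canonical field.
const HEADER_ALIASES: Record<Field, string[]> = {
  date: ["date", "posted date", "transaction date"],
  description: ["description", "payee", "memo"],
  amount: ["amount"],
  debit: ["debit", "withdrawal"],
  credit: ["credit", "deposit"],
};

type ColumnMap = Partial<Record<Field, number>>;

// Map canonical field names to column indexes based on the header row.
function mapHeaders(headers: string[]): ColumnMap {
  const map: ColumnMap = {};
  headers.forEach((raw, index) => {
    const name = raw.trim().toLowerCase();
    for (const field of Object.keys(HEADER_ALIASES) as Field[]) {
      if (map[field] === undefined && HEADER_ALIASES[field].includes(name)) {
        map[field] = index;
      }
    }
  });
  return map;
}

// Parse values such as "1,234.56", "$12.50", "-4.50", or "(4.50)" into integer cents.
function toCents(raw: string | undefined): number {
  const text = (raw ?? "").trim();
  if (text === "") return 0; // an empty cell counts as zero
  const negative = text.startsWith("-") || (text.startsWith("(") && text.endsWith(")"));
  const digits = text.replace(/[^0-9.]/g, "");
  const value = Number(digits);
  if (digits === "" || Number.isNaN(value)) throw new Error(`Unrecognized amount: ${text}`);
  const cents = Math.round(value * 100);
  return negative ? -cents : cents;
}

// Produce one signed amount whether the file has a single amount column
// or separate debit and credit columns.
function signedAmountCents(row: string[], cols: ColumnMap): number {
  if (cols.amount !== undefined) return toCents(row[cols.amount]);
  const debit = cols.debit !== undefined ? Math.abs(toCents(row[cols.debit])) : 0;
  const credit = cols.credit !== undefined ? Math.abs(toCents(row[cols.credit])) : 0;
  return credit - debit;
}
```

Storing amounts as integer cents sidesteps floating-point drift in later sums, which matters for the precise calculations mentioned earlier.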

This is the reality behind features that feel simple when they’re done right: the complexity doesn’t disappear, it just gets absorbed by the system so the user never has to see it.

Wrapping Up, For Now

Building an app and a website is a significant commitment. Database design, stack selection, and architecture decisions have long-lasting consequences, and supporting both web and mobile interfaces adds another layer of complexity. Even with many years of experience building enterprise systems, wearing every hat (from engineering to operations) was challenging.

What began as a way to learn Python ultimately became a production system written in an entirely different language. The early fun of building features eventually turned into long nights debugging edge cases and refining systems to behave correctly under real-world conditions. In the end, it was worth every minute.

If I were starting today, AI and agentic tools would undoubtedly accelerate parts of the process. But good system design would still be essential. Someone still has to define what should be built, how it should behave, and where the risks lie.

Looking Ahead

In the remaining parts of this series, I’ll move beyond the foundational architecture covered here and focus on what came next: turning the system into a real product, operating it in production, and examining how newer AI tooling has influenced both the development process and the evolution of the codebase over time.

By the way, the project officially went to production on January 24th, 2026. Thanks for staying with me through this journey. There’s still a lot more to cover, and I hope you’ll tune in for Parts 2, 3, and 4.

Product: Pennies-AI

Website: https://www.pennies-ai.com/

Facebook: https://www.facebook.com/PenniesAI

YouTube Tutorials: https://www.youtube.com/@Pennies-AI

More From John Boardman
