Enterprise investment in Artificial Intelligence has moved from experimentation to scaled implementation, and for many organizations the next challenge is understanding what AI actually costs to build, operate, and scale.

For CTOs and engineering leaders evaluating their next budget cycle, understanding the total cost of ownership (TCO) is now a critical component of strategic planning. The gap between what organizations expect to spend and what they actually spend remains one of the most common sources of misalignment we see in delivery planning.

This report synthesizes AI cost and spending data from leading global research firms to provide a clear view of:

- AI software development cost ranges by solution type

- Total Cost of Ownership (TCO), including hidden lifecycle costs and maintenance

- Enterprise AI spending trends and budget growth trajectories

- Build-versus-buy dynamics and the hybrid architectures shaping enterprise AI adoption

- AI-accelerated delivery economics and when agentic workflows pay off vs when they fail

- Directional Return on Investment (ROI) benchmarks and common failure points between pilot and production

From October 2025 through March 2026, our research team analyzed AI spending and cost data across more than 100 enterprise organizations. We surveyed CTOs and VPs of Engineering, analyzed project delivery data, and reviewed more than 15 industry reports from organizations including Gartner, McKinsey, a16z, and Menlo Ventures. We also reference DORA’s State of AI-Assisted Software Development research and subsequent analysis.

How Keyhole Software Uses AI Cost Data

At Keyhole Software, we apply these AI cost and spending benchmarks in real delivery planning with CTOs and engineering leaders, using them to structure modernization roadmaps, evaluate build-versus-buy decisions, and assess where AI integration delivers measurable return versus where it introduces unmanaged cost. The data helps our technical leaders:

- Structure total cost of ownership (TCO) models that account for hidden costs like technical debt and maintenance.

- Evaluate AI tool and platform vendor claims against independent market data.

- Define realistic budgets and timelines for AI pilots and production systems.

- Justify buy-first strategies where the data shows clear economic advantages.

Major forecasts show AI spending growth is driven heavily by infrastructure and services, but leading analysts emphasize that outcomes are constrained as much by human capital and organizational processes as by model capability.

With 100% U.S.-based senior consultants averaging 17+ years of experience, we help organizations distinguish genuine technology shifts from temporary hype cycles and architect systems that can adopt these trends maturely without requiring complete platform rewrites.

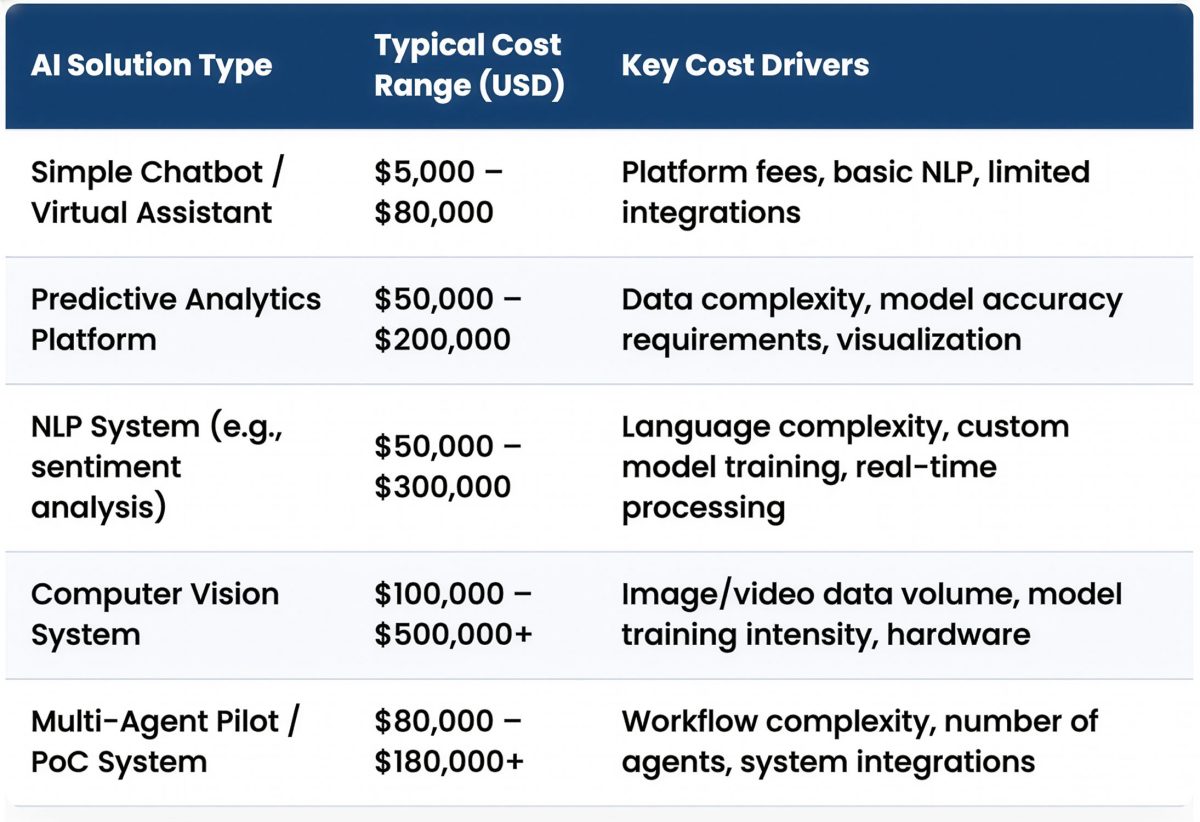

How Much Does AI Software Development Cost?

Estimating the cost of an AI project requires a granular look at its various components. The initial development cost of an AI solution varies significantly based on its complexity, the sophistication of the models involved, and the volume and quality of data required.

Typical Cost Ranges by AI Solution Type

Simple proof-of-concept projects can start in the low thousands to tens of thousands of dollars, while complex, enterprise-grade systems can run into the millions.

| AI Solution Type | Typical Cost Range (USD) | Key Cost Drivers |
| --- | --- | --- |
| Simple Chatbot / Virtual Assistant | $5,000 – $80,000 | Platform fees, basic NLP, limited integrations |
| Predictive Analytics Platform | $50,000 – $200,000 | Data complexity, model accuracy requirements, visualization |
| NLP System (e.g., sentiment analysis) | $50,000 – $300,000 | Language complexity, custom model training, real-time processing |
| Computer Vision System | $100,000 – $500,000+ | Image/video data volume, model training intensity, hardware |
| Multi-Agent Pilot / PoC System | $80,000 – $180,000+ | Workflow complexity, number of agents, system integrations |

Methodology Note: Cost ranges were derived from publicly available 2025–2026 pricing estimates published by several software development consultancies and industry reports. [4][5][6][7][8] The ranges represent typical project scopes for mid-market and enterprise organizations.

Caveat: These ranges represent estimates and reflect typical project scopes. Actual costs can vary substantially based on data readiness, regulatory requirements, the specific vendor or team engaged, and the level of required customization.

What This Means: Interpreting AI Development Cost Ranges

- A wide cost spectrum exists across AI solution types. Even a “simple” chatbot can cost up to $80,000 when enterprise-grade security, compliance, and integrations are required, while computer vision and multi-agent systems regularly reach six figures.

- The primary cost drivers are not just the algorithms themselves but the surrounding components: data complexity, integration requirements, and the need for real-time processing capabilities.

- AI-assisted development is beginning to influence these cost ranges. Architect-led teams using modern AI coding tools and automated testing pipelines can often deliver complex systems significantly faster than traditional development approaches.

- The cost ranges show that there is no one-size-fits-all price for AI. Each project must be scoped based on its specific functional and non-functional requirements.

In Practice: Scoping AI Projects and Budget Expectations

We use these cost ranges to help clients establish initial budget expectations. This data provides a realistic baseline for discussions about project scope and complexity.

In our experience, the final cost is most influenced by the quality of the existing data and the complexity of integrating the AI solution into legacy systems. We advise clients to view these ranges as a starting point; a detailed discovery phase is always necessary to produce a precise estimate.

For a global automotive insurance technology client, Keyhole architected and developed an AI-powered claims automation platform[17] that analyzes accident photos, detects vehicle damage, and generates instant repair estimates. The system involved React, Java/Spring Boot microservices on Google Cloud, and Python-based AI models for image recognition, with up to 50% of claims now automatically approved through the application’s AI system.

- The initial scoping conversation started with cost ranges similar to the computer vision row above.

- After discovery, the actual scope expanded significantly due to the need for multi-country, multi-provider integration, real-time AI inference pipelines, and compliance across multiple regulatory environments.

The cost table gets the conversation started; the discovery phase gets the budget right.

The Hidden Bill: Total Cost of Ownership for AI

For many organizations, the largest costs of AI systems emerge after the initial deployment. The AI total cost of ownership (TCO) is shaped less by model training expenses and more by the operational lifecycle that follows: maintenance, data management, integration work, and compliance obligations that accumulate over time.

Survey data supports this pattern. A 2025 survey reported by CIO.com found that a majority of organizations misestimate AI costs by more than 10%, with nearly a quarter underestimating costs by 50% or more.[9]

These overruns rarely originate from model costs alone; they typically emerge from indirect operational expenses that become visible only after systems move into production.

Major Drivers of AI Total Cost of Ownership

The following cost categories represent the most common drivers of AI total cost of ownership across enterprise delivery environments.

| Hidden Cost Category | Financial Impact | Primary Driver | Keyhole Observation |
| --- | --- | --- | --- |
| Post-Deployment Lifecycle Work (Maintenance + Enhancement + Support) | Often the majority of lifecycle cost; some estimates place it near 65%[4][11] | Enhancements, adaptation, regression testing, platform changes, compliance, incident response | Largest component of TCO; underestimated because it is distributed across many teams and budgets |
| Data Preparation & Cleaning | Often dominates early development effort; some estimates place data preparation near 60-80% of project effort[9][11] | Poor data quality, lack of governance | Most underestimated phase |
| Annual Maintenance | 15-25% of initial build cost[4] | Model drift, updates, bug fixes | Recurring operational expense |
| Compliance & Security (Regulated) | $10k – $100k annually[7][12] | GDPR, HIPAA, industry mandates | Non-negotiable cost in many sectors |

Methodology Note: AI software development cost data points were synthesized from surveys and industry reports published between October 2025 and January 2026. Lifecycle and maintenance observations are drawn from software engineering research and enterprise delivery experience. Percentages represent directional benchmarks and vary widely depending on architecture quality, regulatory environment, and operational maturity.

Caveat: Hidden cost percentages are averages and directional estimates. Organizations with mature data infrastructure and automation pipelines may experience lower lifecycle costs, while those in heavily regulated sectors (healthcare, finance) will see compliance costs at the higher end of the range.

What This Means: Implications for AI Project Planning

AI software development costs often exceed initial estimates once post-deployment work is factored in.

Over a 3 to 5 year horizon, post-deployment lifecycle work such as maintenance, enhancements, compliance, regression testing, platform upgrades, and operational support often becomes the dominant portion of total spend. This is particularly true when delivery fundamentals like automation, modularity, and observability are weak.

Some studies estimate that this lifecycle work can account for up to roughly two-thirds of total AI system cost, although the exact percentage varies significantly depending on architecture quality and delivery discipline. Systems built with strong automation, modular design, and CI/CD pipelines tend to experience lower long-term maintenance overhead than those built without those foundations.

Data preparation is another major cost driver in AI initiatives. Industry surveys and practitioner reports consistently show that data preparation and ongoing data management consume a substantial share of AI development effort, though exact percentages vary by organization, data maturity, and project scope. One widely cited industry survey found that data scientists spend 60% of their time cleaning and organizing data and another 19% collecting datasets before modeling can begin.[25]

The practical takeaway is consistent: data readiness is usually the gating factor for AI scope, timeline, and cost.

Maintenance should also be treated as a recurring operational budget rather than a one-time expense. A common enterprise planning heuristic is to reserve roughly 15–25% of the initial build cost annually for maintenance and support, though actual costs depend heavily on system complexity, regulatory obligations, and how rapidly the product evolves. By this metric, a project with an initial build cost of $200,000 will require an additional $30,000 to $50,000 every year to operate effectively.
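
The reserve arithmetic above can be sketched in a few lines. This is a minimal illustration that assumes the 15 to 25% planning range from the text; the function name `annual_maintenance_reserve` and its parameters are hypothetical, and the rates should be replaced with figures from your own discovery work.

```python
def annual_maintenance_reserve(build_cost, low_rate=0.15, high_rate=0.25):
    """Return the (low, high) yearly maintenance reserve in USD.

    The default rates are the planning heuristic quoted in the text,
    not a guarantee; real rates depend on complexity, regulation,
    and how quickly the product evolves.
    """
    if build_cost < 0:
        raise ValueError("build_cost must be non-negative")
    if not 0 <= low_rate <= high_rate:
        raise ValueError("rates must satisfy 0 <= low_rate <= high_rate")
    return build_cost * low_rate, build_cost * high_rate


# The $200,000 example from the text.
low, high = annual_maintenance_reserve(200_000)
print(f"Reserve ${low:,.0f} to ${high:,.0f} per year")
```

Keeping the rates as parameters makes it easy to model a heavily regulated system at the upper end of the range and a stable internal tool at the lower end.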

Organizations adopting AI-accelerated development practices are increasingly mitigating these risks by automating portions of the development lifecycle, improving code quality earlier, and reducing long-term technical debt through automated testing and architecture validation.

In Practice: How These Costs Appear in Real AI Projects

We encourage clients to think beyond the initial build budget and plan for ongoing lifecycle costs from the start. In our experience, total spend over the first three years often lands at two to three times the initial development cost once maintenance, enhancements, compliance, and operational support are included. When that reality is surfaced early in planning, it reduces the mid‑stream budget shocks that derail otherwise successful AI initiatives.
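
One way to surface that multiple early is a simple multi-year model. The sketch below is illustrative only: the class `LifecycleAssumptions`, the function `multi_year_tco`, and every dollar figure in the example are hypothetical placeholders rather than data from a real engagement.

```python
from dataclasses import dataclass


@dataclass
class LifecycleAssumptions:
    """Hypothetical yearly cost inputs; replace with discovery-phase figures."""
    maintenance_rate: float       # share of build cost per year, e.g. 0.15 to 0.25
    enhancements_per_year: float  # new features and adaptation work, USD
    compliance_per_year: float    # audits and security reviews, USD
    operations_per_year: float    # hosting, usage fees, and support, USD


def multi_year_tco(build_cost, assumptions, years=3):
    """Return (total_spend, multiple_of_build) over the planning horizon."""
    if build_cost <= 0:
        raise ValueError("build_cost must be positive")
    yearly = (
        build_cost * assumptions.maintenance_rate
        + assumptions.enhancements_per_year
        + assumptions.compliance_per_year
        + assumptions.operations_per_year
    )
    total = build_cost + yearly * years
    return total, total / build_cost


# Illustrative inputs only.
example = LifecycleAssumptions(
    maintenance_rate=0.20,
    enhancements_per_year=30_000,
    compliance_per_year=25_000,
    operations_per_year=25_000,
)
total, multiple = multi_year_tco(200_000, example)
print(f"3-year spend: ${total:,.0f} ({multiple:.2f}x the initial build)")
```

Separating the yearly inputs keeps each assumption visible, so a reviewer can challenge one line item without rebuilding the whole estimate.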

For a St. Louis healthcare technology provider, Keyhole led a data platform modernization on AWS[18] that included streaming integration, .NET services, and Angular front-end components. The compliance and data governance requirements drove a meaningful portion of the total engagement cost, but investing in that architecture upfront positioned the client to add new data-driven and AI capabilities without rebuilding the platform foundation.

In our experience, healthcare and financial services clients who treat compliance as a first-class architectural concern consistently spend less on remediation in Year 2 and beyond.

Technical debt is another major contributor to total cost of ownership. In a recent AI-accelerated COBOL modernization[19] for a wholesale food distribution client, Keyhole used its EnterpriseGPT generative AI tool to assist migration from COBOL to Spring Batch, reducing manual effort by approximately 20–30%. A key driver of that efficiency gain was investing in clean architecture and CI/CD pipelines from the start. Those foundations made future changes cheaper and safer, instead of pushing hidden work into “later.”

Teams that skip this upfront work often discover in Year 2 that maintenance and fixes cost more than whatever they saved in Year 1.

We increasingly see similar productivity gains across modernization projects where AI coding tools are used within architect-led workflows, allowing small senior teams to deliver complex system replacements significantly faster than traditional development timelines.

Understanding AI development cost requires understanding both what organizations spend and how modern engineering practices influence delivery speed and long-term total cost of ownership.

AI-Accelerated Delivery Changes the Economics of Software Development

In 2026, the cost question is inseparable from the delivery question. Many organizations can purchase AI capabilities, but the real challenge is integrating those capabilities into production workflows with the right architecture, testing, security controls, and cost observability.

AI-accelerated delivery is not simply faster coding. It is the combination of AI tooling with disciplined engineering systems that translates productivity gains into shorter cycle times and lower three-to-five-year total cost of ownership.

The cost of developing AI software is a multi-faceted issue involving talent, infrastructure, data readiness, and significant hidden expenses that emerge after initial deployment. Decision-makers are now asking how to sequence investments, how to evaluate build-versus-buy tradeoffs, and how to implement cost governance without introducing unnecessary friction for development teams.

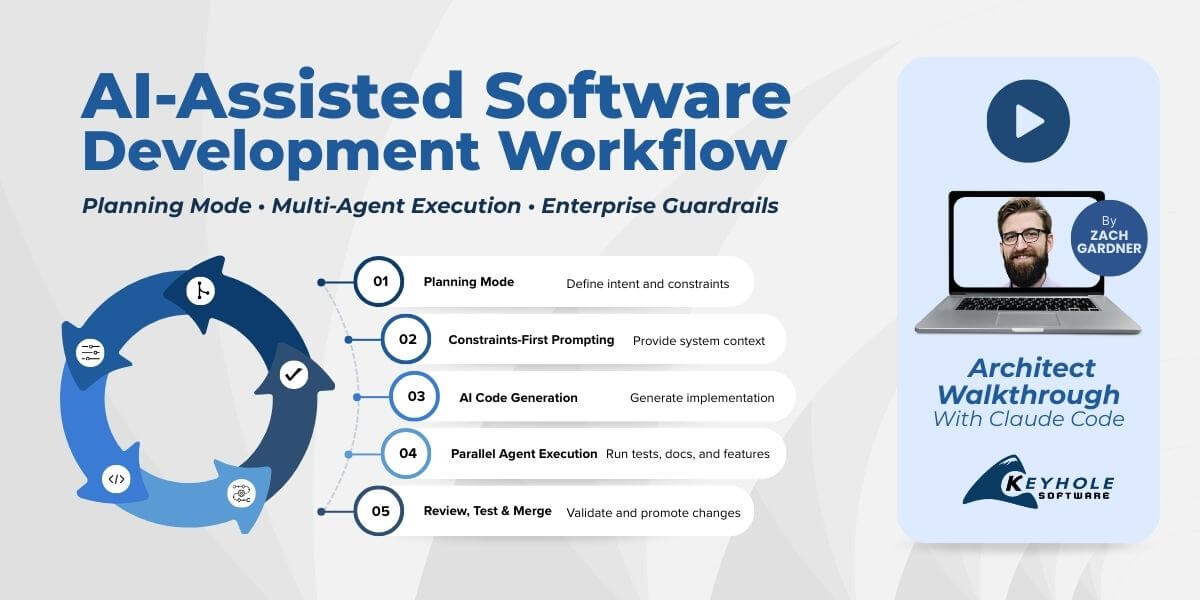

A critical shift occurring in enterprise software delivery is the use of AI-accelerated development workflows. Architect-led senior teams are increasingly using AI coding tools, agentic development workflows, and automated testing pipelines to compress delivery timelines while maintaining quality and governance.

When implemented within disciplined engineering workflows, AI tooling can improve developer productivity and reduce certain development timelines. However, recent DORA research underscores that AI adoption is not simply a tools problem; it is a systems and organizational problem. These gains only translate into faster delivery when organizations maintain strong engineering fundamentals such as testing automation, CI/CD maturity, and architectural discipline.

Research on AI Developer Productivity and Delivery Performance

Research also shows that AI coding assistants can significantly improve developer productivity on well-defined tasks, but the broader impact on software delivery depends heavily on engineering discipline and workflow integration.

Controlled experiments have shown that developers using AI coding assistants completed programming tasks up to 55% faster than those without assistance.[22] At the same time, industry delivery research indicates that AI adoption does not automatically improve delivery performance unless teams maintain strong practices such as small batch sizes, automated testing, and mature CI/CD pipelines.

Several recent studies help quantify how AI tools are affecting engineering productivity and delivery timelines.

| AI Delivery Research Finding | Metric | Implication for Delivery |
| --- | --- | --- |
| Developers using AI coding assistants completed programming tasks faster in controlled studies | Up to 55% faster task completion[22] | AI improves engineering productivity on well-scoped development tasks |
| Developers now regularly use AI tools in daily work | 75%+ of developers report using AI for at least one daily responsibility[23] | AI tooling is rapidly becoming standard within engineering workflows |
| Teams adopting AI report measurable productivity improvements | More than one-third report moderate to extreme productivity gains[23] | AI tools can accelerate development when integrated into existing delivery processes |
| Organizations successfully scaling AI pilots move faster to production | ~90 days from pilot to full implementation[13] | Workflow integration and delivery discipline drive AI ROI |
| Enterprises struggling to scale AI pilots report much longer timelines | 9+ months from pilot to production[13] | Weak delivery systems slow AI adoption and inflate costs |
| Analysts expect significant cancellation rates for poorly governed agentic AI projects | 40%+ projected cancellation by 2027[24] | Agentic AI requires governance and cost controls |

What This Means: AI Productivity vs Delivery System Maturity

The research suggests that AI tools change the economics of software delivery, but not in the simplistic way many organizations expect.

- First, AI improves task-level productivity, especially in areas like code generation, documentation, and automated test creation. These improvements allow experienced engineers to move faster through routine implementation work.

- Second, productivity gains alone do not guarantee faster product delivery. Industry delivery research shows that teams adopting AI without strong engineering practices can experience lower stability and throughput, particularly when generated code increases complexity or introduces gaps in testing. We increasingly see teams arrive after “AI-assisted” builds that don’t function as intended: codebases assembled quickly with AI tools but lacking coherent architecture, test coverage, and operational readiness.

- Third, organizations that successfully scale AI initiatives focus less on the tools themselves and more on delivery readiness. Research benchmarking shows that high-performing organizations move from pilot to production in approximately 90 days, while many enterprises require nine months or longer to achieve the same transition.

AI adoption also introduces new cost dynamics. Because many AI services operate on usage-based pricing models, charging per token, inference request, or API call, the financial impact of AI systems increasingly depends on how they are architected, monitored, and scaled in production environments. Industry guidance increasingly recommends that organizations treat AI cost observability as a core engineering capability, monitoring metrics such as cost per inference and token usage alongside traditional delivery performance indicators.[5]
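
As a rough illustration of what cost observability can look like, the sketch below turns a log of token counts into cost per inference. It assumes a per-million-token price for input and output tokens; the prices, the `TokenPricing` class, and the function names are hypothetical and do not reflect any specific provider's rate card.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Hypothetical USD prices per one million tokens."""
    input_per_million: float
    output_per_million: float


def request_cost(input_tokens, output_tokens, pricing):
    """Cost in USD of a single request under per-token pricing."""
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


def usage_summary(requests, pricing):
    """Aggregate a list of (input_tokens, output_tokens) pairs."""
    costs = [request_cost(i, o, pricing) for i, o in requests]
    total_cost = sum(costs)
    return {
        "requests": len(costs),
        "total_tokens": sum(i + o for i, o in requests),
        "total_cost_usd": total_cost,
        "cost_per_inference_usd": total_cost / len(costs) if costs else 0.0,
    }


# Illustrative prices and request log only.
pricing = TokenPricing(input_per_million=3.00, output_per_million=15.00)
log = [(1_200, 300), (800, 150), (2_500, 600)]
print(usage_summary(log, pricing))
```

In a production setting these figures would typically be emitted alongside latency and error metrics so that cost regressions show up in the same dashboards as reliability regressions.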

The latest DORA research on AI-assisted development emphasizes that AI primarily amplifies an organization’s existing delivery capabilities. Teams with strong baselines in automated testing, CI/CD, and change management see AI improvements reflected in throughput metrics like deployment frequency and lead time for changes, while teams lacking those capabilities often experience increased instability despite faster coding.[26]

The difference is rarely the model itself. The difference is the delivery system around it.

In Practice: Architect-Led AI-Accelerated Delivery

In real delivery environments, AI-accelerated development reduces cost and timeline risk when organizations combine AI tooling with disciplined engineering practices. Successful teams typically implement key controls from the start.

- Architecture-first delivery: Production architecture is defined before implementation begins, including service boundaries, data flows, security constraints, and operational monitoring. This ensures that development work moves directly toward a production-ready system rather than a proof-of-concept prototype.

- Automation-first quality: Automated testing, linting, CI policies, and structured code review become mandatory guardrails. AI-generated code increases velocity, but automation ensures that increased speed does not introduce hidden technical debt.

- Delivery performance metrics: High-performing teams measure delivery performance using metrics such as cycle time, deployment frequency, and change failure rate alongside AI productivity metrics.
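
The delivery metrics named in the last control can be computed from basic deployment records. The sketch below is a minimal example under stated assumptions: the `Deployment` record, its fields, and the definition of cycle time as commit-to-production time are hypothetical simplifications, and real pipelines usually pull this data from CI/CD and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deployment:
    commit_time: datetime   # when the change was committed
    deploy_time: datetime   # when it reached production
    caused_failure: bool    # True if it needed a rollback or hotfix


def delivery_metrics(deployments, window_days):
    """Summarize cycle time, deployment frequency, and change failure rate."""
    if not deployments or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")
    cycle_hours = [
        (d.deploy_time - d.commit_time).total_seconds() / 3600
        for d in deployments
    ]
    failures = sum(1 for d in deployments if d.caused_failure)
    return {
        "median_cycle_time_hours": median(cycle_hours),
        "deployments_per_week": len(deployments) * 7 / window_days,
        "change_failure_rate": failures / len(deployments),
    }


# Illustrative records only.
start = datetime(2026, 1, 5, 9, 0)
sample = [
    Deployment(start, start + timedelta(hours=2), False),
    Deployment(start, start + timedelta(hours=4), False),
    Deployment(start, start + timedelta(hours=6), True),
    Deployment(start, start + timedelta(hours=8), False),
]
print(delivery_metrics(sample, window_days=14))
```

Tracking these numbers before and after introducing AI tooling gives a baseline for judging whether faster coding is actually translating into faster, stable delivery.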

In some engagements, we are brought in after a rapidly assembled, AI-built prototype or “vibe-coded” application fails to meet reliability or compliance requirements. Our work starts by re-establishing architecture, test baselines, and deployment patterns so the system can be safely taken to production.

In a recent AI-accelerated platform modernization[20] engagement for a Kansas City insurance provider, architect-led teams compressed an estimated 18–24 month effort into roughly five months of delivery, cutting the AI software development cost by more than 75%. Our approach treated AI as an acceleration layer within architect-led delivery systems, not as a replacement for sound engineering practices. At the end of the engagement, the AI total cost of ownership was significantly less than budgeted.

In Practice: Managing AI Usage Costs

Because AI software development costs often include usage-based pricing, engineering teams increasingly manage cost alongside reliability and performance.

Typical operational controls include:

- monitoring cost per inference and token usage trends

- detecting anomalies in request volume or token consumption

- establishing usage thresholds and budget guardrails

- measuring cost relative to delivered business value
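
Two of the controls above, budget guardrails and anomaly detection, can be sketched with nothing more than daily spend totals. This is a simplified example: the function `check_daily_spend`, the z-score threshold of 3.0, and the sample figures are assumptions chosen for illustration, and production systems often use more robust anomaly detection.

```python
from statistics import mean, stdev


def check_daily_spend(history, today, daily_budget, z_threshold=3.0):
    """Return alert labels for today's AI usage spend.

    history: prior daily spend values in USD.
    today: today's spend in USD.
    daily_budget: hard guardrail in USD.
    z_threshold: how many standard deviations above the baseline
        counts as an anomaly (an assumed default, tune per workload).
    """
    alerts = []
    if today > daily_budget:
        alerts.append("over_budget")
    if len(history) >= 2:
        baseline = mean(history)
        spread = stdev(history)
        if spread > 0 and (today - baseline) / spread > z_threshold:
            alerts.append("anomaly")
    return alerts


# Illustrative spend figures only.
recent = [100, 110, 90, 105, 95]
print(check_daily_spend(recent, today=180, daily_budget=150))
print(check_daily_spend(recent, today=104, daily_budget=150))
```

The budget check catches absolute overspend, while the z-score check catches sudden jumps that are still under budget, such as a runaway agent loop early in the month.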

Common AI usage cost drivers include:

- cost per inference request for hosted models

- token-based pricing for large language models

- vector database storage and retrieval operations used in RAG systems

- third-party API calls triggered inside agentic workflows

- per-seat licensing for AI development tools and copilots

These costs can appear small at the individual request level but scale rapidly as systems move from pilot environments to production workloads. A prototype that processes a few hundred prompts per day may generate minimal cost, while the same system serving thousands of users or automated workflows can introduce substantial recurring expenses.
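
To make the scaling effect concrete, the sketch below projects monthly usage cost for a pilot and for a production workload. All inputs are hypothetical: the per-request cost, user counts, and request volumes are placeholders, and `monthly_usage_cost` is an illustrative helper rather than a standard formula.

```python
def monthly_usage_cost(requests_per_day, avg_cost_per_request, days=30):
    """Project monthly usage spend in USD from daily volume and unit cost."""
    if requests_per_day < 0 or avg_cost_per_request < 0 or days <= 0:
        raise ValueError("inputs must be non-negative and days positive")
    return requests_per_day * avg_cost_per_request * days


# Hypothetical unit cost: two cents per request.
unit_cost = 0.02

# Pilot: a few hundred prompts per day.
pilot = monthly_usage_cost(300, unit_cost)

# Production: 5,000 users averaging 20 requests per day each.
production = monthly_usage_cost(5_000 * 20, unit_cost)

print(f"Pilot:      ${pilot:,.0f} per month")
print(f"Production: ${production:,.0f} per month")
print(f"Growth:     {production / pilot:,.0f}x")
```

The unit cost is identical in both cases; the difference comes entirely from volume, which is why request growth belongs on the same dashboard as cost per inference.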

For this reason, many organizations now treat AI usage metrics such as cost per inference, cost per token, and request volume growth as core operational indicators alongside traditional system metrics like latency, throughput, and error rates.

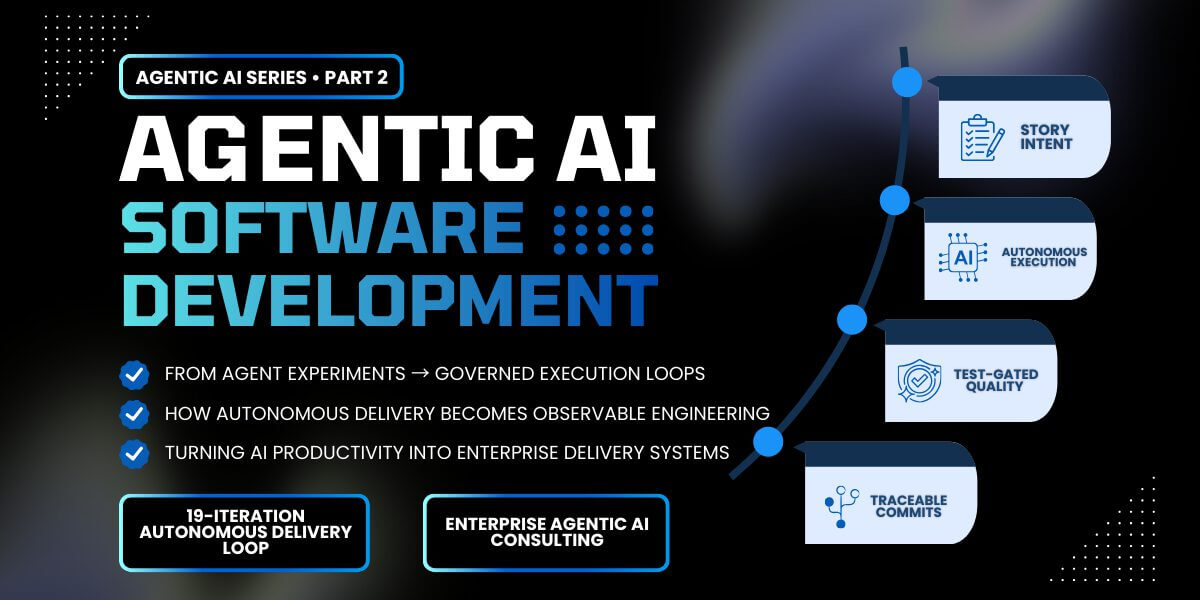

Where Agentic AI Fits in the Delivery Model

Agentic AI systems are an emerging architectural pattern where AI agents can plan and execute multi-step workflows across tools, APIs, and enterprise services. When applied to clearly defined workflows, these systems can compress delivery timelines and automate multi-step development and operational tasks.

But analysts also warn that many agentic initiatives are misapplied or “agent-washed.” Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls.[24]

Additionally, agentic architectures introduce new operational considerations. Because these systems often rely on consumption-based services such as API calls, token usage, and inference requests, they can create unpredictable costs if usage is not carefully monitored. Industry analysts have also noted that many so-called agentic solutions are simply rebranded assistants without true autonomy or orchestration capabilities.

What This Means: When Agentic AI Actually Creates Value

Agentic AI can meaningfully accelerate software delivery when applied to clearly scoped workflows with well-defined success criteria. The technology is most effective when organizations treat it as an extension of disciplined engineering systems rather than as a replacement for them.

Because agentic systems can trigger downstream actions across multiple services, poorly governed implementations can create unpredictable behavior, cost growth, or operational risk.

In Practice: Where Agentic AI Fits in Enterprise Software Delivery

Agentic AI represents one of the newest extensions of AI-accelerated software delivery models. While the broader shift toward AI-assisted development is already changing the economics of software delivery, agentic systems introduce the possibility of coordinating multi-step workflows across tools and services.

Many organizations are beginning to introduce agentic capabilities gradually by using agentic AI development tools within existing software delivery workflows. In this model, AI agents can assist with tasks such as planning implementation steps, generating code, or coordinating development activities across tools while engineers remain in the loop to review and validate outputs.

This supervised approach allows teams to gain productivity benefits from agentic tooling without disrupting the existing software development lifecycle. Development work can move faster, but architectural governance, testing standards, and deployment controls remain intact. In environments with strong architecture, automated testing, and mature CI/CD practices, these approaches can allow small senior teams to deliver production-ready systems significantly faster than traditional development models.

As teams adopt these workflows, they often discover that AI-assisted delivery can accelerate development while still reinforcing disciplined engineering practices. Rather than replacing engineers, these tools amplify the productivity of experienced teams and allow organizations to introduce AI capabilities incrementally into existing delivery systems with lower operational risk.

AI Development Trends: Spending, Build vs. Buy, and ROI

The economics of AI development are now being shaped not only by technology capability, but by how organizations integrate AI into their software delivery systems. Organizations are investing heavily in both AI infrastructure and software platforms while simultaneously experimenting with delivery models that can move AI initiatives from pilot to production faster.

Enterprise spending on artificial intelligence continues to accelerate as organizations scale generative AI, machine learning, and agentic systems into production environments. The market has moved beyond isolated experiments and into a phase of scaled investment across software, hardware, and services.

This shift is reflected in IT budgets. A 2025 survey of 100 enterprise CIOs by Andreessen Horowitz (a16z) found that enterprise leaders expect LLM and generative AI budgets to grow by roughly 75% over the next year.[3]

Enterprise AI Spending and Budget Growth

| AI Trend Metric | Value | Primary Driver | Keyhole Observation |
| --- | --- | --- | --- |
| Global AI Spending (2026 Forecast) | $2.52 Trillion[1] | Enterprise adoption, service integration | Market entering early maturity |
| AI Use Cases Purchased Vs. Built Internally (2025) | 24% Build / 76% Buy[2] | High TCO of custom builds, mature SaaS market | Pragmatic shift to buying |
| Task-specific GenAI Tools Not Reaching “Successful Implementation” | ~95%[13] | Lack of production readiness, poor workflow integration | Most pilots are not designed for scale |
| Orgs Reporting Enterprise EBIT Impact | 39%[15] | Successful scaled deployments, workflow redesign | True ROI requires business transformation |

Methodology Note: Data points were calculated based on survey responses and market analysis from the cited research firms between October 2025 and February 2026. Enterprise AI total cost and spending figures represent averages across surveyed organizations (mid-market to Fortune 500) and may not reflect all company sizes or industries.

Caveat: Market forecasts are subject to revision based on economic conditions and technology adoption rates. The ROI and failure rate data reflect different survey methodologies and definitions. The MIT pilot failure figure in particular has been debated by analysts who note limitations in the study’s sample and definition of “failure.” We cite it here as a directional indicator of the difficulty of scaling AI pilots, not as a precise universal benchmark.

What This Means: How Enterprises Are Sourcing AI Capabilities

- The projected $2.52 trillion market size for 2026 indicates that AI is no longer a niche technology but a foundational component of the global economy. The growth is driven by services and software, not just hardware, showing a maturation of the ecosystem.

- The enterprise market has shifted toward buying foundational AI capabilities while reserving custom development for systems that differentiate the business. In practice, many organizations combine these approaches, purchasing commodity AI services while building custom software that integrates those capabilities into their core platforms and workflows. The 76% buy rate reflects a pragmatic response to the high failure rates, cost overruns, and extended timelines associated with custom AI development.

- The path from a successful pilot to a scaled, profitable enterprise solution is the primary failure point for AI initiatives. Whether the precise number is 95% or somewhat lower, the directional message is consistent: most pilots stall before reaching production.

- However, analysts also warn that the rapid growth of agent-based AI has introduced significant hype into the market. Many early implementations are rebranded assistants or loosely coordinated tools rather than fully autonomous systems, which contributes to unrealistic expectations and increases the likelihood that early AI pilots fail to reach production scale.

- In practice, agentic systems change the cost structure: consumption (tokens/requests), monitoring, safety controls, and ongoing tuning become first-class drivers of AI software development costs.

- The 24% build / 76% buy metric reflects how enterprises source AI use cases (purchase vs. build), not a full allocation of total AI spending across all categories (infrastructure, services, software). Separately, the research behind the pilot failure figure[13] reports that high-performing organizations move from pilot to full implementation in roughly 90 days, while many enterprises take nine months or longer.

In Practice: Moving AI Initiatives from Pilot to Production

We see organizations using these AI spending benchmarks to validate their own budget allocations. If a company’s planned AI investment growth is significantly lower than the 75% average, it prompts a strategic discussion about whether the organization is investing enough to keep pace with competitors.

We advise organizations to focus first on where AI capabilities create real competitive advantage. Many enterprises can purchase generic AI tools, but integrating those capabilities into production systems often requires custom software, platform modernization, and disciplined engineering practices.

In practice, we most often see AI initiatives succeed when organizations treat AI as an extension of their existing platforms rather than as a standalone product. Architect-led teams can use AI-accelerated development workflows to integrate new capabilities directly into enterprise systems, allowing organizations to move from experimentation to production faster while maintaining governance and operational stability.

We help clients design AI initiatives that have a clear path to production from day one. For example, in a recent platform modernization[20] for a trusted Kansas City insurer, Keyhole used AI-accelerated workflows to replace the entire platform (UI, services, database, and administrative tooling) in roughly five months, compared with an estimated 18–24 months without AI tooling. The engagement succeeded, and at a lower total AI software development cost, because the production architecture, success metrics, data pipeline, and integration points with existing systems were defined before the first sprint began. Defining the production architecture early and pairing it with delivery discipline is often what separates pilots that scale from proofs of concept that stall.

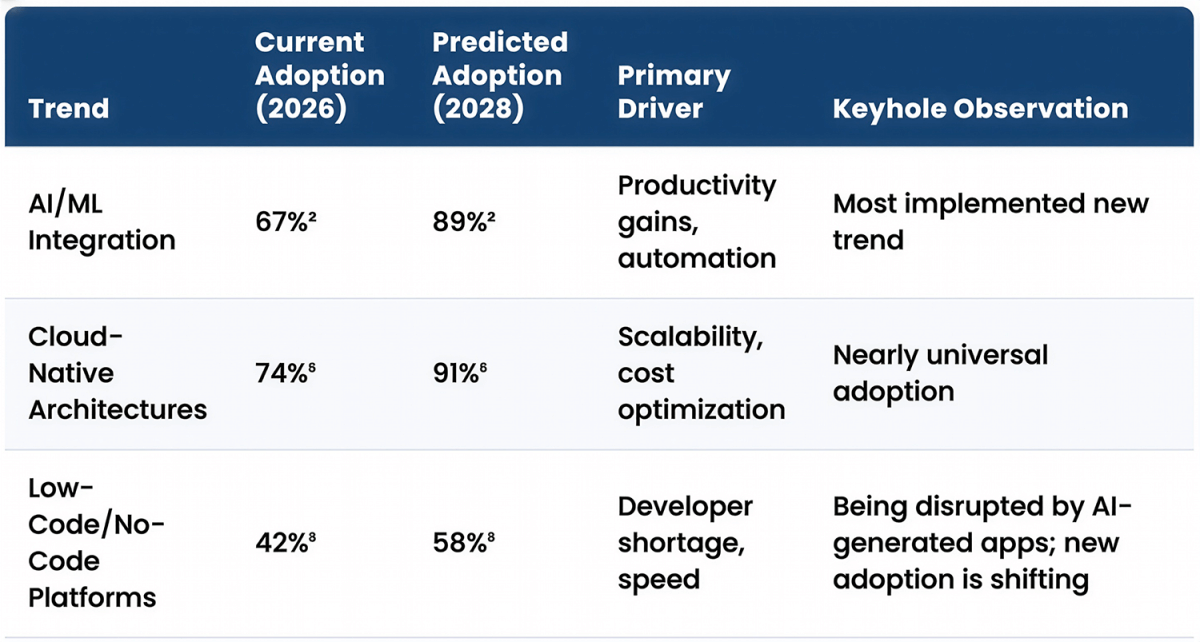

AI Cost Predictions for 2027-2028

Based on our analysis of current adoption rates and enterprise feedback, Keyhole predicts the following trends will shape AI development costs over the next two years:

- TCO Becomes the Primary Metric. Enterprise AI budgeting will increasingly require multi-year AI total cost of ownership modeling rather than simple upfront build estimates. We predict that by 2028, over 80% of enterprise AI budget approvals will require a multi-year AI TCO model that includes maintenance, data pipelines, and infrastructure costs.

- FinOps for AI Becomes Standard Practice. As AI spending becomes a more significant portion of IT budgets, CFOs will demand greater AI cost control and predictability. We predict that dedicated FinOps for AI teams, responsible for monitoring and optimizing AI-related spending, will be established in over 60% of Fortune 500 companies by 2028.

- Hybrid AI Architectures Become the Enterprise Standard. Rather than choosing strictly between buying or building AI systems, most organizations will adopt hybrid architectures. Commodity AI capabilities such as foundation models, embeddings, and developer tools will increasingly be purchased from specialized providers, while custom software will be built to integrate those capabilities into core business platforms and workflows. By 2028, the majority of enterprise AI initiatives will combine purchased AI services with custom-built application layers that deliver domain-specific business value.

- Architect-Led AI Delivery Becomes a Competitive Advantage. As AI tools accelerate development velocity, organizations will increasingly differentiate themselves not by how quickly they generate code, but by how effectively they govern architecture, testing, and production deployment. Teams that combine AI-assisted development with strong engineering discipline will consistently move AI initiatives from pilot to production faster than organizations relying on tooling alone.
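As an illustration of what a multi-year AI TCO model of the kind described in the first prediction might look like at its simplest, here is a hedged sketch. The function name `multi_year_tco`, the cost categories, the growth rate, and every dollar figure are hypothetical assumptions chosen for the example; they are not estimates drawn from this report's data.

```python
def multi_year_tco(
    build_cost: float,
    first_year_recurring: dict[str, float],
    years: int,
    annual_growth: float = 0.0,
) -> dict[str, float]:
    """Sum an upfront build cost and recurring costs over a planning horizon.

    first_year_recurring maps a cost category to its year-one cost. Each
    category is assumed to grow by the same annual_growth rate every year,
    which is a simplification made for this sketch.
    """
    recurring_total = 0.0
    for year in range(1, years + 1):
        growth_factor = (1 + annual_growth) ** (year - 1)
        recurring_total += sum(cost * growth_factor for cost in first_year_recurring.values())
    total = build_cost + recurring_total
    return {
        "build": build_cost,
        "recurring": recurring_total,
        "total": total,
        "build_share": build_cost / total,
    }


if __name__ == "__main__":
    # Placeholder inputs: a $400k build with $200k of year-one recurring cost.
    result = multi_year_tco(
        build_cost=400_000,
        first_year_recurring={
            "maintenance": 80_000,
            "data_pipelines": 50_000,
            "infrastructure": 70_000,
        },
        years=3,
        annual_growth=0.10,
    )
    print(f"Three-year TCO: ${result['total']:,.0f}")
    print(f"Upfront build as share of TCO: {result['build_share']:.0%}")
```

With these placeholder inputs, the upfront build ends up well under half of the three-year total, which is the kind of gap a build-only estimate hides. A production model would likely vary growth by category and add items such as retraining, licensing, and staffing.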

How Keyhole Software Helps Organizations Navigate AI Costs

With 100% U.S.-based senior consultants averaging 17+ years of experience, Keyhole brings architect-led delivery discipline to AI initiatives that might otherwise stall between pilot and production.

For example, in a recent enterprise generative AI engagement, Keyhole designed and implemented a secure Retrieval-Augmented Generation (RAG) architecture that allowed internal teams to query complex enterprise datasets using natural language while maintaining strict governance, auditability, and cost visibility.[21] The proof-of-concept validated a scalable architectural pattern for responsible AI adoption, giving the client confidence to expand generative AI capabilities beyond experimentation.

Rather than treating AI as a standalone technology initiative, we integrate AI coding tools, automated testing workflows, and agentic development patterns directly into the software delivery lifecycle so organizations can move AI capabilities from pilot to production without disrupting existing systems. This approach allows senior engineering teams to deliver complex enterprise systems faster while maintaining strong architectural governance. Our team has deep expertise in the trends shaping the future of software development, including:

- AI/ML and RAG Implementations: We help clients build custom AI solutions, including RAG systems[21] that enable large language models to access enterprise data without sending sensitive information to external APIs. This is critical for regulated industries with data privacy requirements. We structure AI as an acceleration layer within architect-led teams rather than a replacement for human judgment.

- Legacy & Cloud-Native Modernization: We help organizations modernize legacy platforms and migrate systems to cloud-native architectures on AWS and Azure. Our teams frequently apply AI-assisted development to accelerate legacy modernization initiatives, including large-scale refactoring and COBOL modernization efforts, while maintaining production stability. Architect-led modernization ensures that new platforms support future AI capabilities without requiring another full rebuild.

- AI System Governance and Observability: AI systems introduce new operational risks including data exposure, unpredictable outputs, and usage-based cost growth. Keyhole implements governance patterns such as access controls, auditability, monitoring, and evaluation pipelines so AI capabilities can be safely deployed into production environments.

Keyhole also works with organizations implementing AI-accelerated software development workflows, where architect-led teams use AI coding tools and automation to compress delivery timelines and thereby reduce software development costs.

Requesting More Information on AI Software Development Costs

If you’re evaluating AI software development costs, the most important question is not simply how much AI costs, but how AI changes the economics of software delivery.

Keyhole helps organizations define scope, model the true total cost of ownership of AI software development, and structure delivery approaches that hold up once systems meet real data, real users, and real operational constraints.

If you would like to discuss how these AI software development cost trends apply to your organization’s technology roadmap, or learn more about Keyhole’s custom software development services, contact our team.

References

1. Gartner, “Gartner Says Worldwide AI Spending Will Total $2.52 Trillion in 2026,” January 15, 2026. https://www.gartner.com/en/newsroom/press-releases/2026-1-15-gartner-says-worldwide-ai-spending-will-total-2-point-5-trillion-dollars-in-2026

2. Menlo Ventures, “2025: The State of Generative AI in the Enterprise,” December 9, 2025. https://menlovc.com/perspective/2025-the-state-of-generative-ai-in-the-enterprise/

3. Andreessen Horowitz (a16z), “How 100 Enterprise CIOs Are Building and Buying Gen AI in 2025,” June 10, 2025. https://a16z.com/ai-enterprise-2025/

4. Coherent Solutions, “AI Development Cost Estimation, Pricing Structure & ROI,” 2025. https://www.coherentsolutions.com/insights/ai-development-cost-estimation-pricing-structure-roi

5. SumatoSoft, “A Complete Breakdown of AI Software Development Cost in 2025,” December 2025. https://www.linkedin.com/pulse/complete-breakdown-ai-development-cost-2025-sumatosoft-ctxhf

6. Crescendo AI, “How Much Do AI Chatbots Cost? Estimates for 2026,” January 20, 2026. https://www.crescendo.ai/blog/how-much-do-chatbots-cost

7. ITRex, “Assessing the Cost of Implementing AI in Healthcare,” June 18, 2025. https://itrexgroup.com/blog/assessing-the-costs-of-implementing-ai-in-healthcare/

8. Cleveroad, “AI Agent Development Cost and Process,” 2025. https://www.cleveroad.com/blog/ai-agent-development/

9. CIO.com (Grant Gross), “AI cost overruns are adding up, with major implications for CIOs,” October 2, 2025. https://www.cio.com/article/4064319/ai-cost-overruns-are-adding-up-with-major-implications-for-cios.html

10. Levels.fyi, “Machine Learning Engineer Salary,” 2026. https://www.levels.fyi/t/machine-learning-engineer

11. Xicom, “How Much Does It Cost to Develop an AI Application in 2026?” 2026. https://www.xicom.biz/blog/how-much-does-it-cost-to-develop-an-ai-application/

12. Aalpha.net, “Cost of Implementing AI in Healthcare,” 2025. https://www.aalpha.net/blog/cost-of-implementing-ai-in-healthcare/

13. MIT NANDA Initiative, “The GenAI Divide: State of AI in Business 2025,” August 2025, as reported by Fortune. https://fortune.com/2025/08/18/mit-report-95-percent-generative-ai-pilots-at-companies-failing-cfo/

14. Deloitte, “AI and tech investment ROI,” October 16, 2025. https://www.deloitte.com/us/en/insights/topics/digital-transformation/ai-tech-investment-roi.html

15. McKinsey & Company, “The State of AI: Global Survey 2025,” November 5, 2025. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

16. Zylo, “2026 SaaS Management Index: The Rise of AI-Native Apps,” February 2026. https://zylo.com/blog/ai-cost/

17. Keyhole Software, “Auto Damage Detection App with Java, React, and AI,” Project Case Study. https://keyholesoftware.com/projects/auto-damage-detection-app-with-java-react-and-ai/

18. Keyhole Software, “St. Louis Healthcare AWS Modernization,” Project Case Study. https://keyholesoftware.com/projects/st-louis-healthcare-aws-modernization/

19. Keyhole Software, “AI-Accelerated COBOL Modernization to Spring Batch,” Project Case Study. https://keyholesoftware.com/projects/ai-accelerated-cobol-modernization-to-spring-batch/

20. Keyhole Software, “Kansas City Insurance Platform Modernization (AI-Assisted),” Project Case Study. https://keyholesoftware.com/projects/kansas-city-insurance-platform-modernization-ai-assisted/

21. Keyhole Software, “Enterprise Generative AI POC,” Project Case Study. https://keyholesoftware.com/projects/enterprise-generative-ai-poc/

22. Peng, Sida, et al., “The Impact of AI on Developer Productivity: Evidence from GitHub Copilot,” arXiv, 2023. https://arxiv.org/abs/2302.06590

23. Google Cloud, “DORA 2024 Accelerate State of DevOps Report,” 2024. https://cloud.google.com/devops/state-of-devops

24. Reuters, “Over 40% of agentic AI projects will be scrapped by 2027, Gartner says.” https://www.reuters.com/business/over-40-agentic-ai-projects-will-be-scrapped-by-2027-gartner-says-2025-06-25

25. Anaconda, “State of Data Science 2020: The AI & Machine Learning Report.” https://www.anaconda.com/state-of-data-science

26. InfoQ, “AI Is Amplifying Software Engineering Performance, Says the 2025 DORA Report.” https://www.infoq.com/news/2026/03/ai-dora-report/

Last updated: March 18, 2026

About Keyhole Software

Expert team of software developer consultants solving complex software challenges for U.S. clients.