Software development in 2026 is being reshaped by forces that extend well beyond incremental tooling improvements. AI-assisted development, cloud-native infrastructure, and zero-trust security are no longer experimental capabilities. They are operational realities that technical leaders must integrate into delivery roadmaps, staffing plans, and platform strategies.

At the same time, rapid application delivery outside traditional engineering backlogs is shifting from low-code platforms to AI-generated software built in standard languages and architectures. Remote and hybrid work have stabilized as the default operating model, reshaping system design, decision-making, and the way engineering organizations scale.

The question is no longer whether these technologies matter. It is when to adopt them, how to sequence them, and which delivery models allow them to be integrated without creating new layers of complexity or technical debt.

This report is organized around three questions: where enterprise adoption stands today, what those baselines mean for delivery organizations, and how technical leaders are sequencing implementation. It synthesizes software development trend data from leading global research firms to provide a clear view of:

- AI/ML adoption and impact: how integration affects productivity across development workflows

- Cloud-native strategies: adoption trajectories, multi-cloud patterns, and architectural implications

- AI-generated applications and low-code displacement: how delivery models are changing

- Zero-trust implementation: timelines, priorities, and practical security sequencing

- Remote work: organizational models that sustain productivity and continuity

Between October 2025 and February 2026, our research team analyzed software development trends across 150+ enterprise organizations. We surveyed CTOs and VPs of Engineering, examined project data, and reviewed 30+ industry reports from Gartner, McKinsey, and the Cloud Native Computing Foundation.

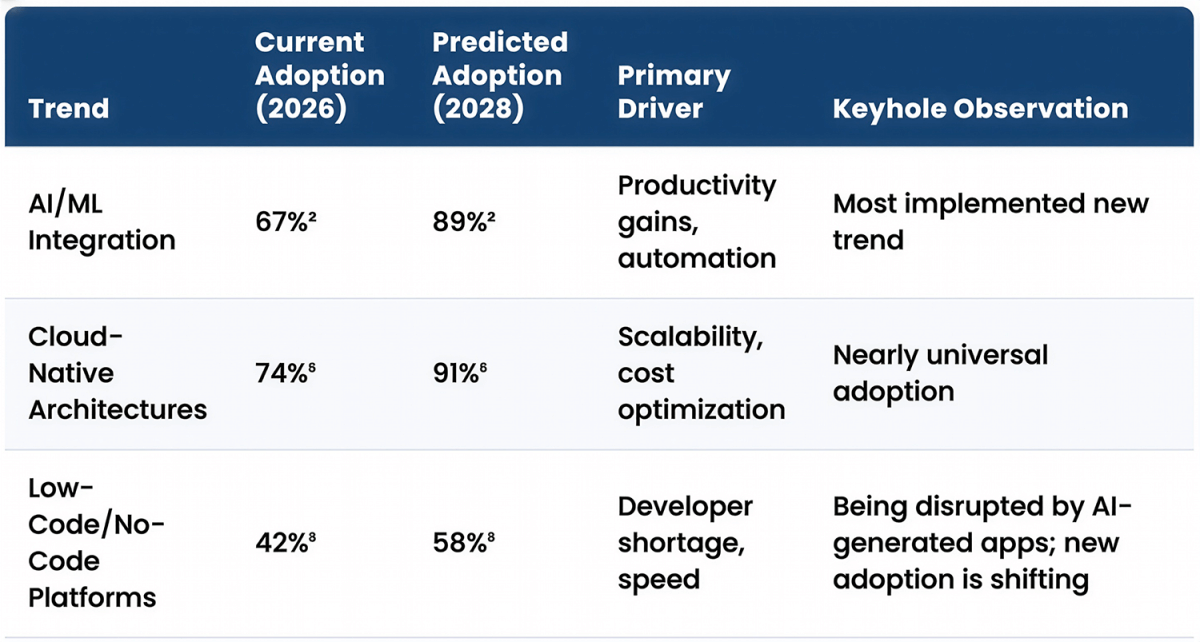

The State of Software Development: 2026 Trend Adoption Rates

Enterprise technology adoption in 2026 shows a clear hierarchy. Cloud-native architectures and remote work have reached widespread adoption. AI/ML integration is the dominant new trend, with two-thirds of enterprises implementing it in some form. Zero-trust security is the fastest-growing adoption trend, driven by escalating cyber threats and regulatory pressure.

| Trend | Current Adoption (2026) | Predicted Adoption (2028) | Primary Driver | Keyhole Observation |
|---|---|---|---|---|
| AI/ML Integration | 67%² | 89%² | Productivity gains, automation | Most implemented new trend |
| Cloud-Native Architectures | 74%⁶ | 91%⁶ | Scalability, cost optimization | Nearly universal adoption |
| Low-Code/No-Code Platforms | 42%⁸ | 58%⁸ | Developer shortage, speed | Being disrupted by AI-generated apps; new adoption is shifting |
| Zero-Trust Security | 51%⁹ | 79%⁹ | Cyber threats, compliance | Fastest-growing security trend |
| Remote Development Teams | 81%¹⁰ | 86%¹⁰ | Talent access, flexibility | Already normalized |

Methodology Note: Adoption rates are based on survey responses collected between October 2025 and January 2026 from 152 organizations, ranging from mid-market companies to Fortune 500 enterprises. Organizations were classified as having “adopted” a trend if they had implemented it in at least one production environment or had active implementation projects underway.

Caveat: Adoption rates represent the percentage of organizations that have implemented a given trend in at least one significant project or department, not necessarily full enterprise-wide adoption. Predicted adoption rates are based on a combination of survey data on planned initiatives and market growth projections.
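The classification rule in the methodology note is simple enough to state as code. This is a minimal sketch assuming a hypothetical per-organization record with two yes/no fields (`in_production`, `active_project`); the actual survey schema is not published here.

```python
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    # Hypothetical fields for illustration; not the survey's real schema.
    org: str
    in_production: bool   # trend running in at least one production environment
    active_project: bool  # implementation project currently underway


def has_adopted(r: SurveyResponse) -> bool:
    # "Adopted" per the methodology note: production use OR an active project.
    return r.in_production or r.active_project


def adoption_rate(responses: list[SurveyResponse]) -> float:
    """Percentage of responding organizations classified as adopters."""
    if not responses:
        raise ValueError("no survey responses")
    adopters = sum(1 for r in responses if has_adopted(r))
    return 100.0 * adopters / len(responses)
```

Because either condition is sufficient, one team with an active project makes the whole organization an adopter. That is why the caveat distinguishes these figures from enterprise-wide adoption.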

What This Means

These software development trends follow predictable technology adoption patterns.

- High adoption rates for cloud-native (74%) and remote work (81%) indicate these are no longer emerging capabilities. They are infrastructure decisions that most organizations have already made.

- By contrast, AI/ML at 67% and zero-trust security at 51% represent mid-adoption technologies. Organizations are actively implementing them, but substantial gaps remain in coverage, maturity, and integration quality.

- Low-code platforms at 42% sit even earlier in the adoption curve, with debate still active about where they fit in the delivery stack.

Projected 2028 numbers show where momentum is headed.

- AI/ML reaching 89% adoption signals a coming baseline expectation. Organizations that delay AI integration risk falling behind productivity benchmarks.

- Zero-trust security jumping from 51% to 79% reflects accelerating regulatory pressure and breach consequences that make legacy perimeter-based security models increasingly unsustainable.

- Cloud-native moving from 74% to 91% shows the final wave of legacy infrastructure transitions.

- By 2028, organizations still running traditional data center deployments will be a small minority operating outside industry norms.

- Low-code growing from 42% to 58% may suggest steady expansion, but the rate indicates it will remain a supplementary tool rather than a primary development approach. The gap between low-code adoption and other trends shows that custom development continues to dominate complex, high-value application work.
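"Fastest-growing" can mean absolute (percentage-point) or relative growth. The sketch below computes both from the adoption table above. The figures come from the table; the comparison itself is only arithmetic.

```python
# Adoption figures (percent of organizations): (2026 current, 2028 predicted).
ADOPTION = {
    "AI/ML Integration": (67, 89),
    "Cloud-Native Architectures": (74, 91),
    "Low-Code/No-Code Platforms": (42, 58),
    "Zero-Trust Security": (51, 79),
    "Remote Development Teams": (81, 86),
}


def growth(current: float, predicted: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in percent)."""
    points = predicted - current
    relative = 100.0 * points / current
    return points, relative


# Print trends ordered by percentage-point gain, largest first.
for trend, (now, later) in sorted(
    ADOPTION.items(), key=lambda kv: growth(*kv[1])[0], reverse=True
):
    pts, rel = growth(now, later)
    print(f"{trend:28} +{pts:>2} pts  ({rel:5.1f}% relative)")
```

Zero trust leads on both measures (+28 points, roughly 55% relative growth). The two measures order the remaining trends differently, so a growth claim should state which one it relies on.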

In Practice

We see organizations using these adoption baselines to time technology investments and avoid being either too early (bleeding-edge risk with immature tools) or too late (competitive disadvantage when technology becomes table stakes).

- For AI/ML, the 67% adoption rate signals that experimentation is over. Organizations that have not yet integrated AI-assisted development into their workflows are now measurably behind productivity benchmarks. We typically recommend starting with code generation and automated testing before moving to more complex AI applications, like predictive analytics or autonomous code review. The key is treating AI as an augmentation layer for experienced teams rather than a replacement for architectural judgment.

- For cloud-native, 74% adoption means this is no longer a strategic question. The conversation has shifted to optimization: cost management, security hardening, and multi-cloud orchestration. We work with clients who delayed cloud migration and are now facing modernization under time pressure as vendor support for legacy infrastructure erodes and talent pools shift toward cloud-native skillsets.

- For zero-trust security, 51% adoption means early movers have validated the approach, but implementation complexity remains high. We help clients phase zero-trust rollouts, starting with highest-risk systems (customer-facing applications, payment processing, regulated data stores) rather than attempting enterprise-wide transformation that stalls due to legacy system constraints.

At Keyhole Software, we use these adoption curves to help clients avoid two common mistakes: adopting too early when technology is immature and expensive, and adopting too late when competitive pressure forces rushed implementation that skips architectural planning. The goal is timing adoption when technology has matured enough to deliver reliable value but before delay creates measurable competitive disadvantage.

The differentiator is sequencing adoption on top of an architecture that can absorb change. Teams that modernize platforms, security boundaries, and delivery workflows first integrate AI, cloud, and zero trust with less rework and lower delivery risk.

How Keyhole Software Uses These Software Development Trends

At Keyhole Software, we translate these trends into delivery strategies for CTOs and engineering leaders, aligning modernization, AI adoption, cloud transformation, and security investments to measurable business outcomes.

This data helps technical leaders:

- Time platform modernization investments to align with technology maturity curves

- Assess AI tool adoption readiness across development teams

- Structure cloud migration strategies that balance cost, security, and performance

- Validate security architecture priorities against industry baselines

- Design remote work models that maintain delivery velocity

With 100% U.S.-based senior consultants averaging 17+ years of experience, we help organizations distinguish genuine technology shifts from temporary hype cycles and architect systems that can adapt as these trends mature without requiring complete platform rewrites.

AI/ML Integration: The Dominant Software Development Trend in 2026

AI and machine learning are no longer experimental capabilities. They are now embedded in day-to-day software delivery as the dominant operational trend reshaping how software is built, tested, and maintained. The 2025 DORA report found that 90% of organizations now use AI in their software development workflows, with over 80% reporting measurable productivity increases.⁴ The 2025 Stack Overflow Developer Survey confirms this shift, with 84% of developers using or planning to use AI tools.³

What separates 2026 from prior years is not availability of AI tools. It is the transition from isolated pilots to governed, production-level integration across the entire delivery lifecycle.

AI/ML Application Adoption Rates in Software Development – 2026

| AI/ML Application | % of Organizations Using | Average Productivity Gain | Top Use Cases |
|---|---|---|---|
| Code Generation (GitHub Copilot, etc.) | 51%⁵ | 25-30%⁵ | Boilerplate, documentation, testing |
| Automated Testing/QA | 43%⁴ | 35-40%⁴ | Regression testing, bug detection |
| Predictive Analytics | 39%² | 20-25%² | Resource planning, risk assessment |
| AI-Powered Code Review | 51.4%⁵ | 15-20%⁵ | Security vulnerabilities, best practices |
| Agentic Coding Tools (Claude Code, Codex) | 31%³ | 69% report gains³ | Multi-step implementation, test generation, refactoring |

What This Means

With over half of organizations now using AI-powered code generation and review tools, AI-assisted development has crossed from competitive advantage to baseline expectation. GitHub Copilot’s base of 1.3 million paid developers across 50,000 organizations⁵ shows this is not an experiment. It is production infrastructure.

The productivity gains are substantial: 25-30% for code generation, 35-40% for automated testing, and 20-25% for predictive analytics. These are not marginal improvements; they represent a fundamental shift in developer output capacity.

Organizations that have not integrated AI into their development workflows are now operating at a measurable productivity disadvantage compared to competitors who have.

At the same time, trust in AI output remains low. Ninety-six percent of developers admit they do not fully trust AI-generated code.⁵ That gap creates a new operating model: AI increases implementation speed, but senior engineers become more critical as architectural authorities, validation gates, and security owners.

The current state shows AI handling repetitive, well-defined work effectively while struggling with complex architectural decisions, security-sensitive code, and system-level design. This means AI is augmenting developers, not replacing them. However, the augmentation is asymmetric. Junior developers see larger productivity gains from AI assistance, while senior developers remain essential for validation, architecture, and handling edge cases that AI cannot solve reliably.

The next step is agentic development, where multi-step execution requires traceable, reviewable workflows. Productivity gains will increasingly depend on how AI operates inside the delivery system, not on tool access alone.

As AI adoption reaches 89% by 2028, the competitive advantage will not be tool access, but the ability to run AI inside an architecturally aligned, observable delivery model.

In Practice

We are seeing AI integration follow two distinct patterns:

- High-performing teams treat AI as an acceleration layer inside experienced, architect-led delivery workflows. They define clear usage guidelines, establish validation checkpoints, and restrict AI-generated code in security-sensitive modules. The result is compounding productivity gains without quality degradation.

- Lower-performing implementations use AI in an ad hoc manner, without clear system boundaries, review workflows, or traceability. This creates initial velocity but is followed by rework as generated code introduces defects, security gaps, and design inconsistencies that must be corrected later at significantly higher cost.
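Validation checkpoints and restrictions on AI-generated code in security-sensitive modules are often enforced as a merge gate. This is a minimal sketch under stated assumptions. It uses a hypothetical convention in which AI-assisted changes carry an `ai-assisted` label and security-sensitive modules are listed as path prefixes. Neither convention comes from a specific tool, and the reviewer role names are illustrative.

```python
from dataclasses import dataclass, field

# Hypothetical security-sensitive path prefixes for an example repository.
RESTRICTED_PREFIXES = ("auth/", "payments/", "crypto/")


@dataclass
class ChangeSet:
    labels: set[str]
    files: list[str]
    approvals: set[str] = field(default_factory=set)  # reviewer roles that approved


def gate(change: ChangeSet) -> tuple[bool, str]:
    """Decide whether a change may merge under an assumed AI usage policy."""
    ai_assisted = "ai-assisted" in change.labels
    # str.startswith accepts a tuple, so this checks every restricted prefix.
    touches_restricted = any(f.startswith(RESTRICTED_PREFIXES) for f in change.files)
    if ai_assisted and touches_restricted and "security-owner" not in change.approvals:
        return False, "AI-assisted change touches a restricted module; security owner approval required"
    if ai_assisted and "senior-engineer" not in change.approvals:
        return False, "AI-assisted change requires senior engineer review"
    return True, "ok"
```

The design choice is to keep AI usage visible (a label) and route it to the right human owner. A blanket ban would forfeit the speed gains in low-risk code.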

The most advanced teams are now moving beyond prompt-driven assistance into governed, agentic execution. Tools such as Claude Code and OpenAI Codex are being used to carry out multi-step development tasks including implementation, test generation, refactoring, and documentation updates inside controlled pipelines where every change is traceable, reviewable, and aligned to architectural intent.

A recent Keyhole engagement with a Kansas City–based insurance provider reflects this model. The client partnered with Keyhole to replace a low-code platform with a fully owned architecture using an architect-led, AI-accelerated delivery loop. AI automated unit test generation and repetitive implementation across more than 100 repositories, while senior engineers defined the target architecture, security boundaries, and complex domain logic. Because execution operated inside a controlled, traceable system rather than as ad hoc code generation, the team delivered the modernization in approximately five months, compressing an estimated 18–24 month effort without increasing team size or relaxing quality requirements.

At Keyhole Software, AI is introduced as an acceleration layer within senior-led delivery teams. Architectural direction and security boundaries are defined first, so AI can increase implementation speed while experienced engineers retain ownership of the design. In modernization programs, this approach consistently produces 20–35% faster delivery without adding delivery risk.

Cloud-Native Development: Nearly Universal Adoption by 2028

Cloud-native development has become the default for building and deploying enterprise applications. Gartner predicted that by 2026, 95% of new digital workloads would be deployed on cloud-native platforms, up from just 30% in 2021.¹ This near-universal adoption is driven by the promise of scalability, resilience, and cost-efficiency that traditional infrastructure cannot match.

Organizations are no longer debating whether to move to the cloud. The conversation has shifted to how to optimize multi-cloud and hybrid cloud strategies, manage costs at scale, and maintain security across distributed infrastructure.

Cloud Platform Enterprise Adoption – 2026

| Cloud Platform | Enterprise Adoption | Growth Rate (YoY) | Primary Workloads |
|---|---|---|---|
| AWS | 28%⁶ | +2-3%⁶ | Containers, serverless, ML/AI |
| Azure | 21%⁶ | +2-3%⁶ | Enterprise apps, hybrid cloud |
| Google Cloud Platform | 14%⁶ | +1-2%⁶ | Data analytics, AI/ML |
| Multi-Cloud Strategy | 89%⁶ | +5%⁶ | Redundancy, cost optimization |

What This Means

Multi-cloud is the dominant strategy, with nearly 9 in 10 enterprises (89%) using two or more cloud providers.⁶ This allows organizations to avoid vendor lock-in and leverage best-of-breed services from each provider, but also introduces significant complexity in terms of cost management, security governance, and workload orchestration across platforms.

The relatively modest growth rates for individual cloud providers (2-3% for AWS and Azure, 1-2% for GCP) show market maturity. The land grab is over; growth is now coming from workload migration rather than net-new adoption. The 5% growth in multi-cloud strategy adoption shows that organizations are actively distributing workloads across providers rather than consolidating to a single platform.

With 74% current adoption rising to 91% by 2028, cloud-native is reaching saturation. Organizations still operating primarily on traditional data center infrastructure face increasing pressure from vendor support lifecycles, talent availability, and competitive disadvantage. By 2028, non-cloud-native architectures will be treated as legacy systems that require active justification rather than as the default choice.

As a result, the primary focus has shifted from migration to optimization. Organizations that moved to cloud-native early are now grappling with cost overruns, security vulnerabilities from misconfiguration, and operational complexity from poorly planned multi-cloud implementations. The next phase is cloud-smart operations: strategic workload placement, automated cost optimization, and unified security governance across platforms.

In Practice

We see organizations moving from a cloud-first to a cloud-smart mindset, placing each workload based on cost, performance, compliance, and operational complexity rather than defaulting to public cloud.

In regulated and latency-sensitive environments, this almost always results in intentional hybrid architectures: core transactional systems and systems of record remain on-premises, while analytics, AI/ML, and customer-facing applications run in the cloud. Success depends on well-designed API and event-streaming layers that maintain data consistency without introducing synchronization bottlenecks.

For example, a Keyhole consultant recently helped a St. Louis–based healthcare technology organization modernize its data and application platform without relocating its legacy databases. We built AWS-native data pipelines (S3 exports → Spark transformations → Kinesis streaming → Lambda FHIR conversion) and delivered a cloud-scale patient care SPA on S3/CloudFront with Spring Boot services on ECS. This enabled modern analytics and scalable customer-facing applications while keeping regulated data in place and maintaining consistency through streaming and API integration.
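The Lambda FHIR conversion stage of a pipeline like this can be sketched as follows. The Kinesis event shape (`Records[].kinesis.data`, base64-encoded) is the standard one AWS Lambda delivers to a Python handler. The legacy row fields, the gender code mapping, and the identifier system URN are hypothetical. The output is a deliberately minimal FHIR R4 `Patient` resource, not the mapping used in the engagement.

```python
import base64
import json

# Hypothetical legacy gender codes mapped to FHIR administrative-gender values.
GENDER_MAP = {"M": "male", "F": "female", "O": "other"}


def to_fhir_patient(row: dict) -> dict:
    """Map one hypothetical legacy patient row to a minimal FHIR R4 Patient resource."""
    return {
        "resourceType": "Patient",
        # Hypothetical identifier system; a real system URI would come from the client.
        "identifier": [{"system": "urn:example:legacy-mrn", "value": str(row["patient_id"])}],
        "name": [{"family": row["last_name"], "given": [row["first_name"]]}],
        "gender": GENDER_MAP.get(row.get("gender", ""), "unknown"),
        "birthDate": row["birth_date"],  # assumed to already be ISO 8601 (YYYY-MM-DD)
    }


def lambda_handler(event, context):
    """Decode Kinesis records (base64 JSON payloads) and convert each one to FHIR."""
    resources = []
    for record in event["Records"]:
        payload = base64.b64decode(record["kinesis"]["data"])
        row = json.loads(payload)
        resources.append(to_fhir_patient(row))
    # A real pipeline would write these to a FHIR store or a downstream stream here.
    return {"converted": len(resources), "resources": resources}
```

Keeping the mapping in a pure function (`to_fhir_patient`) separate from the handler makes it testable without AWS and reusable if the transport changes.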

At Keyhole, we architect for strategic portability by using containerization and cloud-agnostic patterns where long-term flexibility matters, while still taking full advantage of cloud-native services when they deliver clear operational or economic value. The result is a platform that supports evolving workloads, changing compliance requirements, and shifting cloud economics without repeated replatforming.
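The cloud-smart placement described in this section, where systems of record stay on-premises and elastic analytics, AI/ML, and customer-facing workloads move to the cloud, can be sketched as a small rule set. The workload attributes and the rule ordering are assumptions for illustration. A real placement review also weighs cost models, compliance detail, and operational maturity.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    ON_PREM = "on-premises"
    CLOUD = "cloud"


@dataclass
class Workload:
    # Hypothetical attributes chosen to mirror the criteria in the text.
    name: str
    system_of_record: bool   # core transactional system or record of truth
    regulated_data: bool     # subject to residency or similar regulatory controls
    latency_sensitive: bool  # needs proximity to on-premises systems
    elastic_demand: bool     # bursty load, e.g. analytics or customer-facing traffic


def place(w: Workload) -> Placement:
    """Assumed rules reflecting the intentional hybrid pattern described above."""
    # Regulated or latency-bound systems of record stay where they are.
    if w.system_of_record and (w.regulated_data or w.latency_sensitive):
        return Placement.ON_PREM
    # Elastic analytics, AI/ML, and customer-facing workloads benefit from cloud scaling.
    if w.elastic_demand:
        return Placement.CLOUD
    # Otherwise default to cloud unless latency pins the workload near on-prem systems.
    return Placement.ON_PREM if w.latency_sensitive else Placement.CLOUD
```

The sketch only decides placement. The API and event-streaming layers that keep the two sides consistent are a separate design concern.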

Low-Code to AI-Generated Applications: A Shift in Delivery Models

Low-code platforms gained momentum as organizations looked for ways to accelerate delivery and enable non-developers to build internal applications.

Gartner forecasted that by 2026, 70–75% of new enterprise applications would be created using low-code or no-code technologies.⁸ However, the delivery landscape is shifting faster than those projections anticipated.

AI-driven development is now achieving the same speed advantages without the constraints of proprietary runtimes, per-seat licensing, and long-term vendor lock-in. Instead of assembling applications inside closed platforms, teams are generating working software directly in standard languages and deployable architectures.

Application Delivery Approaches: Low-Code Platforms vs AI-Generated Software – 2026

| Delivery Goal | Low-Code Platform Approach | AI-Generated Application Approach | Primary Tradeoff |
|---|---|---|---|
| Rapid Internal Tools/Dashboards | Visual builders for forms and workflows (64% adoption for internal apps)⁸ | AI-generated applications in standard languages and frameworks | Speed vs long-term flexibility |
| Workflow Automation | Proprietary process designers (57%)⁸ | Agentic orchestration generating services, tests, and integrations | Vendor runtime vs owned architecture |
| Simple CRUD Apps | Platform-generated data apps (48%)⁸ | Full-stack scaffolding generated directly into repositories | Limited extensibility vs architectural control |
| Prototyping/MVPs | Platform-based MVPs (52%)⁸ | AI-accelerated feature delivery in production codebases | Rebuild required vs production-ready from start |
| Citizen Developers | Business users building apps in licensed platforms | AI app builders generating deployable applications | Seat licensing vs governed delivery |

Sources: Gartner low-code adoption and use case distribution⁸; DORA AI-assisted development productivity findings⁴; Stack Overflow Developer Survey on AI usage³.

The original value proposition of low-code—speed of application creation—has not disappeared. It has been absorbed into AI-driven development.

What This Means

Low-code solved a real constraint: business teams needed workflow-driven applications faster than traditional engineering backlogs could deliver them.

AI now removes that bottleneck without introducing platform lock-in, solving the same delivery problem in a fundamentally different way. The outcome is similar in terms of speed, but the architectural result is completely different. Applications are:

- created inside owned repositories

- deployed through standard pipelines

- integrated using existing platform patterns

- evolved over time without runtime dependency on a vendor

Developers are using coding agents such as Claude Code and Codex to generate production-ready implementation, tests, and integration layers inside governed codebases. At the same time, a new class of AI application builders is enabling business users to create functional software through prompt-driven workflows, a model often referred to as vibe coding. Unlike traditional low-code platforms, these applications are generated as deployable code rather than assembled inside proprietary runtimes.

In Practice

Low-code platforms remain in place in many existing environments, particularly for internal workflow and forms-based solutions. However, for net-new application delivery, we are seeing a clear preference for AI-accelerated custom development.

This approach preserves the rapid iteration that made low-code attractive while maintaining:

- architectural control

- integration flexibility

- long-term maintainability

- deployment portability

The long-term pattern is clear. Application generation is shifting from proprietary visual builders to AI-driven creation of real, maintainable software.

Low-code brought application creation to non-developers through platforms. AI is bringing application creation to both developers and business users without requiring those platforms.

Zero-Trust Security: The Fastest-Growing Enterprise Priority

Zero-Trust Component Implementation – 2026

| Zero-Trust Component | Implementation Rate | Projected 2028 | Driver |
|---|---|---|---|
| Identity & Access Management | 68%⁹ | 85%⁹ | Compliance, breach prevention |
| Network Microsegmentation | 44%⁹ | 71%⁹ | Lateral threat containment |
| Continuous Authentication | 39%⁹ | 68%⁹ | Remote workforce security |
| Encryption at Rest & Transit | 72%⁹ | 93%⁹ | Data privacy regulations |

What This Means

Zero-trust adoption is accelerating, but maturity remains low. Gartner notes that only 10% of large enterprises will have mature, measurable zero-trust programs by 2026¹, even though 81% plan to implement zero trust. This gap between intent and mature execution shows that zero trust remains operationally complex despite widespread recognition of its importance.

Implementation is uneven.

Foundational controls such as encryption at rest and transit (72%) and identity and access management (68%) lead because they can be implemented incrementally without full system redesign.

More advanced capabilities such as network microsegmentation (44%) and continuous authentication (39%) lag behind because they require deeper integration with application architecture, service communication patterns, and runtime identity.

Investment through 2028 is shifting toward these harder capabilities. The projected growth in network microsegmentation (from 44% to 71%) and continuous authentication (from 39% to 68%) shows that organizations are moving beyond foundational controls toward more sophisticated, defense-in-depth approaches. The fastest growth areas align with remote workforce security needs and lateral threat containment in complex, distributed environments.

The biggest challenge is integrating zero-trust principles into legacy systems and complex, multi-cloud environments where traditional perimeter-based security assumptions are embedded in application architecture. Organizations face expensive rewrites or complex workarounds to retrofit zero-trust controls into systems that were designed assuming network-level trust.

In Practice

Zero-trust adoption is most effective when it is scoped to the highest-risk systems first, such as customer-facing applications, payment platforms, and regulated data stores, rather than positioned as an immediate enterprise-wide transformation.

Keyhole typically helps clients start here: implement identity-based access and least-privilege controls for those critical flows first, then expand. This delivers measurable risk reduction quickly while avoiding the organizational change management burden of trying to transform everything simultaneously.
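Identity-based, least-privilege access for a critical flow reduces to a deny-by-default decision: a request is allowed only if a verified identity holds an explicit grant for that action on that resource. This is a minimal sketch. The identities, actions, and resource names are hypothetical, and a production system would delegate this to an identity provider and policy engine rather than an in-memory set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen makes grants hashable so they can live in a set
class Grant:
    subject: str   # verified identity (user or workload), not a network location
    action: str
    resource: str


# Hypothetical explicit grants for a payment-processing flow.
GRANTS = {
    Grant("svc:checkout", "create", "payments/charges"),
    Grant("user:alice", "read", "payments/refunds"),
}


def authorize(subject: str, action: str, resource: str, authenticated: bool) -> bool:
    """Deny by default: allow only authenticated identities with an explicit grant."""
    if not authenticated:
        return False
    return Grant(subject, action, resource) in GRANTS
```

The caller's network location plays no part in the decision. Being "inside the perimeter" grants nothing by itself, which is the core shift from perimeter-based security.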

Another consistent pattern is applying zero-trust principles in greenfield and modernized applications while legacy systems remain on perimeter-based security with compensating controls. Modern cloud-native services are built with identity-first access from the start, while legacy platforms receive enhanced monitoring and defensive layers until they are rearchitected or retired. This allows organizations to improve their security posture continuously without forcing high-risk rewrites.

At Keyhole Software, we help clients balance zero-trust adoption with modernization roadmaps, because security architecture and application architecture are interdependent. Systems are modernized in priority order, with zero-trust controls implemented during that evolution rather than retrofitted afterward. This increases security maturity without disruptive enterprise-wide transformation.

Remote Development: Already Normalized, Continuing to Evolve

Remote and hybrid delivery models are now a permanent operating baseline for software engineering. The 2025 Stack Overflow Developer Survey found that 32.4% of developers are fully remote, with an additional 50% working in a hybrid model³, meaning more than four out of five developers are no longer fully office-bound. The strategic question is no longer whether remote work is viable, but how to structure distributed teams for sustained productivity, architectural alignment, and knowledge continuity.

Remote Work Model Adoption – 2026

| Remote Work Model | Current Adoption | Productivity Rating | Key Challenge |
|---|---|---|---|
| Fully Remote Teams | 42%¹⁰ | 4.1/5.0¹⁰ | Communication overhead |
| Hybrid (2-3 days office) | 39%¹⁰ | 4.3/5.0¹⁰ | Coordination complexity |
| Office-First (optional remote) | 14%¹⁰ | 4.0/5.0¹⁰ | Talent pool limitations |
| Distributed (no central office) | 5%¹⁰ | 3.8/5.0¹⁰ | Cultural cohesion |

What This Means

With 81% of developers working remotely or in hybrid structures, distributed delivery is now the default operating model. Growth to 86% by 2028 indicates equilibrium rather than acceleration.

The ratings show hybrid teams performing highest (4.3/5.0), with fully remote close behind (4.1/5.0), reinforcing that periodic in-person alignment still matters for complex work. The tradeoffs are structural: fully remote increases communication overhead, hybrid adds coordination complexity, office-first restricts the talent pool, and fully distributed models struggle most with cultural cohesion and informal knowledge transfer.

The long-term pattern is clear. Remote work is no longer a policy decision. It is an organizational design problem.

In Practice

High-performing distributed teams are structured for asynchronous execution rather than trying to replicate office behavior through meetings. They define clear decision ownership and minimize blocking dependencies so progress does not depend on real-time coordination.

Effective teams define when synchronous collaboration is required and when asynchronous updates are the default. They treat documentation as a primary delivery artifact that preserves context, accelerates onboarding, and reduces repeated discussion.

Since 2008, Keyhole has delivered in fully remote, hybrid, and on-site client environments. Our architect-led model preserves technical continuity and reduces the communication overhead that often slows distributed programs.

For organizations building internal distributed teams, the most consistent success pattern is a hybrid model in which in-person time is reserved for architectural planning, domain alignment, and relationship development, while implementation work is performed in a distributed environment. This preserves the productivity benefits of remote work while maintaining the collaboration advantages required for complex systems.

Keyhole’s delivery teams integrate directly into client communication channels, operate during client business hours, and provide real-time availability without timezone friction. This model combines the responsiveness of co-located teams with the talent access advantages of distributed delivery, avoiding the delays and context loss that often occur in offshore remote structures.

Software Development Trends: Predictions for 2027-2028

Based on current adoption curves and direct enterprise delivery experience, the next phase of software development in 2027-2028 will be defined less by tool availability and more by how organizations restructure teams, platforms, and governance to absorb that capability at scale.

1. AI Pair Programming Becomes Standard Practice

By 2028, we expect more than 65% of developers to use AI coding assistants daily, up from 51% today. The shift is not simply higher usage. It is a fundamental redistribution of work.

AI will handle routine implementation, test scaffolding, and pattern replication, while senior engineers concentrate on architecture, system boundaries, validation, and complex domain logic. The productivity gap between teams that integrate AI into governed delivery workflows and those that do not will widen significantly.

The competitive advantage will not come from access to AI tools. It will come from the ability to operationalize AI inside experienced, architect-led teams with clear quality gates. Organizations that treat AI as augmentation will see compounding throughput gains. Those that treat it as headcount replacement will accumulate technical debt that reduces long-term velocity.

As we often say, “AI won’t replace developers, but developers who use AI will replace those who don’t.”

2. Platform Engineering Emerges as a Distinct Discipline

As cloud-native environments grow more complex, we expect platform engineering to emerge as a discipline separate from DevOps. Gartner predicted that by 2026, 80% of large software engineering organizations would establish platform teams to build and manage internal developer platforms (IDPs).¹¹

Internal developer platforms will move from collections of CI/CD and monitoring tools to fully integrated, self-service environments that allow application teams to provision infrastructure, deploy services, and access operational capabilities without deep infrastructure expertise.

This changes how engineering organizations scale. The constraint will no longer be infrastructure knowledge. It will be the quality of the internal platform experience. And for organizations adopting AI and multi-cloud at scale, internal platforms become the control point for governance, cost management, and developer experience.

3. AI Governance and Regulatory Compliance Become Delivery Requirements

By 2027–2028, AI compliance will move from a legal concern to a core software delivery requirement. Regulations such as the EU AI Act will force organizations to provide traceability for training data, model behavior, decision logic, and bias testing for systems that impact users.

We expect to see a dedicated ecosystem of tools and services emerge around AI governance, risk, and compliance. This will create a new class of delivery artifacts: AI audit trails, explainability workflows, and model governance pipelines. These capabilities need to be designed into the architecture from the beginning, as retrofitting them later will be significantly more expensive and fragile.
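
To make “AI audit trails” more concrete, the sketch below shows one common way to make a decision log tamper-evident: each entry stores the SHA-256 hash of the previous entry, so editing any past record breaks the chain. The `AuditTrail` class and its record fields (`model_version`, `input_digest`, `decision`) are hypothetical illustrations, not a regulatory schema or a specific product's API.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditTrail:
    """Hypothetical append-only, hash-chained log of model decisions."""
    entries: list = field(default_factory=list)

    def append(self, model_version: str, input_digest: str, decision: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        record = {
            "model_version": model_version,
            "input_digest": input_digest,  # a hash of the input, not the raw data
            "decision": decision,
            "prev_hash": prev_hash,
        }
        # Hash a canonical JSON encoding so the digest is deterministic.
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append("risk-model-1.4", hashlib.sha256(b"applicant-123").hexdigest(), "approve")
trail.append("risk-model-1.4", hashlib.sha256(b"applicant-456").hexdigest(), "refer")
print(trail.verify())  # True
trail.entries[0]["decision"] = "deny"  # simulate tampering with a past record
print(trail.verify())  # False
```

A chain like this only makes tampering detectable, not impossible; a production design would also need durable storage and a way to protect the most recent hash from the same party that writes the log.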

Organizations building AI-powered applications without compliance frameworks will face regulatory penalties and market access restrictions, particularly in regulated industries and European markets.

4. WebAssembly (Wasm) Adoption Accelerates

We predict that WebAssembly will see significant adoption for performance-critical applications, with 25% of new enterprise applications using Wasm by 2028.¹² Its secure, sandboxed, language-agnostic execution model makes it particularly suited for edge computing, client-side processing, and high-performance browser workloads.

The strategic impact is architectural flexibility. Because C++, Rust, and Go can all compile to Wasm, organizations can reuse existing code in web and mobile contexts without rewrites, accelerating development of performance-critical components.

Wasm will not replace containers, but it will become a standard option for specific classes of workloads where startup time, isolation, and cross-platform execution matter. Early adopters are already using Wasm for image processing, cryptographic operations, and real-time data processing where JavaScript performance is insufficient.

5. Quantum-Resistant Cryptography Implementations Begin

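While quantum computers capable of breaking current public-key encryption remain an estimated 5–10 years away, forward-thinking enterprises will begin planning quantum-resistant cryptography transitions in 2026–2027. The driver is data with long confidentiality requirements: financial records, intellectual property, and healthcare data encrypted today that must remain secure for decades. Because adversaries can capture encrypted data now and decrypt it once capable hardware exists, protection has to be in place well before that hardware arrives.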

This isn’t a simple library upgrade. Post-quantum algorithms have larger key sizes, different performance characteristics, and integration requirements that demand application-level architectural changes and multi-year migration planning.

Regulated industries will prioritize systems handling high-value, long-lived data first. The challenge will be balancing forward-looking cryptography upgrades against immediate delivery priorities. Teams will need to implement crypto-agility patterns that allow algorithms to be rotated without platform rewrites.
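
Crypto-agility usually means that every protected artifact records which algorithm produced it, so the active algorithm can be changed through configuration while older artifacts stay verifiable until they are re-protected. The minimal sketch below illustrates that mechanism with message authentication tags. It uses the standard-library HMAC with SHA-256 and SHA3-256 purely as stand-ins, since Python's standard library ships no post-quantum algorithms; the registry, the algorithm IDs, `ACTIVE_ALG`, and the `alg:tag` format are all hypothetical.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical algorithm registry keyed by stable IDs. A real migration would
# register post-quantum schemes here; these HMAC variants only demonstrate rotation.
ALGORITHMS = {
    "hmac-sha256-v1": hashlib.sha256,
    "hmac-sha3-256-v2": hashlib.sha3_256,
}
ACTIVE_ALG = "hmac-sha3-256-v2"  # algorithm used for newly protected data
ACCEPTED_ALGS = {"hmac-sha256-v1", "hmac-sha3-256-v2"}  # still allowed at verification


def protect(key: bytes, message: bytes, alg: Optional[str] = None) -> str:
    """Return 'alg_id:hex_tag' so the algorithm identifier travels with the data."""
    alg = alg or ACTIVE_ALG  # read the active algorithm at call time, not definition time
    tag = hmac.new(key, message, ALGORITHMS[alg]).hexdigest()
    return f"{alg}:{tag}"


def verify(key: bytes, message: bytes, token: str) -> bool:
    """Dispatch on the embedded algorithm ID instead of hard-coding one algorithm."""
    alg, _, tag = token.partition(":")
    if alg not in ACCEPTED_ALGS or alg not in ALGORITHMS:
        return False  # retired or unknown algorithm
    expected = hmac.new(key, message, ALGORITHMS[alg]).hexdigest()
    return hmac.compare_digest(expected, tag)


key = b"demo-key-not-for-production"
legacy = protect(key, b"policy-123", alg="hmac-sha256-v1")
current = protect(key, b"policy-123")
print(verify(key, b"policy-123", legacy), verify(key, b"policy-123", current))  # True True
ACCEPTED_ALGS.discard("hmac-sha256-v1")  # retire the old algorithm by configuration
print(verify(key, b"policy-123", legacy))  # False
```

The design point is that switching algorithms becomes a registry and configuration change plus a background job to re-protect stored data, rather than an edit to every call site.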

How Keyhole Software Helps Organizations Navigate Software Development Trends

For more than 17 years, Keyhole Software has helped enterprise organizations modernize legacy platforms, evaluate emerging technologies, and adopt new capabilities at the point where they deliver measurable business value. Our teams are composed of senior, U.S.-based consultants who lead with architecture, enabling clients to move forward without introducing unnecessary delivery risk.

Our work consistently centers on the intersection of modernization, AI adoption, and secure cloud-native delivery.

Legacy System Modernization and Integration

Modernization of long-lived systems is the foundation that makes every other trend achievable. We specialize in transforming legacy platforms, including COBOL and mainframe-based systems, into architectures that can support cloud deployment, API integration, and AI-driven capabilities. Using phased approaches, we preserve critical business logic, maintain operational continuity, and expose core functionality as services that can evolve over time.

AI, RAG, and Agentic Delivery

We deliver governed AI solutions that operate inside enterprise security boundaries, including retrieval-augmented generation systems that allow large language models to interact with internal data without exposing sensitive information externally. AI is implemented as an acceleration layer within architect-led teams, increasing throughput while maintaining enterprise control.
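
A key control in this kind of retrieval-augmented generation system is that retrieval is filtered by the caller's permissions before anything reaches the model's prompt. The minimal sketch below shows that ordering using a deliberately naive keyword-overlap score in place of vector search. The documents, group names, and `build_prompt` helper are hypothetical, and no model is actually called.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to read this document


# Hypothetical internal corpus with per-document access groups.
CORPUS = [
    Doc("hr-001", "Parental leave policy covers sixteen weeks", frozenset({"hr", "all-staff"})),
    Doc("fin-007", "Q3 acquisition budget and target valuation", frozenset({"finance-execs"})),
    Doc("eng-042", "Deployment policy requires two approvals", frozenset({"engineering", "all-staff"})),
]


def score(query: str, text: str) -> int:
    """Naive relevance: number of shared lowercase words (a stand-in for embeddings)."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def retrieve(query: str, user_groups: set, k: int = 2) -> list:
    # Enforce access control BEFORE ranking, so restricted text never enters the prompt.
    visible = [d for d in CORPUS if d.allowed_groups & user_groups]
    ranked = sorted(visible, key=lambda d: score(query, d.text), reverse=True)
    return [d for d in ranked[:k] if score(query, d.text) > 0]


def build_prompt(query: str, user_groups: set) -> str:
    """Assemble the context that would be sent to a model (hypothetical format)."""
    context = "\n".join(f"[{d.doc_id}] {d.text}" for d in retrieve(query, user_groups))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


print(build_prompt("what is the budget policy", {"all-staff"}))
```

Filtering before ranking, rather than asking the model to ignore restricted passages, keeps the enforcement decision in ordinary code that can be tested and audited.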

For organizations moving beyond AI-assisted development, we implement agentic delivery patterns that automate multi-step implementation, testing, and documentation workflows while keeping architectural decisions and security-sensitive logic under senior oversight. This increases throughput without transferring long-term quality risk to ungoverned automation.

Cloud-Native and Hybrid Platform Architecture

Cloud adoption follows modernization, not the reverse. We help clients move to AWS and Azure through phased, hybrid strategies that keep core systems stable while new capabilities scale in cloud-native environments. Identity-based access, service-to-service authentication, and policy enforcement are built into these platforms from the start, aligning cloud architecture with zero-trust operating models and regulatory requirements. Architectures are designed for portability where it creates leverage and for managed services where they provide clear operational or economic advantage.
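
Policy enforcement in a zero-trust platform generally means every service-to-service call is checked against explicit rules tied to the caller's verified identity, and anything not explicitly allowed is denied. The minimal sketch below shows that default-deny evaluation in isolation. It assumes the caller's identity has already been authenticated upstream (for example, through mutual TLS); the SPIFFE-style identity strings, the `example.internal` trust domain, and the rule format are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    caller: str          # verified workload identity of the calling service
    target: str          # service being called
    methods: frozenset   # HTTP methods this caller may use on the target


# Hypothetical allow-list; identities follow the SPIFFE URI shape for illustration.
POLICY = [
    Rule("spiffe://example.internal/ns/web/sa/frontend", "orders", frozenset({"GET", "POST"})),
    Rule("spiffe://example.internal/ns/batch/sa/reporting", "orders", frozenset({"GET"})),
]


def is_allowed(caller_identity: Optional[str], target: str, method: str) -> bool:
    """Default deny: a call passes only if a rule matches identity, target, and method."""
    if not caller_identity:
        return False  # unauthenticated callers are never trusted, even inside the network
    return any(
        rule.caller == caller_identity
        and rule.target == target
        and method.upper() in rule.methods
        for rule in POLICY
    )


reporting = "spiffe://example.internal/ns/batch/sa/reporting"
print(is_allowed(reporting, "orders", "GET"))
print(is_allowed(reporting, "orders", "DELETE"))
```

Network location never appears in the decision; only the authenticated identity, the target, and the operation do, which is the core shift a zero-trust model requires.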

Example: AI-Accelerated Platform Modernization in Insurance

For a repeat insurance client, Keyhole led the architecture and delivery framework for a full platform replacement. By combining phased modernization with an AI-accelerated, architect-led delivery model that relied heavily on intent-driven development, the team reduced the expected 18–24 month timeline to approximately five months while maintaining production stability. The new cloud-native platform removed legacy scaling constraints, improved performance, and enabled the introduction of AI-driven capabilities without another rebuild.

A Delivery Model Aligned to Technology Roadmaps

The primary risk for most enterprises is not choosing the wrong technology; it’s adopting the right technology on top of an architecture that cannot support it.

Keyhole helps organizations sequence modernization, cloud adoption, AI integration, and security transformation so that each investment enables the next. The objective is not to chase individual trends, but to build platforms that can absorb new capabilities without repeated replatforming.

Organizations planning their 2026–2028 technology roadmaps work with Keyhole to translate industry adoption data into executable architecture, combining hands-on delivery experience with long-term perspective on which technologies create durable advantage and which introduce temporary complexity.

Requesting More Information on Software Development Trends

Organizations that align trend adoption to a clear delivery strategy will outperform those chasing individual tools.

If you would like to discuss how these software development trends apply to your organization’s technology roadmap, or learn more about Keyhole’s custom software development services, contact our team.

References

- Gartner Identifies the Top Strategic Technology Trends for 2026

- The State of AI in 2025

- 2025 Stack Overflow Developer Survey

- DORA: State of AI-assisted Software Development 2025

- GitHub Copilot Statistics & Adoption Trends [2025]

- 100+ Cloud Computing Statistics for 2026

- CNCF and SlashData Survey Finds Cloud Native Ecosystem Surges to 15.6M Developers

- Gartner Forecasts Low Code/No Code Platform Market for 2026

- Why 81% of organizations plan to adopt zero trust by 2026

- 80% of Software Engineers Will Keep Working Remotely

- Platform Engineering in 2026: Why DIY Is Dead

- WebAssembly gaining adoption ‘behind the scenes’ as technology advances

Last updated: March 10, 2026


About Keyhole Software

Expert team of software developer consultants solving complex software challenges for U.S. clients.