To demonstrate what governed agentic AI development looks like in practice, we implemented an autonomous delivery loop and used it to build a complete full-stack timesheet application in 19 traceable iterations. The goal was not to ship a timesheet product. The goal was to validate a repeatable, enterprise-ready execution model where every increment of progress is test-gated, observable, and auditable.

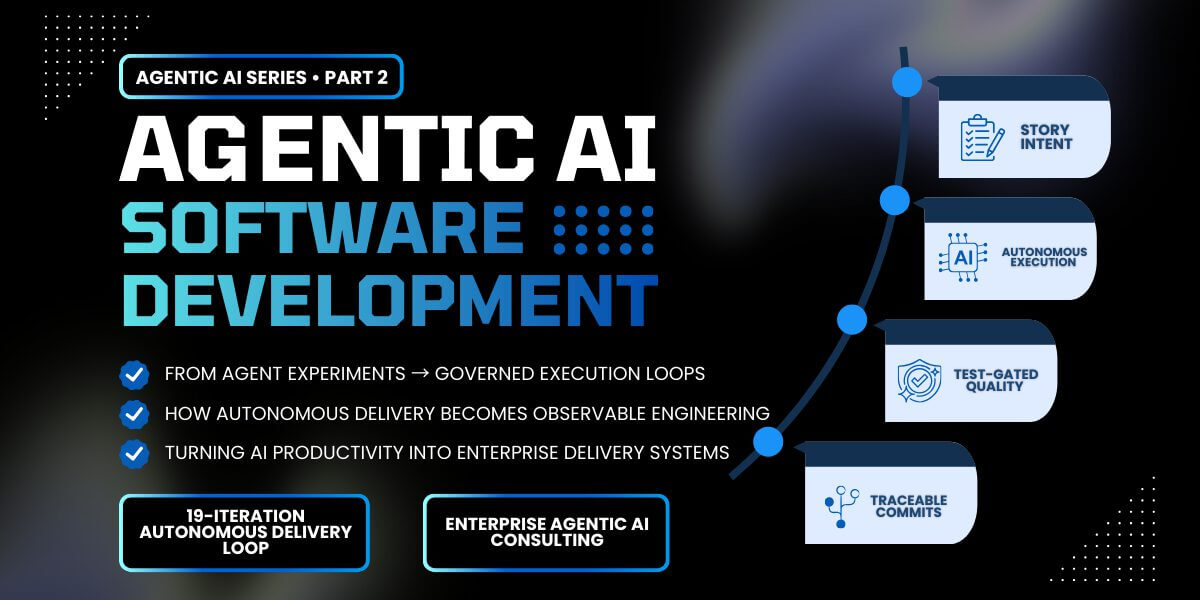

Agentic AI development is a software delivery model where autonomous agents execute development tasks inside a governed SDLC, operating within architectural guardrails, dependency-ordered backlogs, and test-gated workflows.

In our previous article on agentic AI software development in the enterprise, we introduced a governed delivery model where autonomous agents execute work inside architectural guardrails and test-gated SDLC workflows. This post demonstrates what that model looks like when applied to a real implementation.

In this post, you’ll see:

- How an autonomous AI delivery loop executes work in a controlled enterprise SDLC

- What 1:1 story → test → commit traceability looks like in practice

- How architectural conventions and dependency-ordered backlogs govern autonomous execution

- How AI-accelerated development can remain observable, test-gated, and auditable

This reference implementation provides a transparent, end-to-end example of agentic AI development operating as a controlled delivery system. It combines repository-integrated autonomous execution, enforced architectural conventions, and a full story-to-commit audit trail.

Key characteristics of the reference implementation on GitHub include:

- 19 dependency-ordered user stories

- 1:1 story → test → commit traceability

- Enforced architectural conventions

- Fully test-gated execution

We defined the architecture and conventions, sequenced work in a dependency-ordered backlog, and let a persistent delivery agent execute one story at a time with tests acting as the quality gate. In the reference implementation, execution was governed by three control artifacts that enforced sequencing, standards, and repeatability.

From Concept to Execution

In Part 1 of this series, we introduced the concept of governed agentic AI development, a delivery model where autonomous agents execute work inside architectural guardrails, dependency-ordered backlogs, and test-gated workflows.

This article shows what happens when that delivery model is allowed to execute against real development work.

To evaluate the model in practice, we implemented an autonomous delivery loop and executed it against a dependency-ordered backlog of real development work. The result is a transparent reference implementation showing how agentic AI development behaves inside a governed enterprise SDLC.

The system was intentionally designed to keep execution simple and observable. We defined the architecture and development conventions up front, sequenced work in a dependency-ordered backlog, and allowed a persistent delivery agent to execute one story at a time.

Three control artifacts governed this process:

- Architectural conventions

- The dependency-ordered backlog

- The execution prompt used by the delivery loop

Together, these artifacts ensured that autonomous execution remained predictable, test-gated, and aligned with architectural standards.

The Autonomous Agentic AI Development Loop in Practice

At the center of the system is a persistent autonomous execution loop that repeatedly evaluates the backlog, implements the next unit of work, validates the result through automated tests, and commits the completed change.

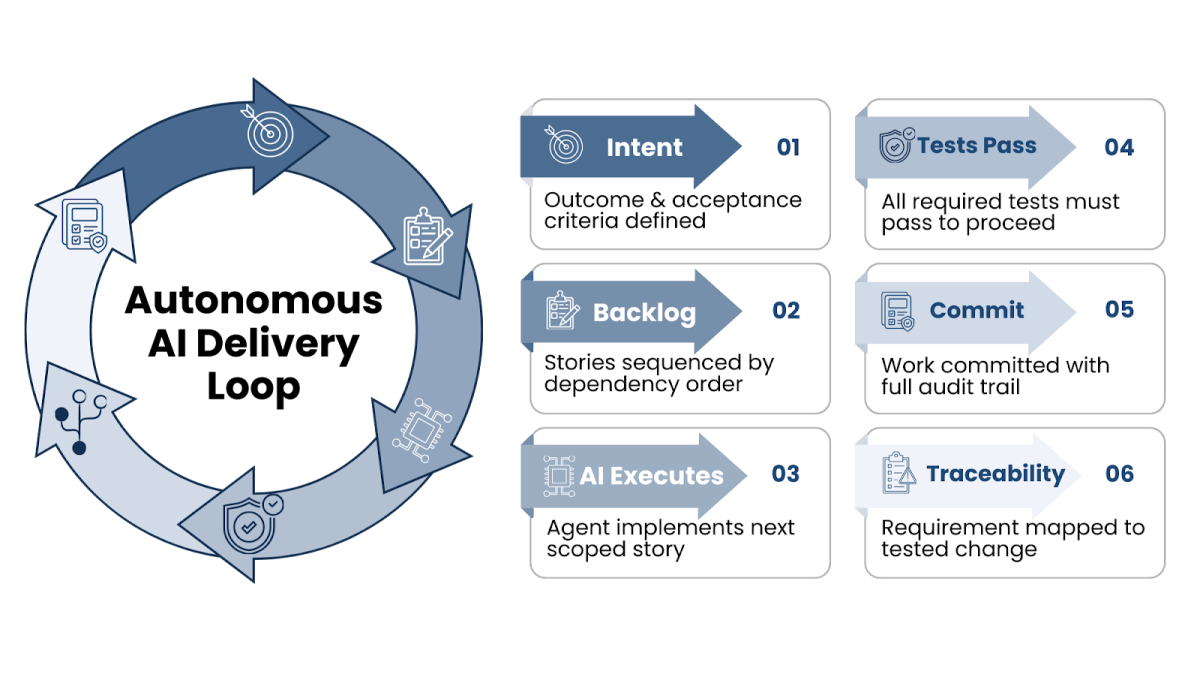

Autonomous agentic delivery loop: a persistent execution cycle where dependency-ordered work, test-gated validation, and commit-level traceability produce observable, governed progress.

In practice, the loop followed a fixed and repeatable workflow:

- Fixed architecture and conventions defined how the code had to be structured

- A dependency-ordered backlog controlled what could be built next

- The delivery agent executed one story at a time inside those guardrails

- Tests acted as mandatory quality gates; failing work was never committed

- Each iteration produced a small, reviewable, auditable change

This created a measurable unit of progress for every cycle: a completed requirement, a passing quality check, and a traceable commit.

Rather than being tracked through status updates or documentation artifacts, progress becomes directly visible in the repository through working software, passing tests, and commit history.

This structure reflects the principles of intent-driven development, where clearly defined engineering intent guides autonomous implementation rather than relying on ad hoc prompting.

The Three Control Artifacts That Governed Execution

The autonomous loop was driven by three core control artifacts that enforced sequencing, standards, and repeatability. These artifacts are what make governed AI delivery repeatable rather than experimental. This type of structured execution model closely aligns with modern platform engineering practices, where architectural standards, delivery workflows, and automation are codified into the development platform itself.

Architectural Conventions as the Control Layer

Project conventions defined the architecture and development standards. The agent operated inside a predefined technical stack, layered design, DTO patterns, validation rules, and testing expectations. This ensured every implementation followed the same patterns a senior engineering team would enforce in a traditional delivery model.

Dependency-Ordered Backlog for Predictable Delivery

User stories were sequenced in dependency order and executed one at a time. The agent could not skip ahead, invent new work, or partially complete a feature. This preserved delivery flow and made progress observable at any moment.
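To make the ordering rule concrete, here is a minimal Python sketch. It assumes each backlog entry lists the IDs of the stories it depends on. The `Story` class, its fields, and the sample timesheet stories are hypothetical and are not taken from the reference implementation. `validate_order` rejects a backlog in which a story appears before one of its dependencies, and `next_story` always returns the first incomplete story, so the agent has no way to skip ahead.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Story:
    """Hypothetical backlog entry; field names are illustrative only."""

    story_id: str
    title: str
    depends_on: list[str] = field(default_factory=list)
    done: bool = False


def validate_order(backlog: list[Story]) -> None:
    """Raise if a story appears before (or depends on a missing) dependency."""
    seen: set[str] = set()
    for story in backlog:
        missing = [dep for dep in story.depends_on if dep not in seen]
        if missing:
            raise ValueError(f"{story.story_id} is ordered before {missing}")
        seen.add(story.story_id)


def next_story(backlog: list[Story]) -> Story | None:
    """Return the first incomplete story; later stories are never picked early."""
    validate_order(backlog)
    for story in backlog:
        if not story.done:
            return story
    return None  # every story is complete


# Illustrative timesheet backlog (not the reference implementation's stories).
backlog = [
    Story("S1", "Timesheet entity and repository"),
    Story("S2", "Timesheet REST endpoints", depends_on=["S1"]),
    Story("S3", "Timesheet entry form", depends_on=["S2"]),
]
backlog[0].done = True
print(next_story(backlog).story_id)  # prints S2
```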

The Persistent Execution Loop

On every iteration, the agent followed the same cycle: locate the next story, implement it according to the standards, run the tests, mark it complete, and commit. If the tests failed, the story was not done.

This is how AI moves from isolated developer productivity gains to a scalable enterprise software delivery capability.
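The per-iteration cycle described above can be modeled in a few lines of Python. This is an illustrative sketch, not the agent used in the reference implementation: `run_iteration`, `run_loop`, the iteration budget, and the commit subject format are all assumptions. `implement`, `run_tests`, and `commit` are injected callables that stand in for the agent's code changes, the project's test suites, and `git commit`. The property that matters is that a failing test run returns early, so nothing is committed and the same story is picked up again on the next cycle.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Story:
    story_id: str  # hypothetical identifier such as "S1"
    title: str
    done: bool = False


def run_iteration(
    backlog: list[Story],
    implement: Callable[[Story], None],
    run_tests: Callable[[], bool],
    commit: Callable[[str], None],
) -> str:
    """One cycle: locate the next story, implement, test, mark complete, commit."""
    story = next((s for s in backlog if not s.done), None)
    if story is None:
        return "backlog complete"
    implement(story)  # stand-in for the agent editing code under the conventions
    if not run_tests():
        # Failing tests: no commit, and the story stays open for the next cycle.
        return f"{story.story_id}: tests failing"
    story.done = True
    commit(f"feat: {story.title} [{story.story_id}]")
    return f"{story.story_id}: committed"


def run_loop(
    backlog: list[Story],
    implement: Callable[[Story], None],
    run_tests: Callable[[], bool],
    commit: Callable[[str], None],
    max_iterations: int = 50,
) -> list[str]:
    """Repeat the cycle until the backlog is done or the iteration budget runs out."""
    outcomes: list[str] = []
    for _ in range(max_iterations):
        outcome = run_iteration(backlog, implement, run_tests, commit)
        outcomes.append(outcome)
        if outcome == "backlog complete":
            break
    return outcomes


simulated_results = iter([False, True, True])  # first test run fails, then passes
commits: list[str] = []
backlog = [Story("S1", "add timesheet entity"), Story("S2", "add timesheet API")]
outcomes = run_loop(
    backlog,
    implement=lambda story: None,
    run_tests=lambda: next(simulated_results),
    commit=commits.append,
)
print(outcomes)
# ['S1: tests failing', 'S1: committed', 'S2: committed', 'backlog complete']
```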

Governance Guardrails: How Agentic AI Teams Keep Control

One of the most common concerns we hear from enterprise teams evaluating agentic AI software development is control. Speed is easy to demonstrate in a prototype. Maintaining governance, architectural integrity, and auditability at that speed is the real challenge.

In this model, the guardrails were not added after the fact. They were designed into the execution loop from the beginning.

Tests as Mandatory Quality Gates

Tests acted as mandatory quality gates.

No user story was considered complete until the relevant backend and frontend tests passed. This ensured that velocity never bypassed validation.
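A gate that requires both layers to pass could look like the following minimal sketch. `quality_gate` and the suite names are hypothetical. It assumes each suite can be invoked as a command whose exit code signals success, which is the usual convention for test runners. The demo uses Python one-liners as stand-ins so it runs without a real backend or frontend project.

```python
from __future__ import annotations

import subprocess
import sys


def quality_gate(suites: dict[str, list[str]]) -> tuple[bool, dict[str, int]]:
    """Run every suite; the story may be marked done only if all exit with 0."""
    if not suites:
        raise ValueError("at least one test suite is required")
    exit_codes: dict[str, int] = {}
    for name, argv in suites.items():
        # Run each suite even after a failure so the report covers both layers.
        completed = subprocess.run(argv, capture_output=True, text=True)
        exit_codes[name] = completed.returncode
    return all(code == 0 for code in exit_codes.values()), exit_codes


# Stand-in commands so the sketch runs anywhere; a real project would plug in
# its own backend and frontend test commands here.
demo_suites = {
    "backend": [sys.executable, "-c", "raise SystemExit(0)"],
    "frontend": [sys.executable, "-c", "raise SystemExit(1)"],
}
passed, detail = quality_gate(demo_suites)
print(passed, detail)  # False {'backend': 0, 'frontend': 1}
```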

Commit History as a Built-In Audit Trail

Commit history became a delivery control mechanism.

Each completed story produced a conventional commit with a direct, one-to-one relationship between requirement and implementation. That created a built-in audit trail that can be reviewed at any point in time.
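As an illustration of the one-to-one link, the sketch below formats a commit message in the Conventional Commits structure (a `type(scope): description` subject plus an optional footer) and records the story ID in a `Refs:` footer. The footer token, the allowed commit types, and the story ID format are assumptions chosen for the example; the reference implementation may encode the story differently.

```python
from __future__ import annotations

import re

# Conventional Commits subject: type(optional scope): description.
# The allowed types are an illustrative subset, not a project standard.
SUBJECT = re.compile(r"^(feat|fix|test|refactor|docs|chore)(\([\w-]+\))?: \S.*$")
FOOTER = "Refs: "  # hypothetical footer token linking a commit to a story


def story_commit_message(story_id: str, subject: str) -> str:
    """Build a commit message whose footer links it to exactly one story."""
    if not SUBJECT.match(subject):
        raise ValueError(f"not a Conventional Commits subject: {subject!r}")
    return f"{subject}\n\n{FOOTER}{story_id}"


def referenced_story(message: str) -> str | None:
    """Return the single story a commit references, or None if zero or several."""
    refs = [line[len(FOOTER):] for line in message.splitlines() if line.startswith(FOOTER)]
    return refs[0] if len(refs) == 1 else None


msg = story_commit_message("S7", "feat(timesheet): add weekly totals endpoint")
print(msg)
print(referenced_story(msg))  # prints S7
```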

Permission-Scoped Autonomous Execution

Execution permissions were intentionally scoped.

The agent was allowed to run only the commands required to build, test, and commit. That constraint prevented uncontrolled behavior while still enabling fully autonomous progress.
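One way to express that scoping is an allowlist of command prefixes checked before anything runs. The sketch assumes a Maven wrapper backend and an npm frontend purely for illustration; `ALLOWED_PREFIXES` and `is_permitted` are hypothetical names, and the actual permission configuration in the reference implementation may differ. Permitted commands should still be executed as argument lists without a shell, so that shell metacharacters inside arguments have no effect.

```python
from __future__ import annotations

import shlex

# Hypothetical allowlist of argv prefixes covering build, test, and commit only.
ALLOWED_PREFIXES: list[list[str]] = [
    ["./mvnw", "package"],
    ["./mvnw", "test"],
    ["npm", "run", "build"],
    ["npm", "test"],
    ["git", "add"],
    ["git", "commit"],
]

# Standalone shell operators are rejected outright as an extra guard.
SHELL_OPERATORS = {";", "&&", "||", "|", ">", ">>", "<", "&"}


def is_permitted(command: str) -> bool:
    """Allow a command only if its argv starts with an allowlisted prefix."""
    try:
        argv = shlex.split(command)
    except ValueError:
        return False  # e.g. unbalanced quotes: reject rather than guess
    if not argv or any(token in SHELL_OPERATORS for token in argv):
        return False
    return any(argv[: len(prefix)] == prefix for prefix in ALLOWED_PREFIXES)


for cmd in ["npm test", 'git commit -m "feat: add timesheet API"', "git push origin main"]:
    print(f"{cmd!r}: {is_permitted(cmd)}")
```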

Making Governance Executable

The result is a model where:

- Every capability is traceable to a requirement

- Every change is tied to a passing quality check

- Every iteration is reviewable in the commit history
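These properties can be checked mechanically. The following sketch uses hypothetical names throughout. It assumes you have already extracted the story ID tagged on each test and referenced by each commit (for example, from a commit footer) and reports any story or commit that breaks the story → test → commit mapping.

```python
from __future__ import annotations

from collections import Counter


def audit_traceability(
    story_ids: list[str],
    test_refs: list[str],
    commit_refs: list[str],
) -> dict[str, list[str]]:
    """Flag anything that breaks story -> test -> commit traceability."""
    tests = Counter(test_refs)      # story ID -> number of tests tagged with it
    commits = Counter(commit_refs)  # story ID -> number of commits referencing it
    known = set(story_ids)
    return {
        "missing_test": [s for s in story_ids if tests[s] == 0],
        "missing_commit": [s for s in story_ids if commits[s] == 0],
        "multiple_commits": [s for s in story_ids if commits[s] > 1],
        "untraced_commits": sorted(set(commits) - known),
        "untraced_tests": sorted(set(tests) - known),
    }


report = audit_traceability(
    ["S1", "S2", "S3"],
    test_refs=["S1", "S2", "S3"],
    commit_refs=["S1", "S2", "S2", "S9"],
)
print(report)
```

With this sample data, the report flags `S3` as having no commit, `S2` as split across two commits, and `S9` as a commit with no matching story.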

What 19 Iterations Actually Produced

The reference implementation demonstrates the delivery pattern in a fully transparent form:

- 19 dependency-ordered user stories

- 1:1 story → test → commit traceability

- Enforced architectural conventions

- Fully test-gated execution

This creates a working example of governed AI delivery that can be applied directly to enterprise legacy modernization initiatives, where AI-accelerated delivery must still maintain architectural discipline and traceability.

Real-World Impact: From Experiment to Enterprise Modernization

In enterprise modernization efforts, we consistently see the same challenge: legacy systems slow iteration, documentation is outdated, and delivery cycles stretch into months. Introducing AI without structure increases risk. Introducing AI within a governed AI delivery model compresses timelines.

In one modernization effort for a returning insurance-industry client, a program originally estimated at 18–24 months was delivered in approximately five months by combining architectural leadership with agentic, test-gated execution inside a governed SDLC. The key was not simply using AI; it was designing the delivery system around it.

On recent engagements, we have seen:

- Significant reductions in upfront specification effort

- Faster feedback loops between product and engineering

- Clearer traceability from requirement to release

- Improved knowledge transfer through structured commit history and artifacts

Enterprise AI adoption often stalls when organizations add tools but keep the same SDLC. Without changes to governance, sequencing, and quality gates, AI becomes a local productivity boost instead of a delivery multiplier. A structured AI-accelerated SDLC turns AI into a scalable modernization capability that maintains compliance, architectural integrity, and long-term maintainability.

This is the model we are now implementing in enterprise AI modernization, internal platform engineering, and net-new product delivery engagements.

Key takeaway: Agentic AI development becomes enterprise-ready only when autonomous execution operates inside architectural guardrails, dependency-ordered backlogs, and test-gated delivery systems.

Why This Still Requires Senior Consulting Leadership

Autonomous execution does not eliminate the need for senior engineers. Successful enterprise AI development initiatives still depend on experienced architects to design the systems, guardrails, and delivery models that guide autonomous execution. Governed AI delivery shifts their impact from writing code to designing that delivery system.

Architectural judgment, domain modeling, integration strategy, platform alignment, and governance design determine whether AI-accelerated delivery produces a maintainable enterprise system or a fast-moving prototype that cannot scale.

This is where experienced consulting teams create the highest value: defining the guardrails, sequencing delivery intentionally, and aligning execution to long-term modernization strategy.

Series Note

This post is part of an ongoing series exploring how agentic AI delivery systems are changing enterprise software development. In the first article, we introduced the concept of governed agentic SDLCs, where autonomous agents operate within architectural guardrails, test-gated workflows, and observable delivery pipelines.

If you haven’t read it yet, Part 1 covers the foundations of this model and why enterprise teams need structured governance when introducing autonomous development:

What Is Agentic AI Software Development in the Enterprise? →

In our next article, Part 3, we walk through how the autonomous execution loop runs in practice and what it looks like when an agent executes real development tasks inside a governed SDLC:

Agentic AI Delivery in Practice: Autonomous Enterprise Execution with the Ralph Loop →

More From Jaime Niswonger

About Keyhole Software

Expert team of software developer consultants solving complex software challenges for U.S. clients.