Remember when Netflix first came out with its suite of distributed components? It included Eureka for service discovery, Hystrix for circuit breaking, and Zuul for intelligent routing. Netflix was running on AWS infrastructure back then, but AWS did not yet offer the tooling Netflix needed to manage its microservice ecosystem. The industry has since come to describe what the Netflix components do within the larger context of a service mesh.

AWS recently introduced App Mesh, a highly available set of services that integrate with the AWS ecosystem and provide the capabilities Netflix was looking for back in the day.

In this post, we introduce AWS App Mesh and walk through a quick tutorial on bringing a reference microservice into an AWS App Mesh.

App Mesh

AWS App Mesh is a tool that provides consistent visibility and network traffic controls for every microservice in an application. Its goal is to use simple and consistent abstractions to make it easier to manage microservices deployed across accounts, clusters, and container orchestration tools.

It is implemented as an AWS service, along with “sidecar proxies” based on the open source Envoy proxy that are packaged alongside each microservice. In essence, App Mesh separates the logic needed for monitoring and controlling communications into a proxy that runs next to every microservice.

In the diagram below (credit: Introducing AWS App Mesh), the App Mesh/Envoy sidecar is represented in orange. The microservice code does not need to change in order to participate in the Mesh. The integration with App Mesh is done via service configuration.

Image Credit: Introducing AWS App Mesh

App Mesh supports microservice applications that use service discovery naming for their components. It works independently of any particular container orchestration system and currently works with both Kubernetes and Amazon ECS.

Deeper Dive

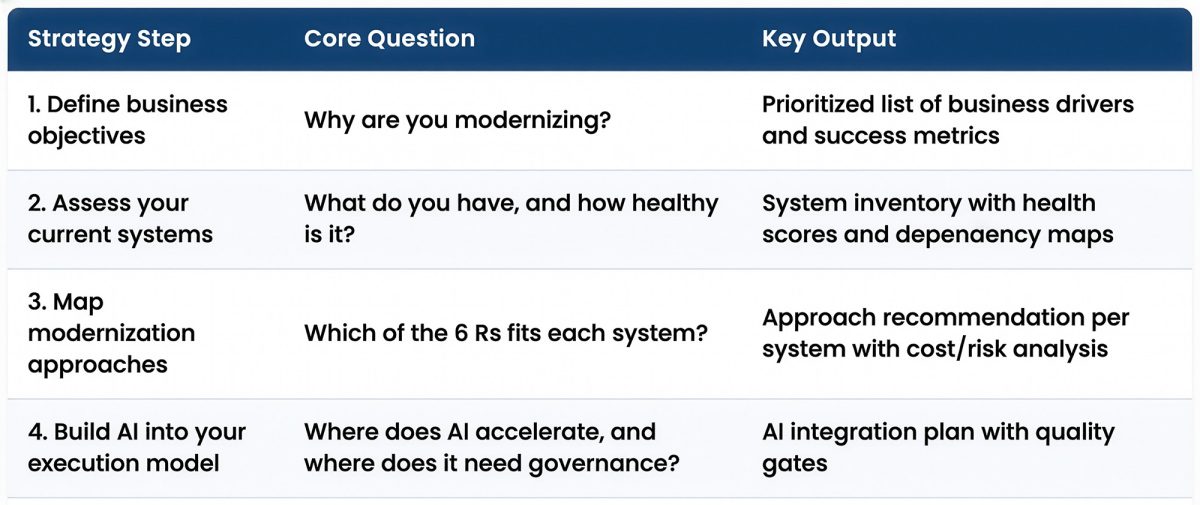

There are five constructs upon which App Mesh is built:

- Service Mesh

- Virtual Service

- Virtual Node

- Virtual Router

- Route

Image Credit: Introducing AWS App Mesh

A Service Mesh is a logical boundary for network traffic between the services that reside within it. After you create your Service Mesh, you can create Virtual Services, Virtual Nodes, Virtual Routers, and Routes to distribute traffic between the applications in your mesh.

A Virtual Service is an abstraction of a real service that is provided either directly by a virtual node or indirectly by means of a virtual router. Dependent services call your virtual service by its virtualServiceName, and those requests are routed to the virtual node or virtual router that is specified as the provider for the virtual service.

A Virtual Node acts as a logical pointer to a particular task group, such as a Kubernetes deployment or an Amazon ECS service.

Any inbound traffic that your virtual node expects should be specified as a Listener. Any outbound traffic that your virtual node expects to reach should be specified as a Backend.

Virtual Routers handle traffic for one or more Virtual Services within your mesh. After you create a Virtual Router, you can create and associate Routes for your Virtual Router that direct incoming requests to different Virtual Nodes.

A Route is associated with a Virtual Router. It is used to match requests for a Virtual Router and distribute traffic accordingly to its associated Virtual Nodes.
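To make the relationships between the five constructs concrete, here is a minimal in-memory sketch in Python. It is not the App Mesh API: the class names simply mirror the constructs described above, the service and node names are hypothetical, and choosing the route with the longest matching prefix is an assumption made for this illustration rather than a statement about how App Mesh orders routes.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Union


@dataclass
class VirtualNode:
    name: str
    hostname: str  # DNS name of the real task group (hypothetical values below)


@dataclass
class Route:
    name: str
    prefix: str              # URI path prefix this route matches
    targets: Dict[str, int]  # virtual node name -> weight


@dataclass
class VirtualRouter:
    name: str
    routes: List[Route] = field(default_factory=list)

    def match(self, path: str) -> Optional[Route]:
        # Assumption for this sketch: the longest matching prefix wins.
        candidates = [r for r in self.routes if path.startswith(r.prefix)]
        return max(candidates, key=lambda r: len(r.prefix), default=None)


@dataclass
class VirtualService:
    name: str
    provider: Union[VirtualNode, VirtualRouter]


@dataclass
class Mesh:
    name: str
    services: Dict[str, VirtualService] = field(default_factory=dict)

    def candidate_nodes(self, service_name: str, path: str) -> List[str]:
        """Return the virtual nodes that could serve a request."""
        provider = self.services[service_name].provider
        if isinstance(provider, VirtualNode):
            return [provider.name]  # provided directly by a node
        route = provider.match(path)  # provided indirectly via a router
        return sorted(route.targets) if route else []


mesh = Mesh("demo-mesh")
router = VirtualRouter(
    "employee-router",
    [Route("all-traffic", "/", {"employee-v1": 90, "employee-v2": 10})],
)
mesh.services["employee.demo.local"] = VirtualService("employee.demo.local", router)
mesh.services["db.demo.local"] = VirtualService(
    "db.demo.local", VirtualNode("db-node", "db.demo.local")
)

print(mesh.candidate_nodes("employee.demo.local", "/employees/42"))
print(mesh.candidate_nodes("db.demo.local", "/"))
```

The first lookup goes through the router and its route, while the second resolves straight to a node, which is the "directly or indirectly" distinction in the Virtual Service definition.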

(Want more specifics? Read the official documentation here.)

Bringing a microservice into an AWS App Mesh

Next, we’ll go through bringing a microservice into an App Mesh. Our reference microservice is the KHS Employee service. It is important to note that the service does not require any changes, or even to be aware that it is deployed in an App Mesh.

First, the service is built into a Docker container and stored in a Docker registry, as usual. For AWS, the Docker container would likely be stored in Elastic Container Registry (ECR).

Next, create an Elastic Container Service (ECS) service.

In the course of creating the ECS service, the Docker image will be decorated with the configuration required by AWS. This includes:

- an ECS cluster, spread across multiple availability zones,

- the CPU/memory to use with the Docker image,

- any IAM permissions to be granted to the service, and

- data volumes to be mounted to the Docker image.

Once the ECS service has been created, the Docker image is highly available, able to scale horizontally, and integrated with CloudWatch.
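As a rough illustration of that "decoration," the sketch below builds a task definition as a plain Python dictionary. The field names follow the ECS task definition format as I understand it, but every value (family name, image URI, role ARN, port, volume name) is a hypothetical placeholder, not something taken from the KHS Employee service. The cluster and its availability zones are chosen when the ECS service itself is created, so they do not appear here.

```python
import json


def employee_task_definition(image_uri: str, task_role_arn: str) -> dict:
    """Build a task-definition style payload for a single container."""
    return {
        "family": "employee-service",  # hypothetical name
        "networkMode": "awsvpc",
        "cpu": "256",                  # CPU units for the task
        "memory": "512",               # memory in MiB for the task
        "taskRoleArn": task_role_arn,  # IAM permissions granted to the service
        "volumes": [{"name": "employee-data"}],
        "containerDefinitions": [
            {
                "name": "employee",
                "image": image_uri,
                "essential": True,
                "portMappings": [{"containerPort": 8080, "protocol": "tcp"}],
                # Mount the data volume declared above into the container.
                "mountPoints": [
                    {"sourceVolume": "employee-data", "containerPath": "/data"}
                ],
            }
        ],
    }


task_def = employee_task_definition(
    image_uri="example/employee:latest",  # placeholder image
    task_role_arn="arn:aws:iam::123456789012:role/employee-task-role",  # placeholder ARN
)
print(json.dumps(task_def, indent=2))
```

A dictionary like this could be passed to whatever tooling you use to register task definitions. It is built here without calling AWS so the shape is easy to inspect.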

Finally, integrate the ECS Service with the App Mesh.

To integrate an ECS Service into App Mesh, create an App Mesh Virtual Node and provide the link to the ECS Service. Also, configure any ingress (Listeners) and egress (Backends) collaborators for the ECS Service. A Listener typically just declares the port and protocol on which the service accepts traffic, such as an HTTP Listener. Backends are typically other ECS Services (microservices) or databases. Virtual Routers and Routes can be configured as needed to complete the configuration.
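A Virtual Node definition for this step might look roughly like the following. The dictionary mirrors the shape of the App Mesh virtual node spec as I understand it (listeners, service discovery, backends). The mesh name, node name, hostname, port, and backend service name are all hypothetical. Nothing here calls AWS; the function only assembles the request payload.

```python
from typing import List


def virtual_node_request(
    mesh_name: str,
    node_name: str,
    hostname: str,
    port: int,
    backends: List[str],
) -> dict:
    """Assemble a create-virtual-node style request as a plain dict."""
    return {
        "meshName": mesh_name,
        "virtualNodeName": node_name,
        "spec": {
            # Ingress: the port and protocol the service listens on.
            "listeners": [{"portMapping": {"port": port, "protocol": "http"}}],
            # The link back to the real service, via its DNS name.
            "serviceDiscovery": {"dns": {"hostname": hostname}},
            # Egress: the virtual services this node is expected to call.
            "backends": [
                {"virtualService": {"virtualServiceName": name}}
                for name in backends
            ],
        },
    }


node_request = virtual_node_request(
    mesh_name="demo-mesh",
    node_name="employee-v1",
    hostname="employee.demo.local",
    port=8080,
    backends=["department.demo.local"],
)
print(node_request["spec"])
```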

Once completed, the ECS Service's task definition will include the Envoy sidecar container required to handle App Mesh communication.
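The sketch below shows what that modification could look like when expressed as a change to a task definition dictionary. The proxy configuration properties and the APPMESH_VIRTUAL_NODE_NAME environment variable follow the pattern I recall from AWS's published examples, so treat the specific values (ports 15000 and 15001, UID 1337, the ignored IPs) as assumptions to verify against the current documentation. The Envoy image URI is deliberately left as a placeholder, and the mesh and node names are hypothetical.

```python
import copy


def with_envoy_sidecar(
    task_def: dict,
    mesh_name: str,
    virtual_node_name: str,
    envoy_image: str,
    app_port: int,
) -> dict:
    """Return a copy of task_def with an Envoy sidecar and proxy config added."""
    td = copy.deepcopy(task_def)  # leave the caller's definition untouched
    td["containerDefinitions"].append(
        {
            "name": "envoy",
            "image": envoy_image,
            "essential": True,
            "user": "1337",
            "environment": [
                {
                    # Tells the proxy which Virtual Node it represents.
                    "name": "APPMESH_VIRTUAL_NODE_NAME",
                    "value": f"mesh/{mesh_name}/virtualNode/{virtual_node_name}",
                }
            ],
        }
    )
    td["proxyConfiguration"] = {
        "type": "APPMESH",
        "containerName": "envoy",
        "properties": [
            {"name": "IgnoredUID", "value": "1337"},
            {"name": "AppPorts", "value": str(app_port)},
            {"name": "ProxyIngressPort", "value": "15000"},
            {"name": "ProxyEgressPort", "value": "15001"},
            {"name": "EgressIgnoredIPs", "value": "169.254.170.2,169.254.169.254"},
        ],
    }
    return td


base_task_def = {
    "family": "employee-service",
    "containerDefinitions": [{"name": "employee", "image": "example/employee:latest"}],
}
meshed_task_def = with_envoy_sidecar(
    base_task_def,
    mesh_name="demo-mesh",
    virtual_node_name="employee-v1",
    envoy_image="<envoy-image-uri>",  # placeholder, not a real image reference
    app_port=8080,
)
print([c["name"] for c in meshed_task_def["containerDefinitions"]])
```

Note that the application container's own entry is unchanged, which is the point made earlier: the service does not need to know it is running in a mesh.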

How AWS Implemented the Netflix Components

Coming full circle, here is how AWS implemented the Netflix components in the core infrastructure:

Eureka / service discovery: each Virtual Node must identify itself via a local DNS name which is referenced by dependent services. The local DNS name is distributed to App Mesh; when other services look for that service name, App Mesh will provide access.

Hystrix / circuit breaking: application health checks and high availability are baked into ECS and EKS clusters, but not into plain EC2 deployments. According to the App Mesh roadmap, AWS will implement a Circuit Breaker Policy, but it’s unclear to me how valuable this will be when deploying into an ECS or EKS cluster. In these cases, rather than opening the circuit, the cluster will just replace the unhealthy instance.
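For readers less familiar with what "opening the circuit" means, here is a minimal sketch of the circuit breaker pattern in Python. It is a generic illustration of the idea, not Hystrix's implementation and not anything App Mesh provides. The threshold, the reset interval, and the injectable clock are all choices made for this example.

```python
import time


class CircuitBreaker:
    """Fail fast after repeated failures, then allow a trial call later."""

    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after  # seconds to wait before a trial call
        self.clock = clock              # injectable so the example is testable
        self.failures = 0
        self.opened_at = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_after:
            return "half-open"
        return "open"

    def call(self, fn, *args, **kwargs):
        if self.state == "open":
            raise RuntimeError("circuit open: failing fast")
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            raise
        # A success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result


def failing_backend():
    raise ValueError("backend unavailable")


now = [0.0]  # fake clock so the example does not need to sleep
breaker = CircuitBreaker(failure_threshold=2, reset_after=10.0, clock=lambda: now[0])
for _ in range(2):
    try:
        breaker.call(failing_backend)
    except ValueError:
        pass
print(breaker.state)  # the breaker has seen two failures in a row
```

The contrast with the cluster approach is that a breaker protects the caller while the backend stays unhealthy, whereas ECS or EKS removes and replaces the unhealthy instance itself.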

Zuul / intelligent routing: implemented with the Virtual Router. Traffic is routed to Virtual Nodes based on protocol and URI path. In addition, Virtual Node routing can be weighted, for example sending 10% of traffic to Virtual Node A and 90% to Virtual Node B. This can be useful when deploying a new version of a microservice using a Canary Deployment.
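The weighted split can be sketched as follows. The route dictionary mirrors the shape of an App Mesh HTTP route spec as I understand it (a prefix match plus weighted targets), with hypothetical node names. The `pick_target` function is only a local simulation of how weights translate into traffic share; in a real mesh the Envoy proxies make that choice.

```python
import random


def canary_route_spec(stable: str, canary: str, canary_weight: int, prefix: str = "/") -> dict:
    """Build an HTTP route spec that splits traffic between two virtual nodes."""
    return {
        "httpRoute": {
            "match": {"prefix": prefix},
            "action": {
                "weightedTargets": [
                    {"virtualNode": stable, "weight": 100 - canary_weight},
                    {"virtualNode": canary, "weight": canary_weight},
                ]
            },
        }
    }


def pick_target(spec: dict, rng: random.Random) -> str:
    """Simulate one routing decision according to the route's weights."""
    targets = spec["httpRoute"]["action"]["weightedTargets"]
    names = [t["virtualNode"] for t in targets]
    weights = [t["weight"] for t in targets]
    return rng.choices(names, weights=weights, k=1)[0]


# 90% of traffic to the current version, 10% to the canary.
route_spec = canary_route_spec("employee-v1", "employee-v2", canary_weight=10)

rng = random.Random(42)  # seeded so repeated runs give the same sample
picks = [pick_target(route_spec, rng) for _ in range(10_000)]
canary_share = picks.count("employee-v2") / len(picks)
print(f"canary share: {canary_share:.1%}")
```

Promoting the canary then becomes a matter of raising `canary_weight` in steps and updating the route, without touching either version of the service.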

Wrap Up

Although I focused on the ECS use case, there are other implementation options available for deploying microservices into App Mesh. For example, the microservice can be deployed on an EC2 instance instead of into an ECS cluster. Kubernetes pods are also a supported configuration. In each of these scenarios, the touchpoint is the App Mesh construct called the Virtual Node.

I hope that you found this helpful. If you’d like to learn more, here are a handful of resources to get you started:

AWS Online Tech Talk – April 23 | App Mesh Service Deep Dive

“Join us to learn about how AWS App Mesh can help give you end-to-end visibility and manage traffic routing to ensure high availability for your services. We will cover the capabilities of App Mesh, how to use it with AWS, partner, and community tools, and how to get started using App Mesh.

Learning Objectives

- Learn what App Mesh is and how it works

- Learn how to use App Mesh for monitoring services running on ECS, EKS, Fargate, and EC2

- Learn how to use App Mesh to route traffic between services”

- Register: https://pages.awscloud.com/AWS-Online-Tech-Talks_2019_0405-CON.html

Further Reading

Announcement blog post

https://aws.amazon.com/blogs/aws/aws-app-mesh-application-level-networking-for-cloud-applications/

Introduction from re:Invent 2018

- https://www.youtube.com/watch?v=GVni3ruLSe0

- https://www.slideshare.net/AmazonWebServices/new-launch-introducing-aws-app-mesh-service-mesh-on-aws-con367-aws-reinvent-2018

Sample App Mesh Applications

https://github.com/aws/aws-app-mesh-examples/tree/master/examples

A Medium article that walks through the sample app: https://medium.com/@chris.rimondi/aws-app-mesh-first-take-f959b7d8430b

Disclosure

We would like to point out that we are a certified AWS Partner (Standard Tier Consulting Partner). We have numerous partnerships with different industry leaders, like AWS and Microsoft, in an effort to provide our clients with the best education and services.

That said, Keyhole Software is technology agnostic; we will always use the best tool for the project.

More From Brad Flood
