(One of these graphics seems to accompany web Apple Silicon M1 material. Here, they’re a replaceable placeholder for the splash-banner elves.)

Introduction

In June, Apple announced a two-year transition from Intel to Apple Silicon for the iMac and MacBook line. I knew Apple had lost their mind. But, before Christmas, I owned my very own chunk of Apple Silicon living in an attractive milled-aluminum case.

In this article, I’ll discuss the Apple M1 system on a chip (SoC) used as a software development computer. I’ll cover installation, and I’ll also talk through running apps that support development on my M1. These apps are listed below.

- FileZilla

- Homebrew

- Yarn, Node, Git, Java, SSL, Python …

- JetBrains IDEs

- VS Code

- Dashlane password manager

- Parallels Toolbox

- Parallels Desktop

- Windows 10 Pro

- Ubuntu Server

- Docker containers

- GPU — Apple’s deferred lighting rendering example

- Neural Engine classifier

- Affinity Publisher

- Firefox and Chrome

- iCloud

Life after Intel?

I use an Intel Core i7 MacBook Pro to develop code via third-party apps. It hosts a Windows VM as well as an Ubuntu VM.

Was it sunset for my Intel Mac? I assumed that the Apple Silicon machines would use slow Intel emulation. Would there be no near-term containers or virtualization?

macOS 11 Big Sur

In early November, I installed the major release of macOS Big Sur on that Core i7 MacBook. The OS included evolutionary changes.

Sharp corners on icons and windows gave way to soft radiuses. There was a new iOS-like control center. My email no longer sorted by clicking on the inbox heading. Notwithstanding, Big Sur seemed okay on Intel chips.

Ten days later, Apple released three Apple Silicon M1 machines.

Apple Silicon M1 Reviews

I saw positive M1 reviews. Benchmarks showed a $700, 8 GB Mac mini M1 mostly besting an expensive 16″ Core i9 MacBook Pro in Final Cut Pro video processing performance. Apple Silicon vs Intel Apple. Apples compared to Apples.

Could I use an M1 to make and test software? I read slick marketing on Apple’s Mac pages. I pored over specs. I viewed the Mac mini M1 page on my iPhone; it projected a Mac mini M1 onto my lap via AR.

Cute.

The clincher? Price. An 8 GB Mac mini M1 costs $700. Not bad for a Mac!

Purchase

The $700 seemed a fair price for an M1 trial, and Apple would let me return it if it flunked.

Two days after Thanksgiving I clicked an 8 GB Mac mini M1 to my doorstep. That was just seven days after initial M1 availability. I didn’t open it until Christmas.

In the meantime, I pored over material about RISC, ARM64, and three M1 offerings. The machine was calling to me.

But what about the specs?

Apple M1

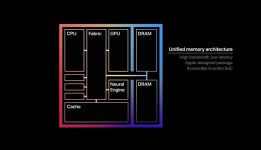

An Apple M1 has the following characteristics:

- System on a Chip (SoC) — cores share RAM with custom Apple engines

- 8 GB or 16 GB RAM baked into a system package with SoC; no RAM upgrade possible

- ARM64 RISC – 8 cores

- 4 Efficiency cores codenamed Icestorm

- 1/10 the power drain of the faster cores

- cache: 128 KB L1 instruction, 64 KB L1 data, 4 MB L2

- 4 Performance cores codenamed Firestorm

- cache: 192 KB L1 instruction, 128 KB L1 data, 12 MB L2

- Decoders — fixed-width RISC instructions simplify multiple decoders for out-of-order execution

- OoOE: read this article

- 5-nanometer process (20 was common)

- Added acumen per acre

- Faster furlongs per fortnight

- Shorter stretches to span

- Coprocessors

- Apple’s custom Neural Engine

- 16 cores

- 11 trillion operations per second

- Apple-designed GPU

- 8 cores having 16 execution units (EUs) per core, each EU having 8 ALUs

- total 128 EUs

- total 1024 ALUs

- 25,000 simultaneous threads

- 2.6 TFLOPS floating-point performance

- Security processor: security integrated into the hardware, like iPhones and iPads

- Shared matrix multiplication coprocessor

Apple’s ARM64 cores implement a paid licensed specification from ARM. Apple placed 8 ARM cores and 3 in-house coprocessors into one SoC, all with shared access to the main RAM in a package.
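
The GPU totals in the list above can be cross-checked with a little arithmetic. This is a minimal sketch, not Apple's published math: the 8 cores, 128 EUs, 1024 ALUs, and 2.6 TFLOPS come from the list, while the clock speed and the two floating-point operations per ALU per cycle (one fused multiply-add) are my own assumptions.

```python
# Figures taken from the spec list above.
GPU_CORES = 8
TOTAL_EUS = 128
TOTAL_ALUS = 1024

# ASSUMPTIONS (not from the spec list): a GPU clock of about 1.278 GHz and
# 2 floating-point operations per ALU per cycle (one fused multiply-add).
ASSUMED_CLOCK_GHZ = 1.278
ASSUMED_FLOPS_PER_ALU_PER_CYCLE = 2


def peak_tflops(alus, clock_ghz, flops_per_cycle):
    """Theoretical peak: ALUs x FLOPs per cycle x GHz gives GFLOPS; divide for TFLOPS."""
    return alus * flops_per_cycle * clock_ghz / 1000.0


# Work backward from the totals to the per-core and per-EU counts.
eus_per_core = TOTAL_EUS // GPU_CORES
alus_per_eu = TOTAL_ALUS // TOTAL_EUS
tflops = peak_tflops(TOTAL_ALUS, ASSUMED_CLOCK_GHZ, ASSUMED_FLOPS_PER_ALU_PER_CYCLE)

print(f"EUs per core: {eus_per_core}")
print(f"ALUs per EU:  {alus_per_eu}")
print(f"Peak TFLOPS:  {tflops:.2f}")
```

Under those assumptions the totals hang together: 128 EUs across 8 cores is 16 EUs per core, and the peak figure rounds to the advertised 2.6 TFLOPS.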

How would my Intel-based applications fare?

Universal Apps + Rosetta

I remembered early dual PowerPC-Intel apps. Apple’s earlier Mac OS X contained an unobtrusive translator named Rosetta. Apple had migrated its product line from PowerPC to Intel processors under Mac OS X.

Their applications were Universal Apps. A Mac app is a bundle of code files and resources. A Universal App contains code from dual architectures within its bundle. An OS instance knew its booted architecture. It loaded the code for that architecture instance.
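
To make the bundle idea concrete, here is a minimal Python sketch that reads the header of a multi-architecture ("fat") Mach-O executable and lists the architectures inside. The header layout (a big-endian magic number, a count, then 20-byte entries) and the CPU type constants are the standard Mach-O values as I understand them. The function name is my own, and the sketch ignores the 64-bit fat header variant and single-architecture ("thin") files.

```python
import struct
from typing import List

# Big-endian magic number that opens a 32-bit "fat" Mach-O header.
# (Java class files share this magic, so this check alone is not proof.)
FAT_MAGIC = 0xCAFEBABE

# Standard Mach-O CPU type values for the architectures discussed here.
CPU_NAMES = {
    0x00000007: "i386",
    0x01000007: "x86_64",
    0x00000012: "ppc",
    0x01000012: "ppc64",
    0x0100000C: "arm64",
}


def fat_architectures(data: bytes) -> List[str]:
    """Return the architecture names listed in a fat Mach-O header.

    Returns an empty list when the data does not start with a fat header.
    """
    if len(data) < 8:
        return []
    magic, count = struct.unpack_from(">II", data, 0)
    if magic != FAT_MAGIC:
        return []
    names = []
    for index in range(count):
        offset = 8 + index * 20  # each entry holds five 32-bit fields
        if offset + 20 > len(data):
            break
        cputype, _subtype, _file_offset, _size, _align = struct.unpack_from(
            ">IIIII", data, offset
        )
        names.append(CPU_NAMES.get(cputype, hex(cputype)))
    return names
```

To try it, read the first few hundred bytes of an app's executable (for a hypothetical app, something like `MyApp.app/Contents/MacOS/MyApp`) and pass them in. A present-day Universal App should report both `x86_64` and `arm64`.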

That didn’t help an Intel Mac user who downloaded a third-party app with only PowerPC bits in its bundle. There was a translator for that.

Apple’s Mac OS X included an invisible bridge named Rosetta that could quickly transform a third-party PowerPC app into an Intel rendition.

I once had such a Mac: a plastic white 2006 Intel-based MacBook that could load and execute a PowerPC app. (The machine also fused itself to a hotel’s polyester bedspread because it ran quite hot.) Anyway, I saw no hesitancy in loading a PowerPC app.

For the present-day M1 transition, Apple packed a Rosetta 2 Intel-to-M1 bridge into Big Sur.

I stepped through the expected macOS initialization wizard on my M1. I logged it into iCloud. I tried many of the Big Sur apps in its Launchpad. They were snappy with no surprises. I soon forgot I was running Intel on ARM64 architecture.

Apple excels at migrating a product line into a new architecture while minimizing disruption.

I began installing third-party development apps.

FileZilla

For my first third-party application, I installed FileZilla from a dmg file. Big Sur popped the following one-time confirmation box.

That’s Rosetta 2. I clicked the install button. That’s the last I ever saw of that popup.

FileZilla worked perfectly with scant delay on its Rosetta pass. Afterward, any normal Intel-based apps worked perfectly. They didn’t seem slow (I’m slow).

The Apple App Store has a growing native Apple M1 section. None of my little toolchain apps are there. I call those in from the wild.

Homebrew

I prefer Homebrew for installing and managing command-line toolchain packages. Homebrew is special. You can normally install it with a single curl command, but that wasn’t the case here: my initial attempt at installation failed.

I found a hint: duplicate the Big Sur Mac Terminal app, and then set “Open using Rosetta” in the clone’s GetInfo popup. I installed Homebrew from that cloned Terminal app.

Thereafter, I would type “brew” into that Rosetta-blessed Terminal to install, upgrade, list, or remove Homebrew packages.
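
With two Terminal apps around, it helps to know which kind of process you are in. The sketch below asks macOS through the `sysctl.proc_translated` key, which reports 1 for a process translated by Rosetta 2 and 0 for a native one. The function names are mine, and on a non-Mac machine, or a Mac where the key does not exist, the sketch simply returns `None`.

```python
import platform
import subprocess


def interpret_proc_translated(text):
    """Map the sysctl output to True (translated), False (native), or None."""
    value = text.strip()
    if value == "1":
        return True
    if value == "0":
        return False
    return None


def rosetta_status():
    """Return True under Rosetta 2, False when native, None when unknown."""
    if platform.system() != "Darwin":
        return None  # not macOS, so the question does not apply
    try:
        result = subprocess.run(
            ["sysctl", "-n", "sysctl.proc_translated"],
            capture_output=True,
            text=True,
            check=True,
        )
    except (OSError, subprocess.CalledProcessError):
        return None  # key missing or sysctl unavailable
    return interpret_proc_translated(result.stdout)


if __name__ == "__main__":
    print("machine:", platform.machine())
    print("translated by Rosetta 2:", rosetta_status())
```

Run from the Rosetta-enabled Terminal clone with an Intel build of Python, I would expect `True`. From the stock Terminal with a native Python, I would expect `False`.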

That opened floodgates.

Yarn, Node, Git, Java, SSL, Python … oh my!

Toolchain Installation went swimmingly – thanks to Homebrew executing in that Rosetta 2 Terminal clone. Here’s the brew installed list:

    mauget@Louiss-Mac-mini ~ % brew list
    autoconf        gettext         ncurses            pcre2              tcl-tk
    docker          git             node               [email protected]   tree
    docker-machine  icu4c           openjdk            readline           xz
    gdbm            kubernetes-cli  [email protected]   sqlite             yarn
    atom            multipass
    mauget@Louiss-Mac-mini ~ %

Did you notice Docker in there? See the later section named Docker Tech Preview.

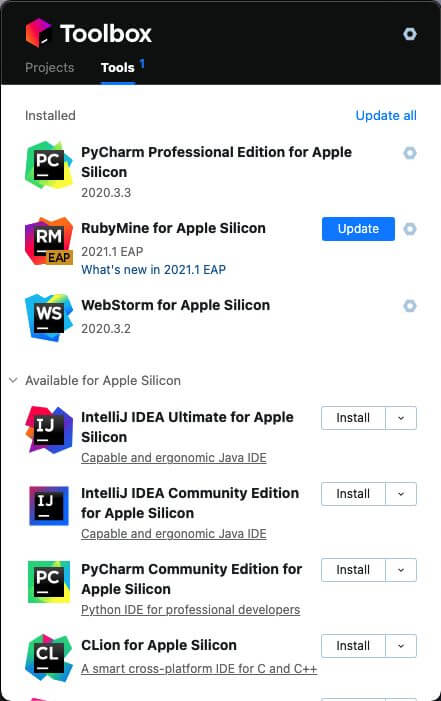

I have a JetBrains license. Could I install its Toolbox app for launching and maintaining my raft of JetBrains IDEs?

JetBrains

I installed JetBrains Toolbox using its drag-to-Applications dmg file. The Toolbox GetInfo showed Kind: Application Intel. Rosetta 2 was doing its job. I had no issues installing WebStorm from a click within the Toolbox.

I set my SSO public cert into WebStorm. I added settings refs to my new yarn, git, node, eslint, and others.

In WebStorm, I pulled one of my React applications from GitHub and ran yarn install from its local terminal followed by yarn start. The application UI opened in my default browser.

Okay, I could write and test code on an M1 via invisible help from Rosetta.

JetBrains Apple Silicon

A month after my initial go at JetBrains, the Toolbox replaced its contents with IDEs labeled for Apple Silicon. The world advanced another step toward Apple Silicon.

I had to reactivate them, but they worked properly. The prognosis for using M1 machines for development improved.

How about Visual Studio Code?

Visual Studio Code Insiders for ARM64

I installed the Intel-based Visual Studio Code, invisibly helped by Rosetta 2. I built the React project that I’d used in WebStorm above. No issues.

Soon, the Microsoft Insiders program added “Visual Studio Code Insiders for ARM64” to the ARM bandwagon. I headed to an Insiders Download for ARM64, installed it, chose a nice set of extensions, cloned my React project, and ran it on the project’s React dev server.

No issues. The IDE was snappy and lively.

Everything was excellent, but I was tired of pasting passwords across a shared desktop from my MacBook.

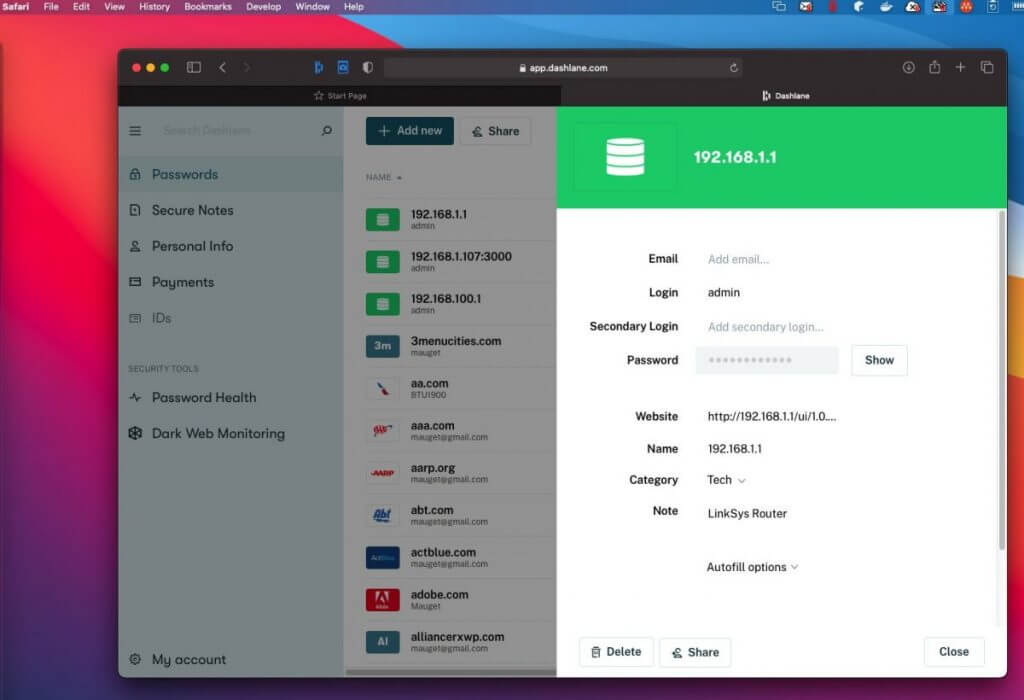

Dashlane Password Manager

After the first day of using the M1 with a mouse, keyboard, and monitor (Christmas, 2020), I stashed it, headless, on a desk upstairs. I continued to remote into it from my Intel-based MacBook. When I needed a password, I copied it from Dashlane on the MacBook into the M1 via the remote screen-share.

I installed Dashlane onto the M1 to make it self-reliant. Password managers are complex. I wondered how it would work out here.

Final verdict: Good. The Dashlane installer detected that it was targeting an M1.

It worked flawlessly, syncing passwords across my Dashlane-enabled devices.

Parallels Toolbox

Parallels Toolbox centralizes convenient utility functions like window management, screen capture, movie captures, and video conversion on my MacBook. It’s a Mac utility having no operational relationship to the Parallels Desktop VM.

Parallels spammed me an offer to try-and-buy Parallels Toolbox for Apple M1. I tried and paid $15. I used it for all screen captures in this blog.

GetInfo shows that it’s a Universal App. It’s bit-for-bit identical to the copy I have in my Intel MacBook.

I despaired of Parallels soon producing a VM for the M1. Within days, an offer for the Parallels Desktop M1 eval program arrived.

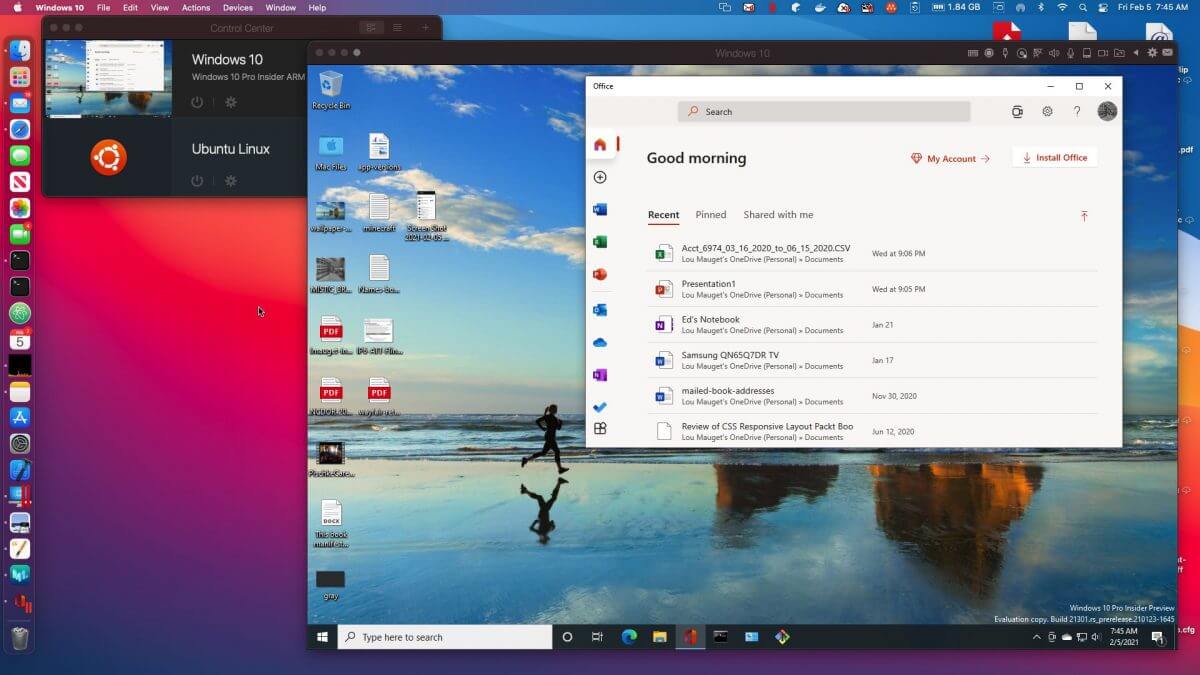

Windows Pro ARM on Parallels Desktop

I learned I could install a “Parallels Desktop for M1” eval copy that would work with a Microsoft Insider Windows 10 Pro ARM. I snagged one free-of-charge as an evaluation.

The installation displayed this popup that told me it knew its target.

Parallels virtualization worked — mostly.

Windows 10 Pro ARM looks exactly like Intel Windows.

Bundled apps such as Calculator and Paint 3D draw a window frame and then disappear, at least in the VM. Each flickering app reminded me of a failed “three to beam up” in Star Trek. Is this an issue from Parallels or from Microsoft? Whoever has the issue will resolve it.

Sidebar: as we go to (Word) press, an update to the Parallels Desktop fixed issues. The network always connects. Each bundled Windows app popped a one-time self-update window as I tried it. Solitaire works.

Here’s a picture of Windows 10 Pro ARM eval running web-based Microsoft Office. Here, all executed in a Parallels Desktop window within the Mac mini M1. It’s a Remote Desktop session to the headless M1 as well.

Wheels within wheels; fires within fires.

If necessary, I can run my toolchain, WebStorm, or VS Code on the Windows VM.

Ubuntu Server 20.04.2 on Parallels VM

Could I stand up Ubuntu Server ARM 20.04.2 in a VM? Yes!

Docker within the virtual Ubuntu worked the “Hello World” container. I configured and ran MicroK8s, a reduced Kubernetes from Canonical. I could query and configure its myriad items.

It was a working, ARM-based Linux in an ARM-based VM hosted on a chunk of Apple Silicon controlled by a major macOS revision.

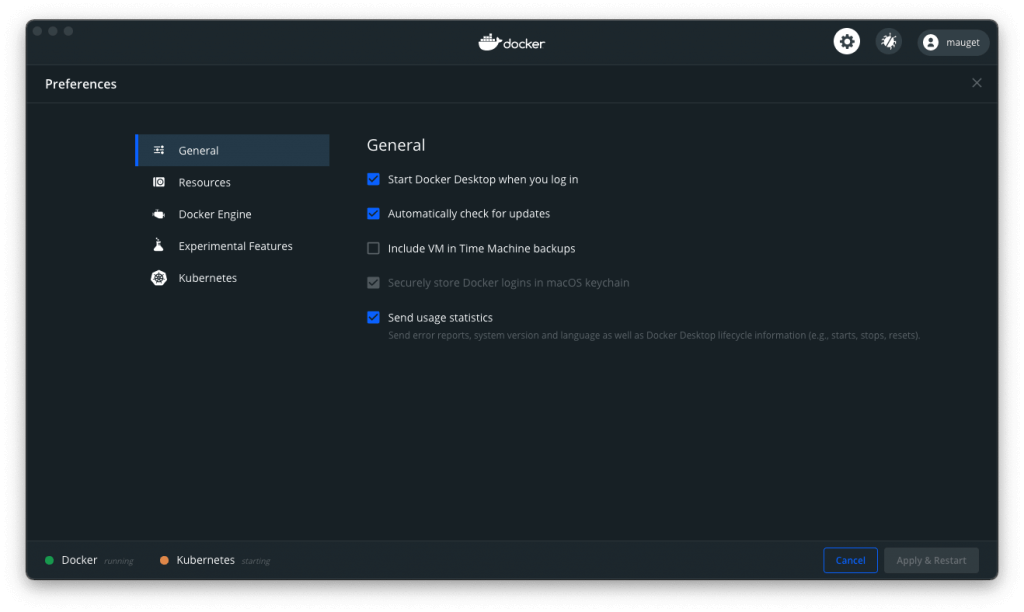

Could I run Docker directly on M1 Big Sur?

Docker Tech Preview

Docker published a preview for the M1 after I received mine. It notes caveats.

The installation was a traditional dmg file. I dragged a 1.39 GB glob of Docker to the Applications folder and clicked. Here it is:

The UI suggested a copy-paste, “getting started image” that worked.

I requested an Intel-based busybox image from Docker. It downloaded the image and created a container. This completed faster than my Roku switches TV programs. Emulation handled the AMD64 container; as far as I can tell, the Docker preview does this with QEMU rather than Rosetta 2. You’ll see the whole thing followed by my ls -la below.

    mauget@Louiss-Mac-mini ~ % docker run -it --platform linux/amd64 busybox
    Unable to find image 'busybox:latest' locally
    latest: Pulling from library/busybox
    e5d9363303dd: Pull complete
    Digest: sha256:c5439d7db88ab5423999530349d327b04279ad3161d7596d2126dfb5b02bfd1f
    Status: Downloaded newer image for busybox:latest
    / # ls -la
    total 44
    drwxr-xr-x    1 root     root          4096 Jan 30 18:46 .
    drwxr-xr-x    1 root     root          4096 Jan 30 18:46 ..
    -rwxr-xr-x    1 root     root             0 Jan 30 18:46 .dockerenv
    drwxr-xr-x    2 root     root         12288 Jan 12 00:39 bin
    drwxr-xr-x    5 root     root           360 Jan 30 18:46 dev
    drwxr-xr-x    1 root     root          4096 Jan 30 18:46 etc
    drwxr-xr-x    2 nobody   nobody        4096 Jan 12 00:39 home
    dr-xr-xr-x  122 root     root             0 Jan 30 18:46 proc
    drwx------    1 root     root          4096 Jan 30 18:47 root
    dr-xr-xr-x   13 root     root             0 Jan 30 18:46 sys
    drwxrwxrwt    2 root     root          4096 Jan 12 00:39 tmp
    drwxr-xr-x    3 root     root          4096 Jan 12 00:39 usr
    drwxr-xr-x    4 root     root          4096 Jan 12 00:39 var
    / #
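
The `--platform linux/amd64` flag in that command is what forces an Intel image onto an ARM host. As a rough sketch of the decision involved, the helper below compares the architecture in a Docker platform string against the host's architecture and reports whether emulation would be needed. The function names and the alias table are my own illustration, not part of Docker.

```python
import platform

# Different tools name the same architectures differently:
# macOS reports "arm64", Linux reports "aarch64", Docker says "arm64";
# "x86_64" and "amd64" are likewise the same thing.
_ARCH_ALIASES = {
    "x86_64": "amd64",
    "amd64": "amd64",
    "arm64": "arm64",
    "aarch64": "arm64",
}


def docker_arch(machine):
    """Normalize a machine or architecture name to Docker's spelling."""
    key = machine.lower()
    return _ARCH_ALIASES.get(key, key)


def needs_emulation(image_platform, host_machine=None):
    """True when an image platform such as "linux/amd64" differs from the host CPU."""
    host = docker_arch(host_machine or platform.machine())
    parts = image_platform.split("/")
    # Platform strings look like os/arch or os/arch/variant.
    image_arch = docker_arch(parts[1] if len(parts) > 1 else parts[0])
    return image_arch != host


if __name__ == "__main__":
    for candidate in ("linux/amd64", "linux/arm64"):
        print(candidate, "needs emulation here:", needs_emulation(candidate))
```

On an M1, `linux/amd64` should come back as needing emulation and `linux/arm64` as native, which matches what I saw with busybox.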

For a realistic case, I created an nginx server container with a bind mount to serve the React app I created earlier in WebStorm. It worked as expected across machines on my network.
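
I didn't record the exact command, so the following is a reconstruction under stated assumptions rather than what I actually typed. It builds a `docker run` argument list that bind-mounts a React build folder, read-only, onto `/usr/share/nginx/html`, the default document root of the official nginx image. The container name, the host port, and the `build` folder are placeholders.

```python
from pathlib import Path


def nginx_run_command(build_dir, host_port=8080, name="react-preview"):
    """Build the argv for serving a static folder from an nginx container.

    ASSUMPTIONS: the container name, host port, and folder are illustrative.
    """
    source = Path(build_dir).resolve()  # bind mounts need an absolute path
    return [
        "docker", "run",
        "--detach",
        "--name", name,
        "--publish", f"{host_port}:80",  # host port mapped to nginx's port 80
        "--volume", f"{source}:/usr/share/nginx/html:ro",
        "nginx",
    ]


if __name__ == "__main__":
    # Print the command rather than run it, so the sketch is safe to try.
    print(" ".join(nginx_run_command("build")))
```

Passing the list to `subprocess.run` would start the container. Other machines on the network could then reach the app on the chosen host port.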

I tried making two busybox containers that shared a common volume. Any edit in that volume by any party reflected to the other parties as expected.

None of the preview’s listed caveats tripped it up. Docker worked for my range of development.

Now, what about those “engines” on the SoC?

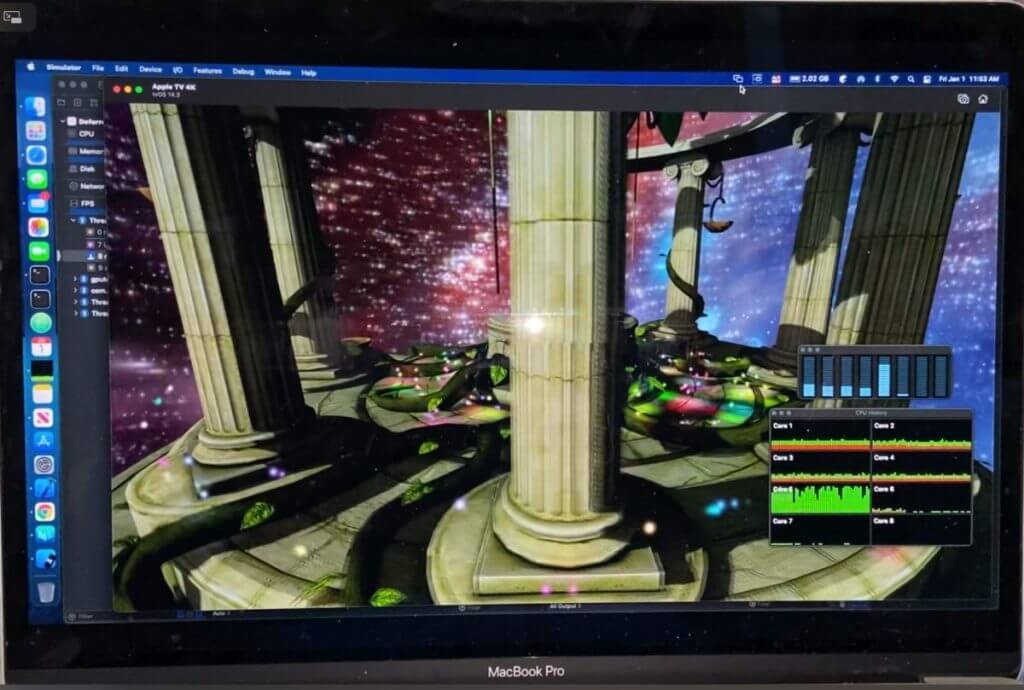

Apple SoC-Integrated Custom GPU

Here’s what it looks like on my screen.

That’s Apple’s deferred lighting Metal sample running on my M1 viewed in a shared desktop session. It’s a bit shaky because it’s rendering over a WiFi network. The rendition is solid when viewed on an attached monitor.

Here’s a phone snapshot of that remote session movie.

Notice the CPU monitors floating at the bottom-right? Those first four short bars are the high-efficiency cores. The tall fifth bar is a performance core that is largely driving only the remote session.

When I run the sample from a connected monitor, keyboard, and mouse, that performance core is flat. Any slight jerks and rips in the movie are products of transmitting the remote session.

Apple Neural Engine

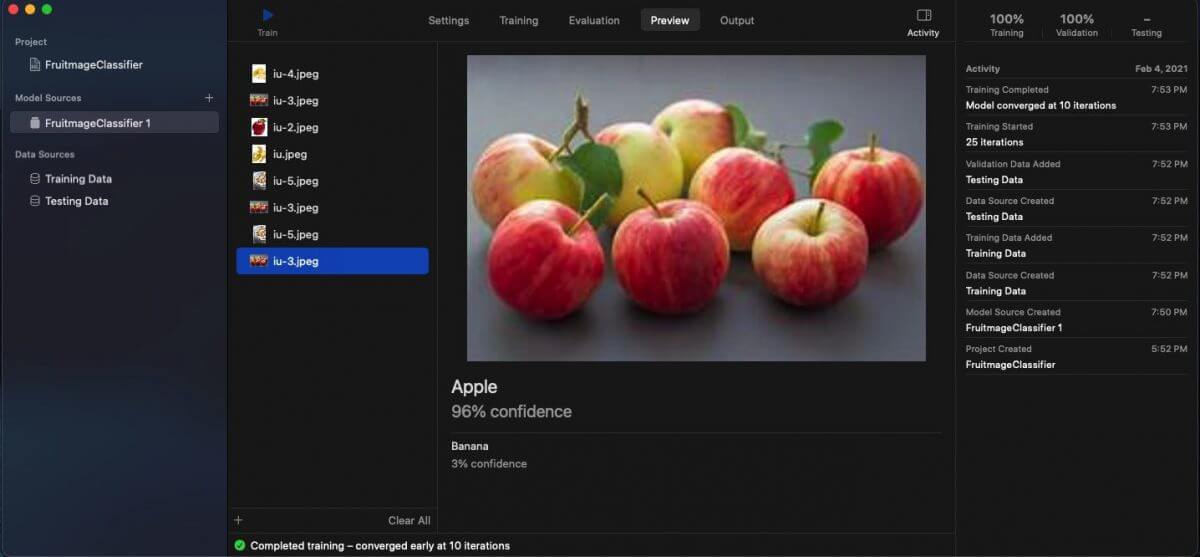

AI classification is magic. I used Xcode’s standard classifier to involve the Neural Engine in building a model that distinguishes apples from bananas.

I found pictures of apples (the fruit) that I placed in a folder named “Apple.” I downloaded banana images to a “Banana” folder. Each folder names its contents. During training, the classifier learns to recognize the pictures in the folder named “Apple” are of apples. Bananas? Corresponding action.

Easy to set up. No coding required. A name determines the classification name of its contents.
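
The folder-name-as-label convention is easy to picture in code. Xcode's classifier needs no code at all, so this is only an illustrative Python sketch of the idea: walk a training folder, treat each subfolder name as the class label, and pair every image file with it. The function name and the list of image suffixes are my own choices.

```python
from pathlib import Path

# Suffixes treated as images in this sketch (an assumption, not Xcode's list).
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def labeled_images(root):
    """Return (image_path, label) pairs, where the label is the parent folder name."""
    pairs = []
    class_dirs = sorted(p for p in Path(root).iterdir() if p.is_dir())
    for class_dir in class_dirs:
        for image in sorted(class_dir.iterdir()):
            if image.suffix.lower() in IMAGE_SUFFIXES:
                pairs.append((image, class_dir.name))
    return pairs


if __name__ == "__main__":
    # Expects a layout such as training/Apple/*.jpg and training/Banana/*.jpg.
    for path, label in labeled_images("training"):
        print(label, path.name)
```

Adding a third class would mean adding a third folder, with nothing else to change.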

I clicked start. Training converged faster than a typical web page paints. It converged in 10 neural network training passes. In other words, variance between test and projected classifications drove to a tiny delta in ten rapid rounds.

To demonstrate the model, I dropped previously unseen images of apples and bananas on the classifier’s UI preview panel. Result? Astounding.

It returned the correct label with confidence in the high 90s or at 100 percent. It even named off-the-wall downloaded images of banana pudding as “Banana” or displayed “Apple” for sliced apples. Its training set included a few pictures like those.

Just don’t show it a house! It’s only seen bananas and apples in its training.

The Xcode classifier has a pane that shows resource consumption during training. Training didn’t stress the M1.

I began training a model for handwritten numeral identification by training from the MNIST training/testing database. That training took 35 minutes to converge. I plan to compare the same task on my Intel machine. This one is a work-in-progress.

TensorFlow is mainstream. Apple published a fork, tensorflow_macos, that accelerates TensorFlow on the M1 through Apple’s ML Compute framework. This is a thing to try later.

What about using an M1 for desktop publishing?

Desktop Publishing

People have used Macs for desktop publishing from Mac’s day one. I recently published a children’s book as a COVID coping diversion. I used Affinity Publisher to create a special PDF to send to the printer.

I noticed Affinity Publisher listed under the App Store heading, “Apple M1.” I carried out a GetInfo drill-down on the copy on my MacBook. Sure enough, it’s a Universal App.

I dragged it through the iCloud to my M1.

Affinity Publisher operated normally. I installed Affinity Designer as well.

Publishing reminded me of office applications. A developer needs Word, Excel, and PowerPoint or software that consumes and exports those kinds of files.

Office

I have a single-CPU license for MS Office on my MacBook, not the M1. I found that the web-based MS Office suite was suitable for my work on the M1.

By this point, I’d populated the M1 with most of the tools I use, but it needed browser diversity.

Firefox and Chrome

I installed Firefox and Chrome. Firefox asked me if I wanted an Apple Silicon download. Chrome did not.

Each installed a Universal App. Each synced my bookmarks and settings. The Firefox sync reordered the bookmark toolbar on my MacBook. Annoying considering I was on the M1.

Speaking of syncing, what about the cloud?

iCloud

Apple’s iCloud contains my MacBook Desktop and Document folders’ files reaching through ancestor Macs from a lineage starting in 2012. No surprise, but those appeared on my M1. I’d signed onto iCloud during the M1’s Big Sur installation.

Seamless! I had to change the wallpaper on the M1 to indicate which desktop I was on. It had duplicated the desktop of my MacBook.

What else? Laptop users care about battery charge life.

Battery

Word on the street touts low battery drain with ARM-based phones and tablets. Blog authors claim the same for the two M1 laptops. Some authors dispute this, but I’ve seen believable comparisons between Intel Macs and M1 Macs where the M1 hours-per-charge looks better.

The SoC enables shared RAM across GPU and Neural Engine that could mean fewer cycles expended for some work. RISC features of ARM64 could get some credit for battery life as well.

Sidebar: An OoOE pipelined instruction decoder chops fixed-length instructions from an instruction buffer. A fixed-length instruction decoder needs trivial logic to extract a series of RISC instructions. This is more work for a CISC decoder. Intel CISC instruction lengths vary from 1 to 15 bytes. The extraction decomposes each instruction into an ordered list of atomic operations. A flow analysis reorders those operations to overlap some of their execution for an average speed increase.

That’s part of OoOE. Average clock-cycles-per-instruction can drop below 1 in some designs, making for a superscalar processor.

Four is said to be a practical limit of the number of CISC decoders. Increased extraction complexity could be a power-drain contributor, along with a greater average number of operations per CISC instruction. The lower complexity of RISC decoders seems one factor driving lower battery drain of ARM cores — unless there is a need for more RISC instruction executions to implement a given program.
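
A toy model shows why fixed-width instructions make life easier for parallel decoders. In the sketch below, the fixed-width case computes every instruction's start offset directly from its index, so any number of decoders could begin at once. The variable-width case has to walk the buffer, because each start depends on the length of the instruction before it. The variable-width encoding here, where the first byte of each instruction states its total length, is hypothetical and much simpler than real x86 encoding.

```python
def fixed_width_starts(buffer, width=4):
    """Start offsets for fixed-width instructions: pure arithmetic, no scanning."""
    return list(range(0, len(buffer) - width + 1, width))


def variable_width_starts(buffer):
    """Start offsets for a TOY variable-width encoding.

    HYPOTHETICAL encoding: the first byte of each instruction holds its total
    length in bytes (1 to 15). Real x86 length decoding is far more involved.
    """
    starts = []
    position = 0
    while position < len(buffer):
        length = buffer[position]
        if not 1 <= length <= 15 or position + length > len(buffer):
            break  # malformed or truncated instruction: stop scanning
        starts.append(position)
        position += length  # the next start is unknown until this length is read
    return starts


if __name__ == "__main__":
    print(fixed_width_starts(bytes(16)))
    print(variable_width_starts(bytes([1, 3, 0, 0, 2, 0, 5, 0, 0, 0, 0])))
```

The loop in the second function is the serial dependency that a CISC front end has to work around with extra logic, which is where the added complexity and power draw would come from.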

Battery life is a moot issue for the desktop M1, but I detect nil power-driven heat emission. The M1 case is always metallic-cool. A wet finger detects a slight draft from the fan vent.

I cannot say the same for my Intel-based MacBook Pro.

Summary

And that’s a wrap! Let’s summarize what we’ve covered.

Apple appears to be well along in transitioning its Mac and MacBook line from an Intel CPU base to its own SoC design. The chip combines licensed ARM64 architecture cores with Apple’s neural, GPU, and security engines, probably moved from iPad, iPhone, and Apple TV. A major macOS revision, Big Sur, seamlessly handles both Intel and ARM64 applications via Rosetta 2 and Universal App bundles.

We touched on M1 architectural features. I noted erstwhile Intel-based apps and features I tried on a Mac mini M1 purchase:

- FileZilla

- Homebrew

- Yarn, Node, Git, Java, SSL, Python 3 …

- JetBrains IDEs

- VS Code

- Dashlane

- Parallels Toolbox

- Parallels Desktop

- Windows 10

- Ubuntu Server

- Docker containers

- GPU deferred lighting rendering

- Neural Engine classifier

- Affinity Publisher

- Firefox and Chrome

- iCloud

The M1 line is young. It hit the market a few months ago. Third parties are rapidly producing Universal Apps for the M1, but Intel-based apps run well in the meantime.

I didn’t discuss gaming, a two-monitor limitation, ports, or rumored Bluetooth issues. None of those were issues for me. Battery life is said to be exceptional on M1 MacBooks.

My M1 experiences are positive. It feels like a zippy Intel machine that always runs cool. Apple will announce new offerings.

In the Fall, I’ll trade my Intel MacBook Pro for a new MacBook Pro M1 for development (M2 or Mx?). I’ll hang onto the Mac mini M1 as an additional development machine.