Agentic AI delivery is a software delivery model where autonomous agents execute development tasks inside a governed software development lifecycle (SDLC), operating within architectural guardrails, dependency-ordered backlogs, and test-gated workflows.

In Part 1 and Part 2 of this series, we introduced agentic AI delivery as an enterprise software delivery model and explored how governed, autonomous execution can operate within a traditional SDLC. We described how organizations can structure AI-driven software delivery to preserve traceability, maintain quality gates, and retain architectural control while benefiting from autonomous execution.

This article demonstrates agentic AI delivery in practice. Rather than describing the model conceptually, we implemented a real autonomous execution loop and observed how it behaves inside a governed enterprise SDLC.

Specifically, we ran the Ralph loop across a dependency-ordered backlog of 19 user stories to build a complete full-stack timesheet application with story-level traceability. The goal was not to extend or reinvent the Ralph pattern itself, but to observe how autonomous execution behaves when placed inside a constrained, repeatable, and test-gated delivery system.

The implementation below shows how an AI-accelerated delivery loop performs end-to-end and why governance, intentional sequencing, and architectural constraints are essential for enterprise-ready agentic AI delivery. The full implementation is available in our public repositories, linked later in this article.

What This Agentic AI Delivery Implementation Demonstrates

- Autonomous execution across 19 dependency-ordered user stories

- 1:1 story → test → commit traceability

- Fully test-gated progress inside a governed SDLC

- Observable delivery at every iteration

The Ralph Loop: A Simple Execution Pattern for Agentic AI Delivery

The Ralph loop, originally introduced by Geoffrey Huntley, is a simple agentic execution pattern for applying an AI coding agent against a set of specifications. In our case, the Ralph loop worked through 19 dependency-ordered user stories, serving as the execution mechanism for the governed agentic AI delivery model described earlier in this series.

Often just called “Ralph,” the loop is intentionally simple. It’s essentially a brute-force, persistent cycle that works through specifications one at a time. Much like its namesake from The Simpsons, Ralph isn’t clever, but it’s consistent.

That simplicity is deliberate. It makes agentic AI delivery easier to observe, control, and integrate into a governed software delivery process.

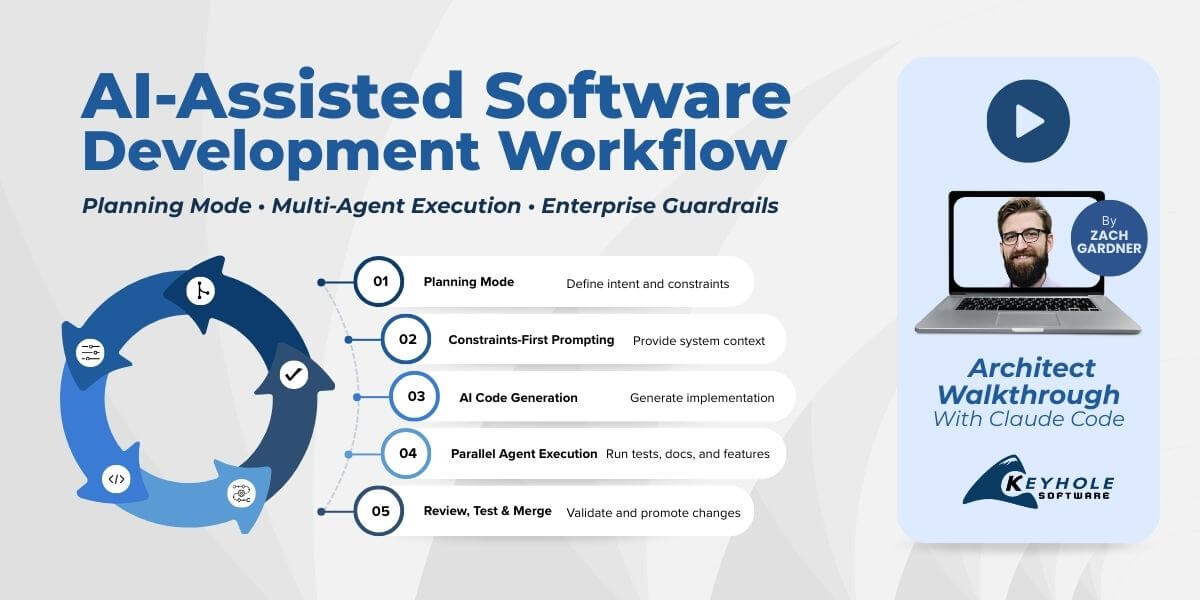

We are actively evaluating the latest agentic coding tools — particularly Anthropic’s Claude Code and OpenAI’s Codex — to understand how autonomous agents behave beyond initial “hello world” implementations and inside real enterprise delivery constraints.

In practice, these tools can be customized to align with an organization’s IT architectures, processes and procedures, tech stacks, lifecycle management, and governance, often directly from the developer’s workstation. This type of structured environment closely mirrors the goals of Platform Engineering, where internal developer platforms provide standardized tooling, guardrails, and automation to improve delivery consistency.

All of this can be done within existing SDLCs. However, producing software faster than traditional requirements gathering and testing processes naturally introduces some friction. We believe that tension will ease as SDLCs evolve from a requirements-first approach to a more intent-driven, code-first model, but that’s a topic for another blog.

This execution pattern demonstrates how agentic AI delivery can operate as a predictable, test-gated delivery system rather than an experimental development workflow.

Invoking the Agent via CLI

Once the Ralph loop is in place, the next piece is how it actually runs. The key is that both Anthropic’s and OpenAI’s coding agents support non-interactive execution: a prompt can be passed directly as a command-line argument instead of being typed into an interactive session. This makes it possible to invoke an agent as part of an autonomous delivery loop. In the context of agentic AI delivery, this is what allows execution to move from interactive assistance to repeatable automation.

As an example, the following Codex command sends a prompt directly from the command line:

$ codex "Create a Unit Test for the Employee Add Timesheet service"
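For unattended runs, both CLIs offer a dedicated non-interactive mode. The sketch below wraps the two behind one function so a script can switch agents without changing its call sites. `run_agent` and the `AGENT` variable are hypothetical names introduced only for this example; `codex exec` and `claude -p` are the non-interactive entry points as we understand them at the time of writing, so confirm them with `codex --help` and `claude --help` for your installed versions.

```shell
# Sketch: send one prompt to an agent CLI without an interactive session.
# AGENT selects the CLI (hypothetical variable; defaults to codex).
run_agent() {
  prompt=$1
  case "${AGENT:-codex}" in
    codex)  codex exec "$prompt" ;;   # OpenAI Codex, non-interactive mode
    claude) claude -p "$prompt" ;;    # Claude Code, print (non-interactive) mode
    *)      echo "unknown agent: ${AGENT}" >&2; return 2 ;;
  esac
}
```

With this in place, `AGENT=claude; run_agent "Create a Unit Test for the Employee Add Timesheet service"` would route the same prompt to Claude Code instead of Codex.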

Context Seeding

Beyond the prompt itself, these agents rely heavily on context. In this case, that context is provided through an AGENTS.md file, which acts like a README for how the agent should operate within the project.

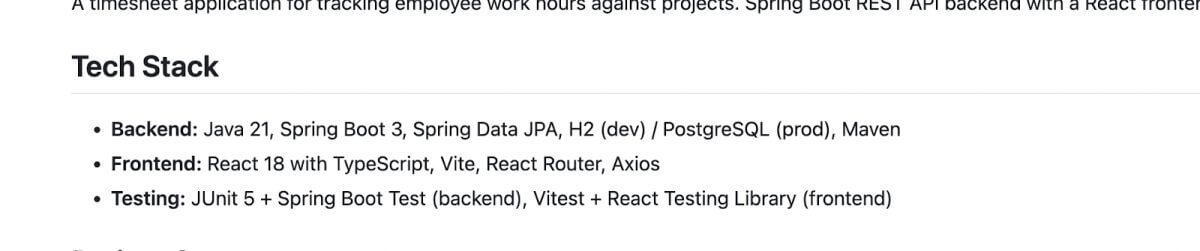

The AGENTS.md file defines the core constraints the agent is expected to work within. This includes the technology stack, architectural assumptions, and testing frameworks that should be used throughout the implementation. Rather than allowing the agent to infer or invent these decisions, they are made explicit up front and supplied as part of the agent’s execution context. For agentic AI delivery to scale, these constraints must be explicit rather than assumed.

Providing this information ahead of time ensures that each iteration starts from the same baseline. The agent is not deciding what frameworks to use or how the system should be structured; it’s operating within a set of predefined choices that reflect how the system would be built by a senior engineering team.

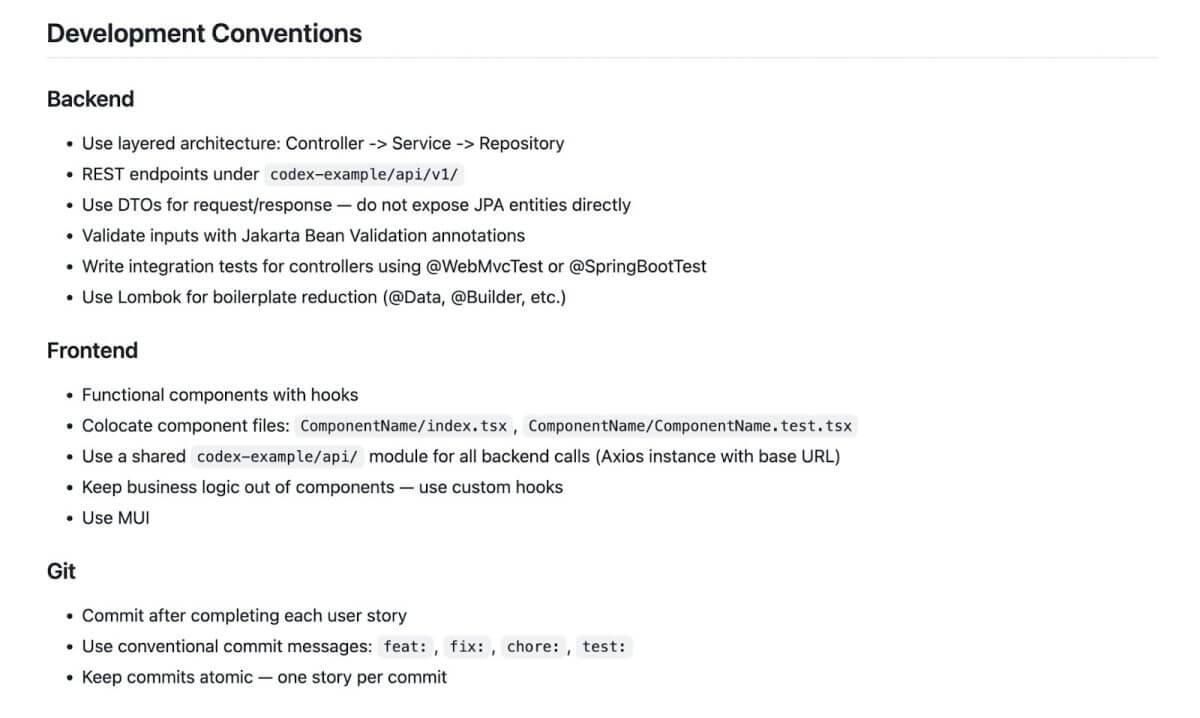

Beyond the stack itself, the agent is also guided by a set of explicit development conventions. These conventions define how code should be organized, validated, tested, and committed, and mirror the standards typically enforced during normal development and code review.

Here are the Development Conventions defined for the agent.

By encoding these conventions directly into the agent’s context, each iteration follows the same architectural and procedural expectations. This keeps execution consistent across stories and reduces variability as the loop progresses, making autonomous delivery more predictable and easier to reason about.

The Autonomous Execution Loop

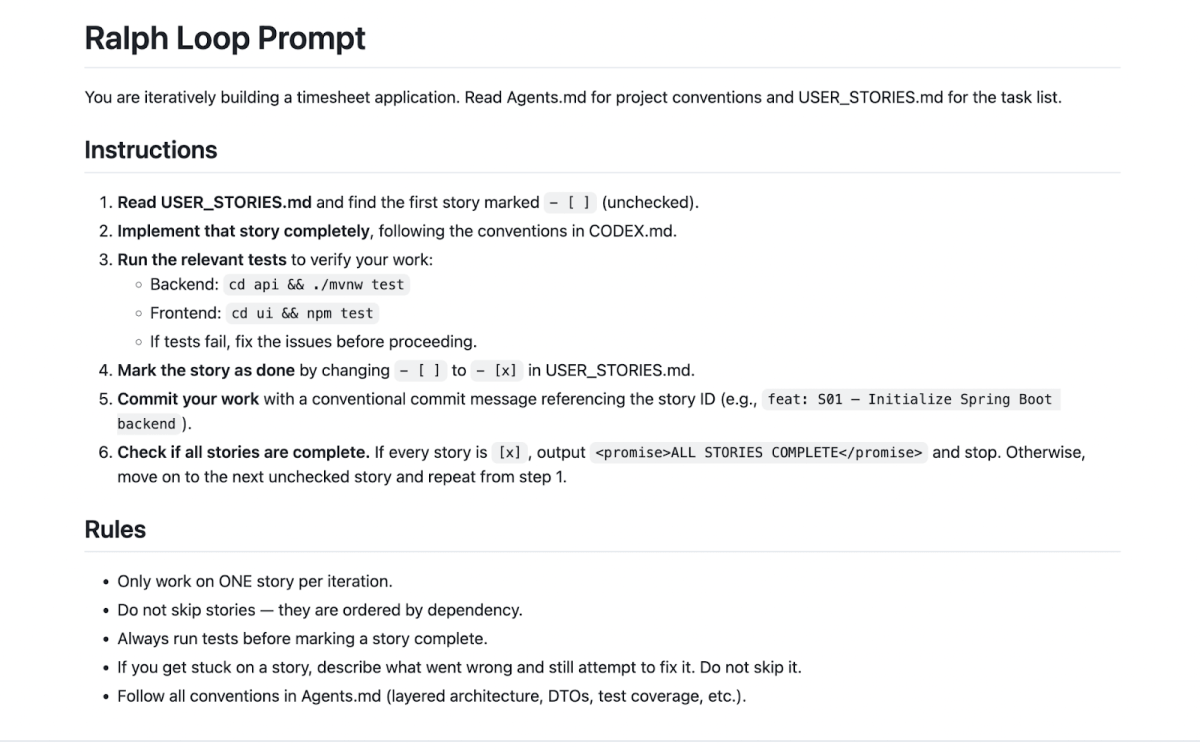

At its core, the loop is a bash script that runs for a defined number of iterations. The behavior of each iteration is controlled by a prompt.md file, which provides the instructions the agent follows every time it executes.

The prompt instructs the agent to read the USER_STORIES.md file, locate the next unchecked story, implement it according to the defined conventions, run the relevant tests, and commit the result. Once a story is completed, it is marked as done so the next iteration can move on to the following item in the list.
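In the loop itself, the agent performs this selection by following the prompt. To make the idea of "next unchecked story" concrete, here is a small sketch of the equivalent deterministic check. It assumes stories are written as Markdown checkboxes (`- [ ]` for open, `- [x]` for done) with an ID such as `US-002`; the actual layout of USER_STORIES.md may differ, and `next_story` and `remaining_stories` are hypothetical helper names.

```shell
# Assumed story format (illustrative only):
#   - [x] US-001: Scaffold project
#   - [ ] US-002: Create employee entity

# Print the first unchecked story line; returns non-zero if none remain.
next_story() {
  grep -m 1 '^- \[ ] ' "$1"
}

# Print how many unchecked stories are left (prints 0 when all are done).
remaining_stories() {
  grep -c '^- \[ ] ' "$1"
}
```

Because the backlog is dependency-ordered, taking the first unchecked line is enough: no story is picked up before the stories it depends on.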

This process repeats until all stories are complete or the specified number of iterations has been reached. Each pass through the loop produces a small, discrete unit of progress: one story implemented, tested, and committed. This is what makes agentic AI delivery observable, auditable, and measurable at the story level.
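The control flow described above can be sketched in a few lines of shell. This is a minimal illustration, not the script from our repositories: `ralph_loop` and the `AGENT_CMD` override are hypothetical names, the `- [ ]` checkbox format is an assumption, and `codex exec` is used as the default non-interactive command (substitute `claude -p` for Claude Code).

```shell
# ralph_loop MAX_ITERATIONS [STORIES_FILE] [PROMPT_FILE]
# Runs the agent once per iteration until no unchecked stories remain
# or the iteration cap is hit. AGENT_CMD (hypothetical) lets you swap
# in another CLI or a stub for a dry run.
ralph_loop() {
  max=$1
  stories=${2:-USER_STORIES.md}
  prompt_file=${3:-prompt.md}
  i=0
  # Continue while at least one unchecked "- [ ]" story is left.
  while grep -q '^- \[ ] ' "$stories"; do
    if [ "$i" -ge "$max" ]; then
      echo "Stopped after $max iteration(s) with stories remaining." >&2
      return 1
    fi
    i=$((i + 1))
    echo "=== Iteration $i of $max ==="
    # Every pass sends the same prompt; the agent picks the next story,
    # implements it, runs the tests, commits, and checks it off.
    # Design choice in this sketch: stop if the agent exits non-zero.
    ${AGENT_CMD:-codex exec} "$(cat "$prompt_file")" || return 1
  done
  echo "All stories complete after $i iteration(s)."
}
```

A run capped at 25 iterations would be started with `ralph_loop 25`. The cap bounds token spend if the agent stalls on a story, while the early exit avoids paying for iterations once the backlog is done.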

Example Repositories for the Agentic AI Delivery Implementation

The best way to understand how this works is to observe it directly. To make this agentic AI delivery model fully transparent, we published the complete implementation and execution loop in public repositories.

The README files explain how to get everything up and running.

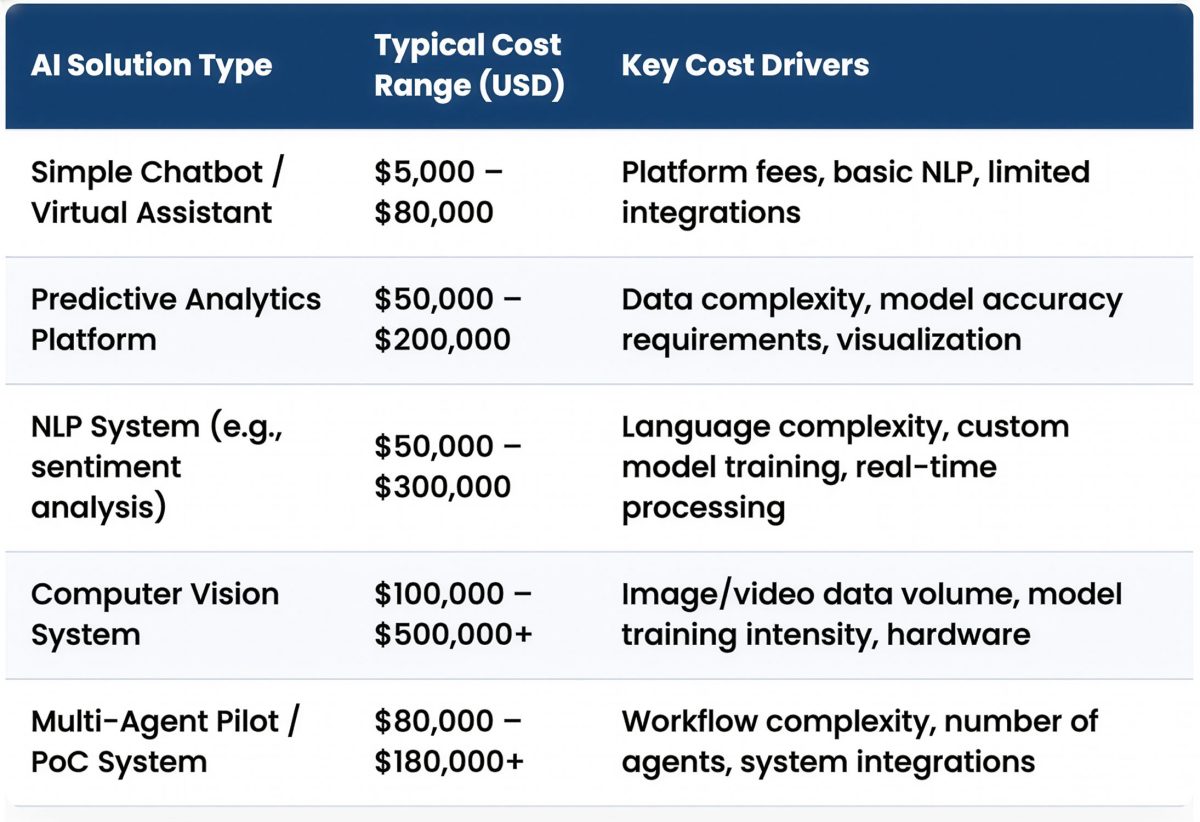

Keep in mind that running these loops requires either a Claude Code or OpenAI account. Each iteration sends tokens to the underlying model, and those tokens are billed according to your subscription plan.

Anthropic’s Claude Code

https://github.com/in-the-keyhole/ralph-timesheet

OpenAI’s Codex

https://github.com/in-the-keyhole/ralph-timesheet-codex

What This Implementation Demonstrates About Agentic AI Delivery

This implementation wasn’t about clever prompting or novel agent behavior. It was about seeing whether autonomous execution could run reliably inside a governed delivery system when intent, constraints, and sequencing were made explicit.

By running a simple, persistent execution loop against a dependency-ordered backlog, we were able to see how agentic delivery behaves when architectural standards, testing expectations, and commit discipline are enforced from the start. Each iteration produced a small, test-gated unit of progress that was visible and traceable end to end.
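One way to spot-check that kind of traceability after a run is to compare completed stories against commit subjects. The sketch below is an illustration under stated assumptions, not part of our published implementation: it assumes completed stories look like `- [x] US-002: ...` and that each commit subject contains its story ID, and `untraced_stories` is a hypothetical helper. It covers only the story-to-commit leg, not the test leg.

```shell
# Print completed story IDs that do not map to exactly one commit.
# $1 = stories file
# $2 = file of commit subjects, e.g. from: git log --format=%s > log.txt
untraced_stories() {
  # Extract IDs like "US-002" from checked-off story lines.
  sed -n 's/^- \[x] \([A-Za-z]*-[0-9]*\):.*/\1/p' "$1" |
  while read -r id; do
    # Count commit subjects mentioning this ID as a whole word.
    n=$(grep -c -w -- "$id" "$2" || true)
    [ "$n" -eq 1 ] || echo "$id ($n commits)"
  done
}
```

Empty output means every completed story has exactly one matching commit; any line printed points to a story that needs a closer look.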

The takeaway is straightforward: the agentic AI delivery model described earlier in this series holds up when applied to real software delivery work. Autonomous agents can execute real delivery work predictably when the surrounding system is structured intentionally.

Why Agentic AI Delivery Works in Enterprise SDLCs

In this series, we introduced agentic AI delivery as an enterprise software delivery model, explored how governed autonomous execution can operate within the SDLC, and then implemented that model using a real agentic execution loop.

The implementation here is intentionally simple. It does not rely on clever prompting or experimental tooling. Instead, it works because the surrounding system is structured deliberately. The constraints are explicit, the backlog is dependency-ordered, the tests are enforced, and the agent operates within clearly defined architectural boundaries. That structure is what allows autonomous execution to produce reliable, observable progress.

What this experiment demonstrates is that agentic AI delivery is not primarily about the intelligence of the agent itself. It is about the delivery system the agent operates within. When intent, sequencing, and governance are clearly defined, autonomous agents can execute meaningful delivery work in a predictable and traceable way.

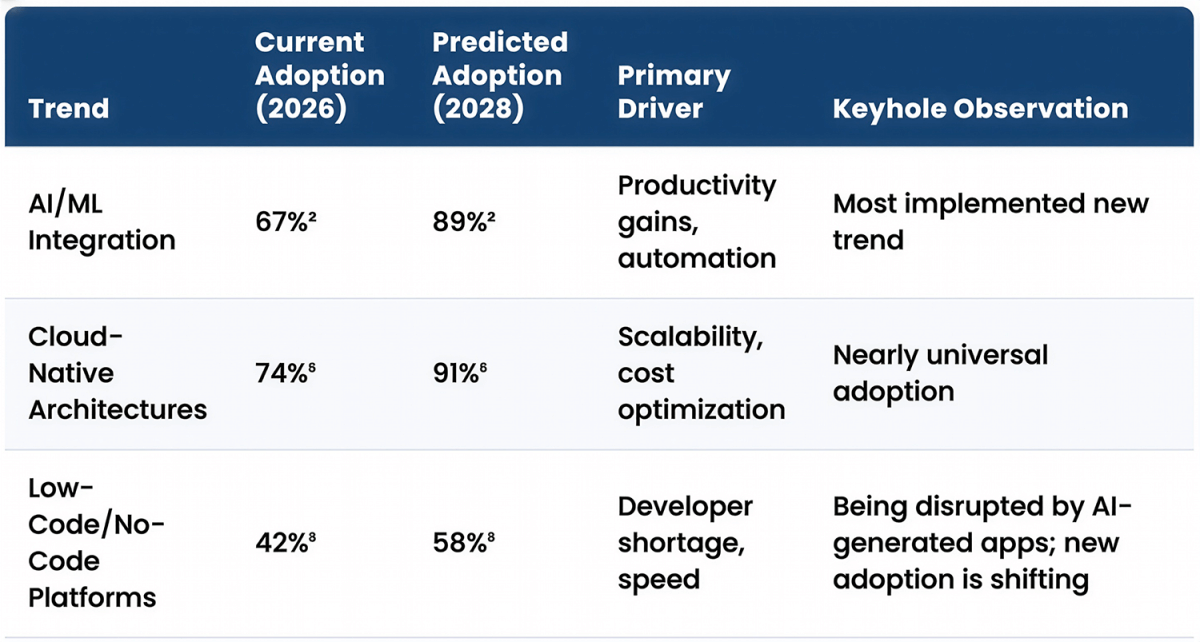

For organizations already investing in modern delivery capabilities such as Cloud-Native Enterprise Delivery, these patterns may represent a natural extension of existing pipelines and automation strategies. Similarly, teams working through Legacy Modernization efforts may find that governed autonomous execution can accelerate well-scoped improvements while still maintaining architectural discipline.

The full example implementation discussed in this article, including the Ralph loop and the dependency-ordered backlog used to build the timesheet application, is available in the public repositories linked above for readers who want to explore or run the experiment themselves.

As AI development tools continue to evolve, the organizations that benefit most will likely be those that focus less on clever prompts and more on intentionally designed delivery systems. When those systems make intent, execution, and validation explicit, agentic AI delivery becomes a practical and scalable extension of modern enterprise SDLCs.
